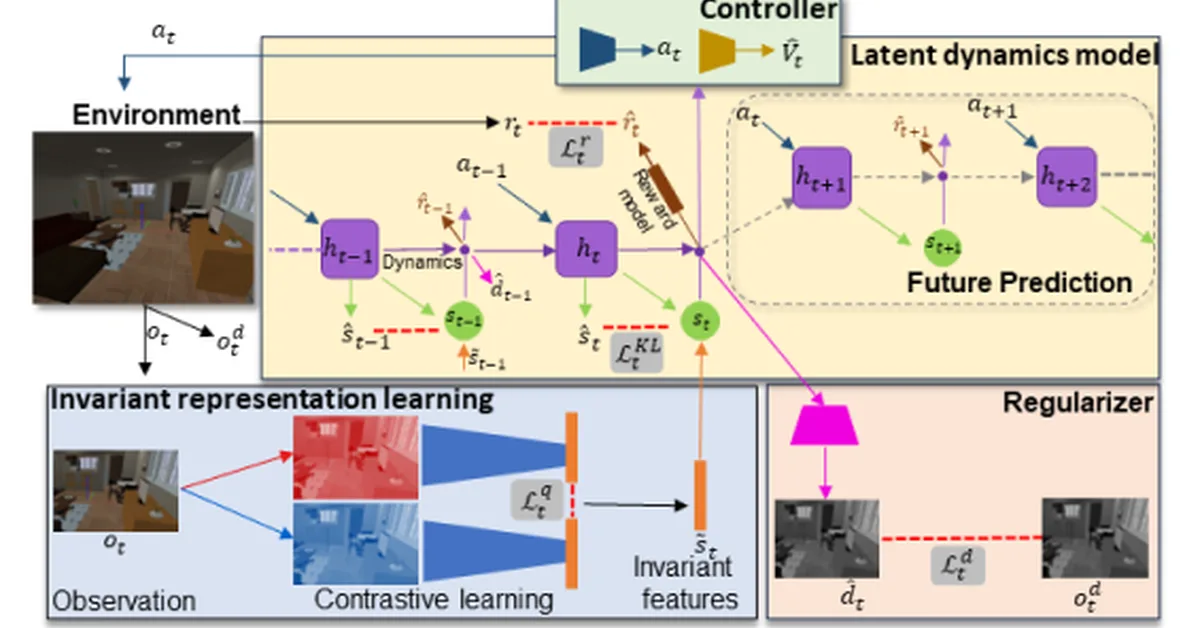

Researchers propose an Evaluation Agent (EA) that assesses the quality of the individual decisions made during AI-driven AutoML, rather than judging only the final outcome. The EA examines decisions along four dimensions: validity, consistency, risk assessment, and counterfactual impact. This shift toward decision-centric evaluation aims to make autonomous machine learning systems more reliable and interpretable, and it offers a framework for auditing the decisions AI agents make.
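The Python sketch below shows one way a per-decision audit could be structured. The `Decision` record, the `evaluate` function, and every scoring rule are hypothetical illustrations, not the EA described in the paper. Validity is reduced to membership in an allowed set of options. Consistency is agreement with earlier choices at the same step. Risk assessment is whether the agent stated a risk rationale at all. Counterfactual impact is the score margin over the best alternative that was actually tried.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    """One step taken by a hypothetical AutoML agent (illustrative fields only)."""
    step: str                  # e.g. "choose_model"
    choice: str                # option the agent picked
    allowed: set[str]          # options that were valid at this step
    score: float               # validation score observed with `choice`
    alternative_scores: dict[str, float] = field(default_factory=dict)  # counterfactual runs
    risk_note: str = ""        # agent's stated risk rationale, if any


def evaluate(decision: Decision, history: list[Decision]) -> dict[str, float]:
    """Score one decision on the four dimensions named in the summary.

    Each rule is a hypothetical placeholder, not the paper's method.
    """
    # Validity: was the choice one of the permitted options?
    validity = 1.0 if decision.choice in decision.allowed else 0.0

    # Consistency: fraction of earlier decisions at the same step that picked the same option.
    same_step = [d for d in history if d.step == decision.step]
    consistency = (
        sum(d.choice == decision.choice for d in same_step) / len(same_step)
        if same_step else 1.0
    )

    # Risk assessment: did the agent document any risk rationale?
    risk = 1.0 if decision.risk_note.strip() else 0.0

    # Counterfactual impact: margin of the chosen option over the best alternative tried
    # (0.0 when no alternatives were evaluated).
    best_alt = max(decision.alternative_scores.values(), default=decision.score)
    counterfactual = decision.score - best_alt

    return {"validity": validity, "consistency": consistency,
            "risk": risk, "counterfactual": counterfactual}


if __name__ == "__main__":
    history = [Decision("choose_model", "xgboost", {"xgboost", "mlp"}, 0.81)]
    d = Decision("choose_model", "mlp", {"xgboost", "mlp"}, 0.78,
                 alternative_scores={"xgboost": 0.81},
                 risk_note="MLP may overfit the small dataset")
    print(evaluate(d, history))
```

A real evaluation agent would use far richer checks than these placeholders. The point of the sketch is that each decision receives its own scores, independent of the pipeline's final metric. In the example, the agent's switch to `mlp` is valid and comes with a stated risk rationale, but it is inconsistent with the earlier choice and scores below the alternative it passed over.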

Read the full article at arXiv cs.AI (Artificial Intelligence)
