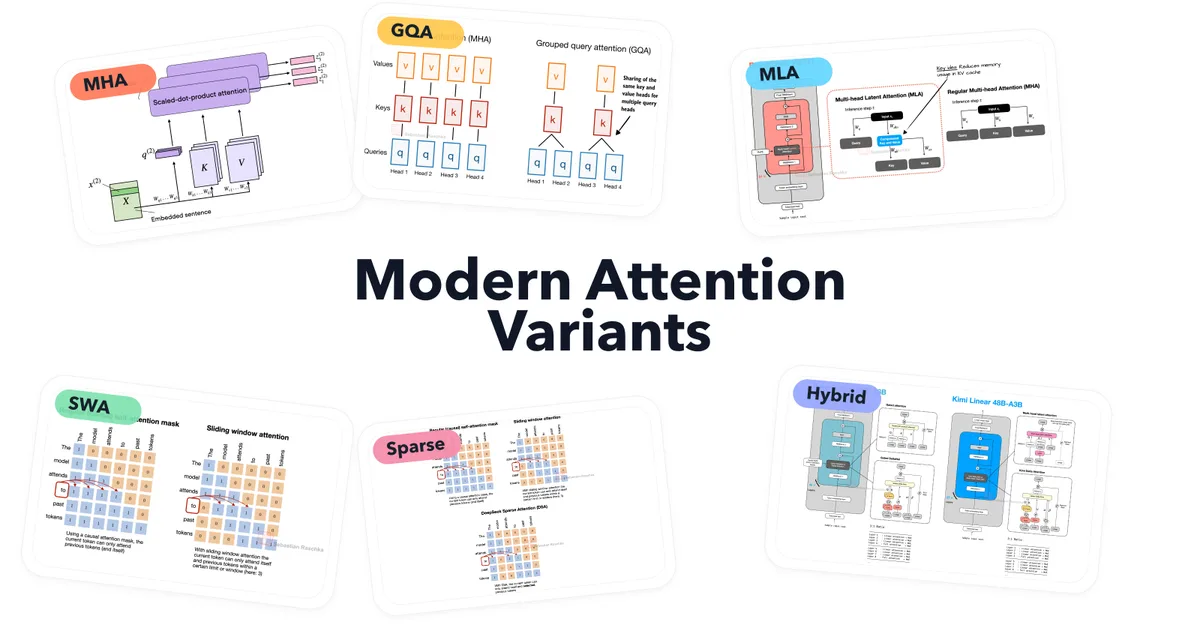

Grouped Query Attention (GQA) became popular as a cost-effective replacement for classic multi-head attention (MHA), offering significant savings in key-value cache storage without major implementation changes. By reducing the number of key-value heads and sharing them across multiple query heads, GQA balances modeling quality with efficiency, making it particularly useful for longer sequence lengths. This approach is especially beneficial for labs aiming to reduce costs while maintaining model performance, positioning GQA as a new standard in large language models (LLMs).

Read the full article at Ahead of AI

Want to create content about this topic? Use Nemati AI tools to generate articles, social posts, and more.