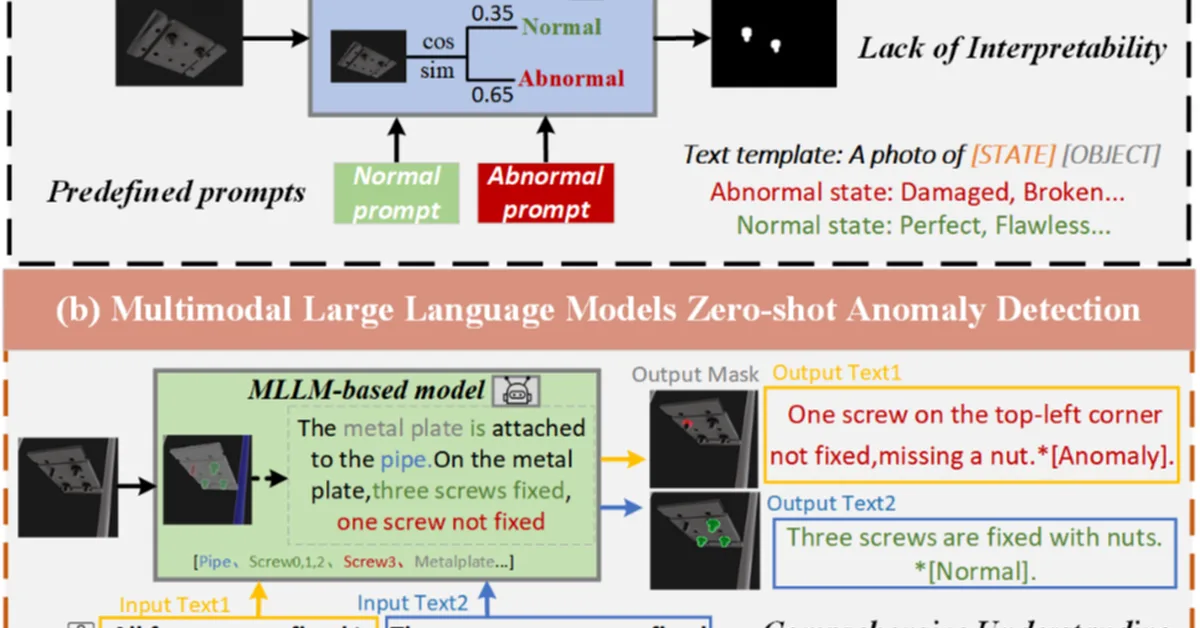

Researchers have introduced AG-VAS, a framework for zero-shot visual anomaly segmentation. It augments large multimodal models with semantic anchor tokens to improve generalization across tasks and to localize anomalies more precisely. For content creators, this could mean more accurate detection and description of anomalous patterns in images without the need for extensive task-specific training data.

Read the full article at arXiv cs.AI (Artificial Intelligence)
