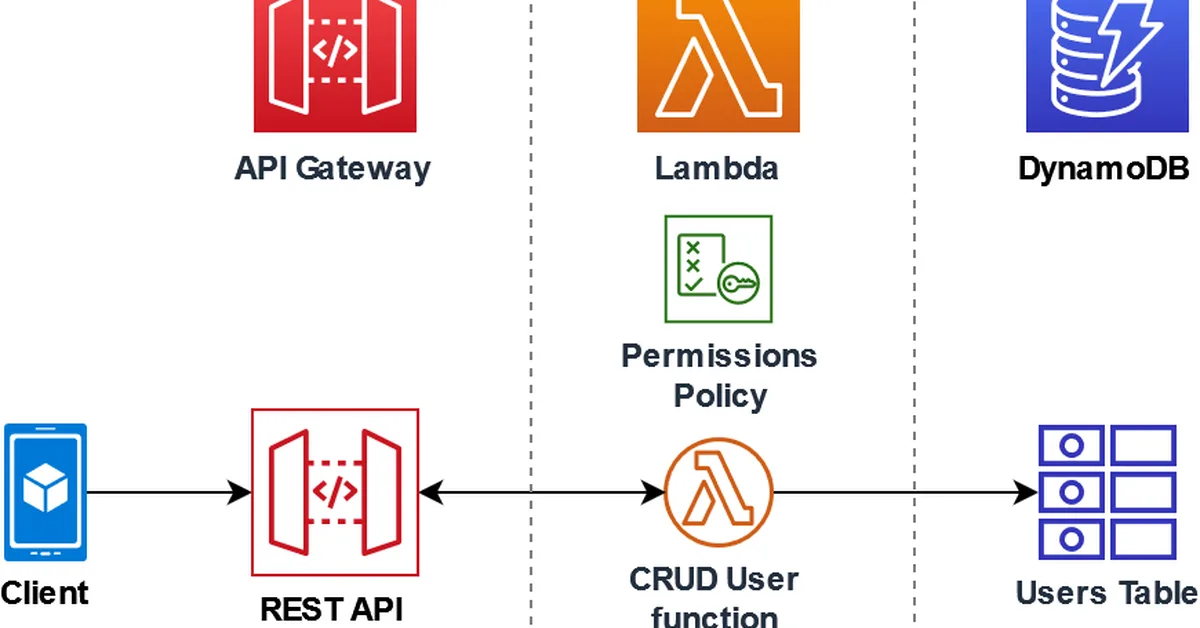

A proposed web standard called "agents.txt" aims to tell AI agents which actions they may perform on a website and under what conditions. It addresses a limitation of the existing robots.txt, which only controls web crawling. The proposal would give content creators control over how AI interacts with their websites, including permissions for training, permissions for API access, and tiers based on agent identity, with the stated goal of improving security and legal compliance.
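To make the contrast concrete, here is a minimal Python sketch. The first half uses the standard library's `urllib.robotparser` to show that robots.txt answers only one question: whether a user agent may fetch a URL. The second half is purely illustrative. This summary does not show the actual agents.txt syntax, so `AgentPolicy`, `is_allowed`, the tier names, and the action names are all hypothetical stand-ins for the kinds of permissions described above, not the proposal's real format.

```python
from dataclasses import dataclass, field
from urllib.robotparser import RobotFileParser

# robots.txt answers a single question: may this user agent fetch this URL?
robots = RobotFileParser()
robots.parse([
    "User-agent: *",
    "Disallow: /private/",
])
can_crawl_public = robots.can_fetch("ExampleBot", "https://example.com/blog/post")
can_crawl_private = robots.can_fetch("ExampleBot", "https://example.com/private/data")

# Hypothetical identity tiers, ordered from least to most trusted.
# These names are assumptions, not part of any published spec.
TIER_RANK = {"anonymous": 0, "verified": 1}


@dataclass
class AgentPolicy:
    """Hypothetical action-level policy (NOT the real agents.txt syntax)."""
    allow_training: bool = False
    allow_api_access: bool = False
    # Maps an action name to the minimum identity tier required for it.
    min_tier_for_action: dict = field(default_factory=dict)


def is_allowed(policy: AgentPolicy, action: str, agent_tier: str) -> bool:
    """Return True if an agent at agent_tier may perform the action."""
    if action == "training":
        return policy.allow_training
    if action == "api":
        return policy.allow_api_access
    required = policy.min_tier_for_action.get(action)
    if required is None:
        return False  # deny any action the policy does not list
    return TIER_RANK[agent_tier] >= TIER_RANK[required]


policy = AgentPolicy(
    allow_training=False,
    allow_api_access=True,
    min_tier_for_action={"submit_form": "verified"},
)
```

With this policy, `is_allowed(policy, "submit_form", "anonymous")` is refused while the same action is permitted for a `"verified"` agent, which is a distinction robots.txt cannot express because it has no notion of actions or agent identity.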

Read the full article at DEV Community
