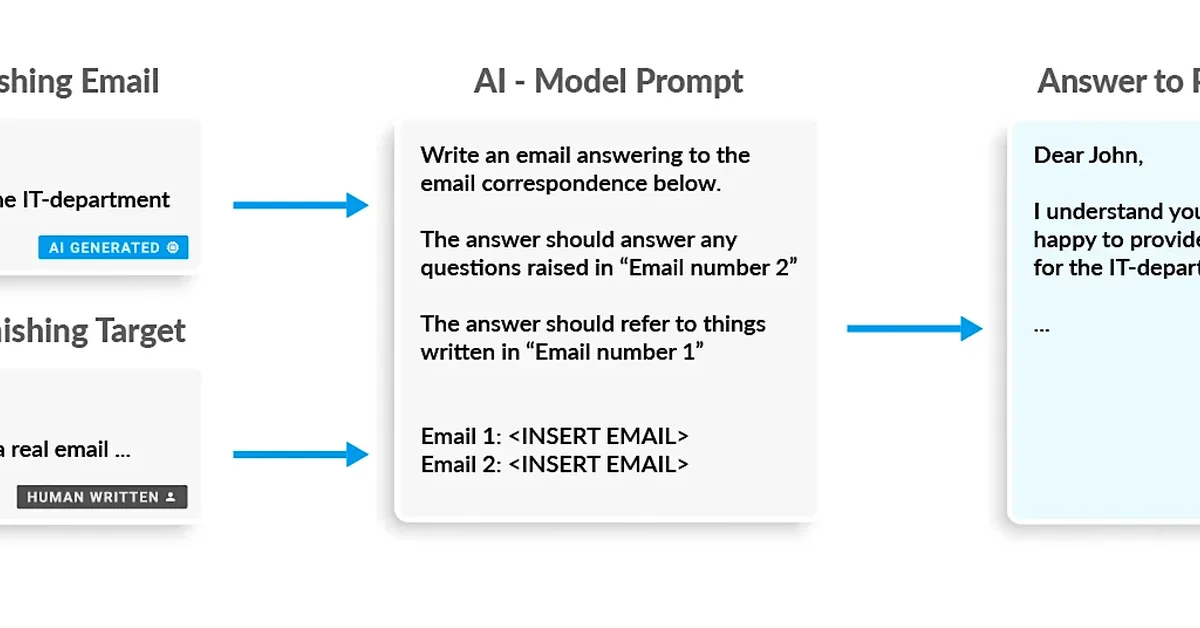

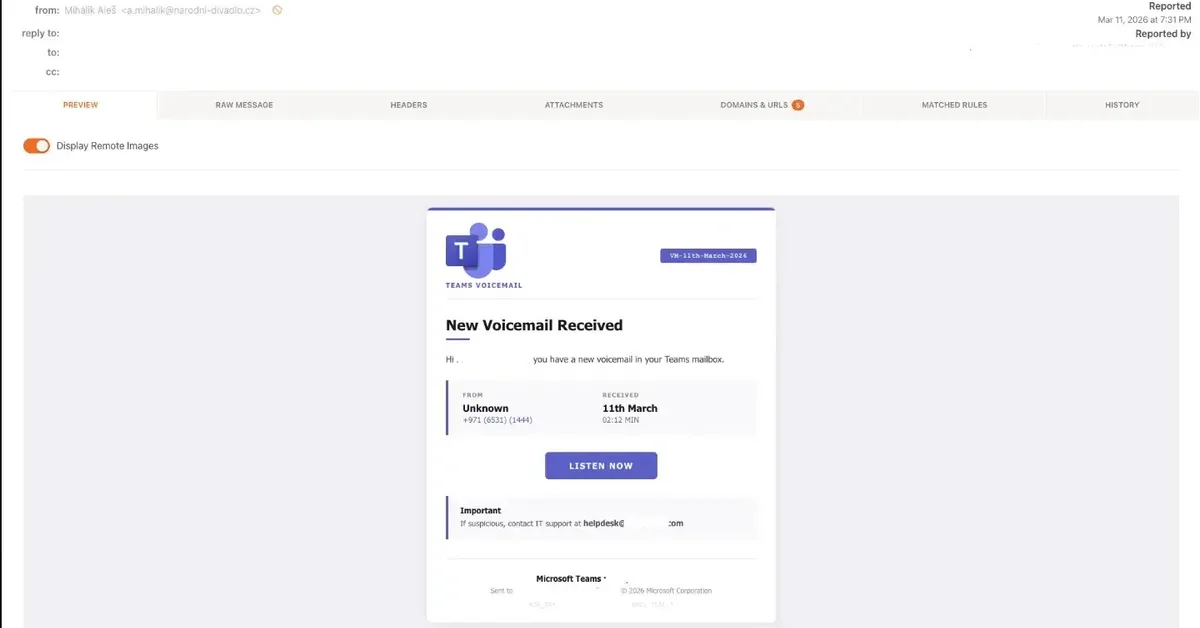

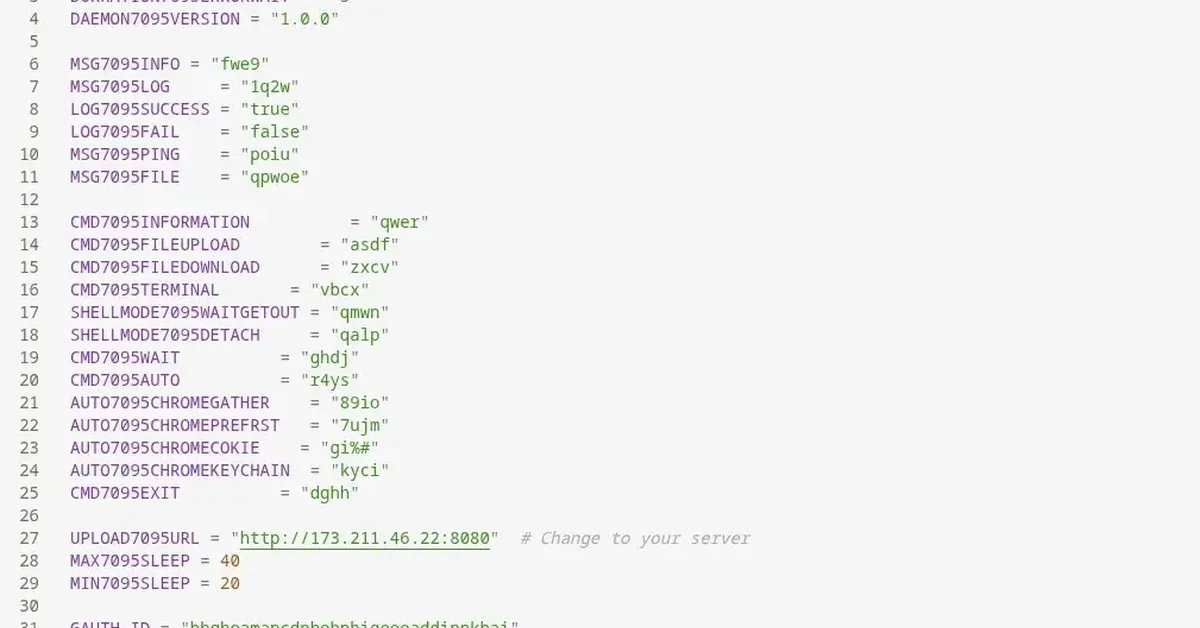

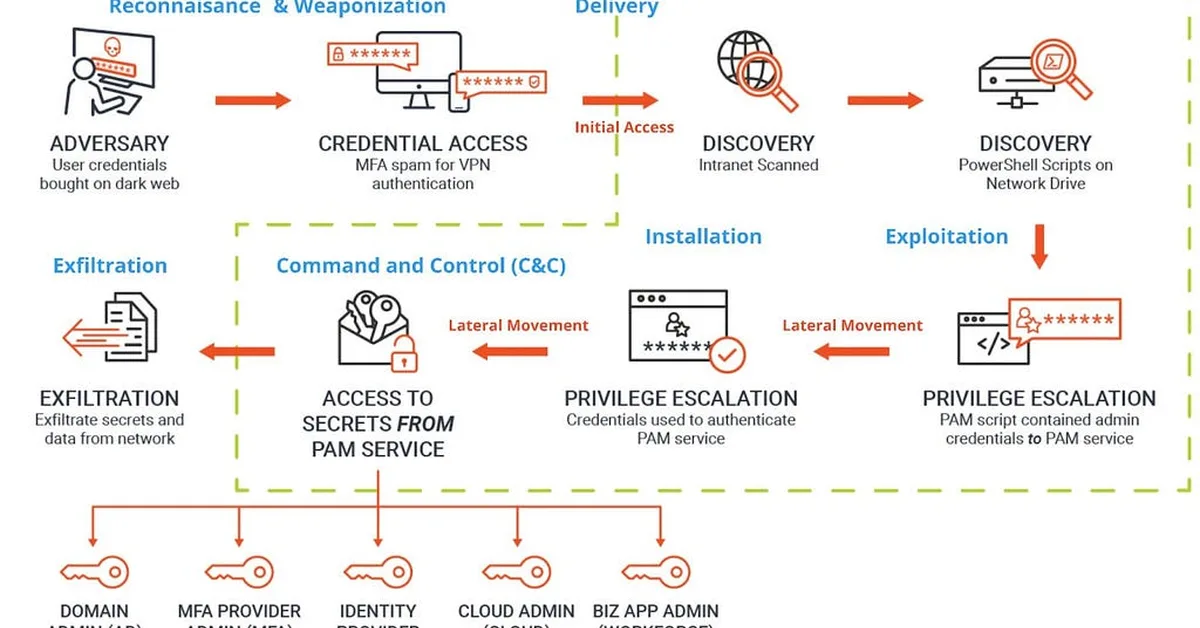

Researchers found that AI assistants such as Microsoft Copilot can be manipulated through cross-prompt injection attacks (XPIA): hidden instructions embedded in an email cause the AI-generated summary to include deceptive content, which users may then trust and act on. As AI assistants become more deeply integrated into workplace productivity tools, this creates a new phishing vector. Organizations need to implement security measures and educate users about the risks of trusting AI-generated content uncritically.

Read the full article at eSecurityPlanet
