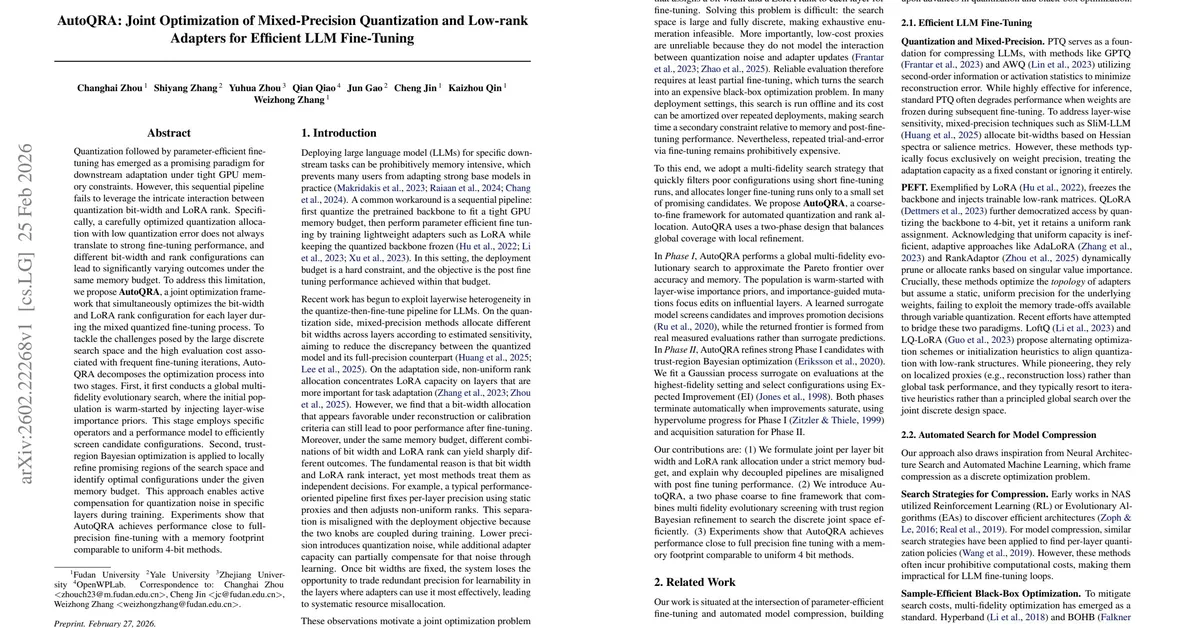

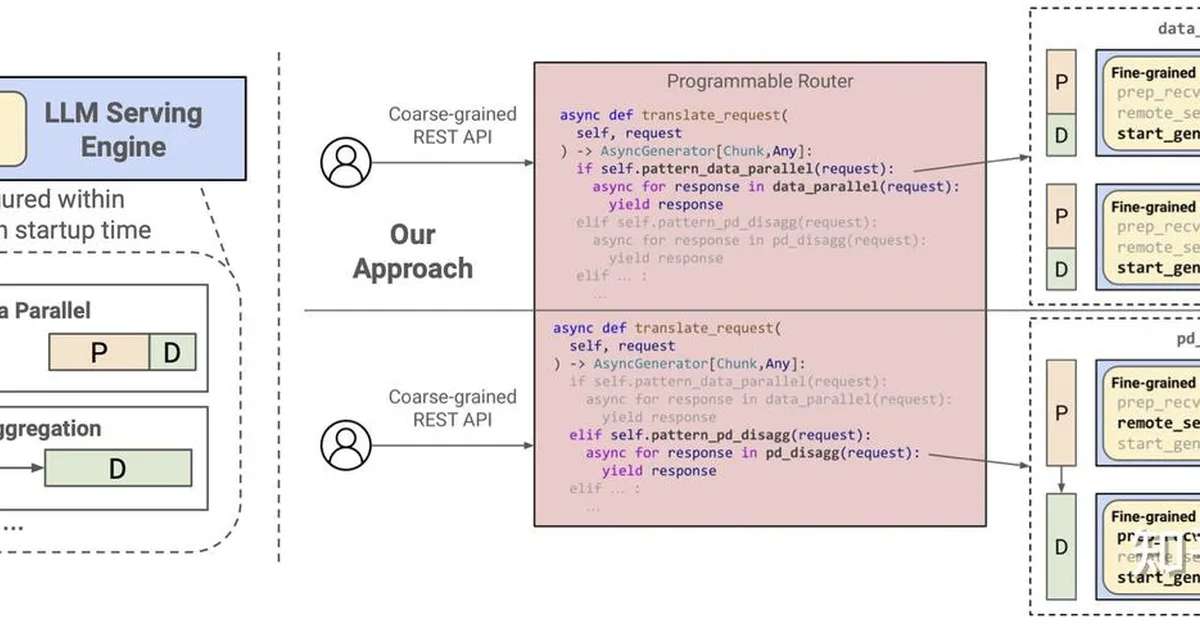

Researchers have introduced AutoQRA, a framework for large language model fine-tuning that jointly optimizes mixed-precision quantization and low-rank adapters, instead of configuring them one after the other in a sequential pipeline. Because bit-width and LoRA rank configurations are chosen together, the method is reported to reach performance close to full precision while using less memory, which helps anyone fine-tuning under GPU memory constraints.
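To make the idea of a joint choice concrete, here is a minimal Python sketch. It is not AutoQRA's algorithm: the memory model (quantized base weights plus 16-bit LoRA factors), the exhaustive search, and the names `layer_memory_bytes`, `config_memory_bytes`, `joint_search`, and `score_fn` are all assumptions made for illustration. It only shows what it means to pick bit-width and rank together under one memory budget.

```python
from itertools import product


def layer_memory_bytes(d_out, d_in, bits, rank):
    """Approximate bytes for one linear layer (assumed memory model)."""
    base = d_out * d_in * bits / 8        # quantized base weight
    adapter = rank * (d_out + d_in) * 2   # LoRA factors A and B, 2 bytes per value
    return base + adapter


def config_memory_bytes(shapes, bits_per_layer, ranks_per_layer):
    """Total bytes for a per-layer (bits, rank) assignment."""
    return sum(
        layer_memory_bytes(d_out, d_in, b, r)
        for (d_out, d_in), b, r in zip(shapes, bits_per_layer, ranks_per_layer)
    )


def joint_search(shapes, bit_choices, rank_choices, budget_bytes, score_fn):
    """Brute-force search over (bits, rank) pairs for every layer at once.

    score_fn is a hypothetical stand-in for a real quality measure such as
    validation accuracy. Returns (score, bits, ranks), or None if no
    configuration fits the budget.
    """
    per_layer = list(product(bit_choices, rank_choices))
    best = None
    for combo in product(per_layer, repeat=len(shapes)):
        bits = [b for b, _ in combo]
        ranks = [r for _, r in combo]
        if config_memory_bytes(shapes, bits, ranks) > budget_bytes:
            continue  # over budget, skip
        score = score_fn(bits, ranks)
        if best is None or score > best[0]:
            best = (score, bits, ranks)
    return best


if __name__ == "__main__":
    # Toy setup: two tiny layers and a placeholder score, not a real metric.
    shapes = [(4, 4), (4, 4)]
    toy_score = lambda bits, ranks: sum(bits) + sum(ranks)
    print(joint_search(shapes, [2, 4], [1, 2], 64, toy_score))
```

A sequential pipeline would fix the bit-widths first and only then pick ranks with whatever memory is left. Searching the pairs together lets the budget be traded between the two. Exhaustive search is only feasible for toy sizes like this one.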

Read the full article at arXiv cs.LG (ML)
