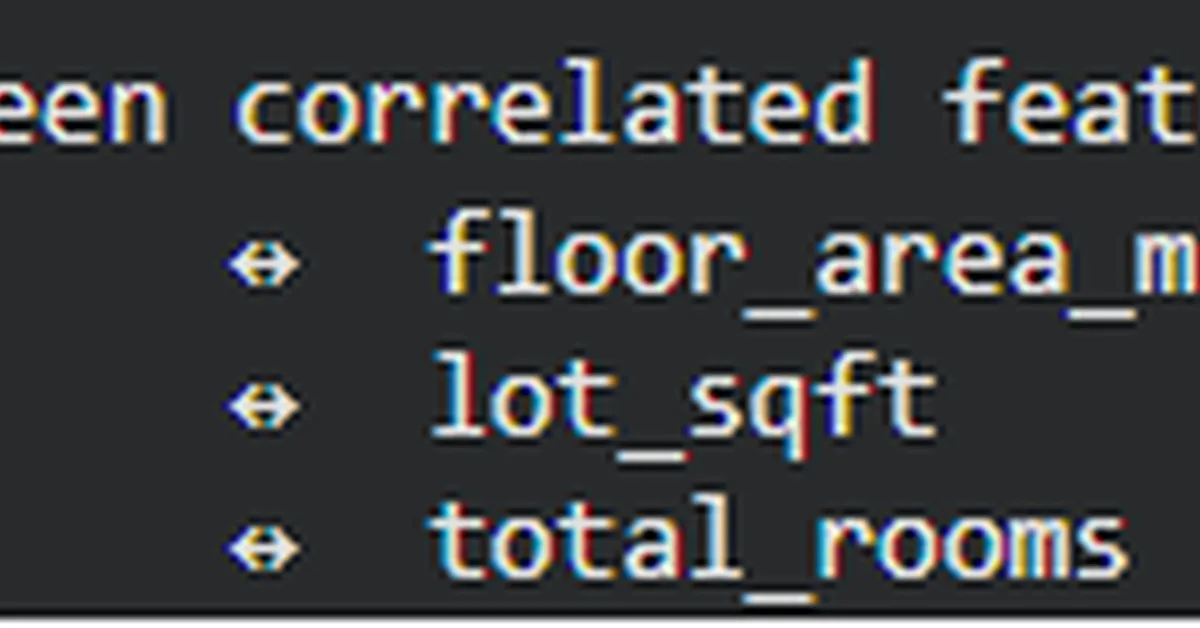

The experiment highlights three costs of excess model complexity in machine learning: a degraded signal-to-noise ratio, unstable coefficients across retraining cycles, and increased sensitivity to data drift. Models that include many correlated or irrelevant features show more variable learned coefficients and react more strongly to shifts in the input distribution than leaner models built only on high-signal features.
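The coefficient-instability effect can be reproduced in a small synthetic sketch. The code below is not the article's experiment: the data generator, the feature counts, the noise levels, and all function names are assumptions chosen for illustration. It refits an ordinary least squares model on fresh samples (standing in for retraining cycles) and measures how much the coefficient of the single high-signal feature moves between fits, first for a lean model and then for one padded with noisy copies of that feature plus pure-noise columns. Sensitivity to data drift is not covered here.

```python
import random
import statistics


def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def fit_ols(rows, targets):
    """Ordinary least squares via the normal equations (no intercept)."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, targets)) for i in range(k)]
    return solve_linear_system(xtx, xty)


def make_dataset(rng, n_rows, n_correlated, n_irrelevant):
    """Assumed generator: one high-signal feature, noisy copies of it,
    and pure-noise columns. Only the signal feature drives the target."""
    rows, targets = [], []
    for _ in range(n_rows):
        signal = rng.gauss(0, 1)
        correlated = [signal + rng.gauss(0, 0.1) for _ in range(n_correlated)]
        irrelevant = [rng.gauss(0, 1) for _ in range(n_irrelevant)]
        rows.append([signal] + correlated + irrelevant)
        targets.append(2.0 * signal + rng.gauss(0, 1))
    return rows, targets


def signal_coefficient_spread(n_correlated, n_irrelevant, n_retrains=30, seed=0):
    """Standard deviation of the signal feature's coefficient across refits."""
    rng = random.Random(seed)
    coefs = []
    for _ in range(n_retrains):
        rows, targets = make_dataset(rng, 200, n_correlated, n_irrelevant)
        coefs.append(fit_ols(rows, targets)[0])  # index 0 is the signal feature
    return statistics.stdev(coefs)


lean_spread = signal_coefficient_spread(n_correlated=0, n_irrelevant=0)
bloated_spread = signal_coefficient_spread(n_correlated=5, n_irrelevant=10)
print(f"lean model spread:    {lean_spread:.3f}")
print(f"bloated model spread: {bloated_spread:.3f}")
```

In this setup the bloated model's spread should come out noticeably larger, because the near-duplicate columns let the fit shift weight among them from one sample to the next. The exact numbers depend on the assumed noise levels and sample size.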

Read the full article at MarkTechPost
