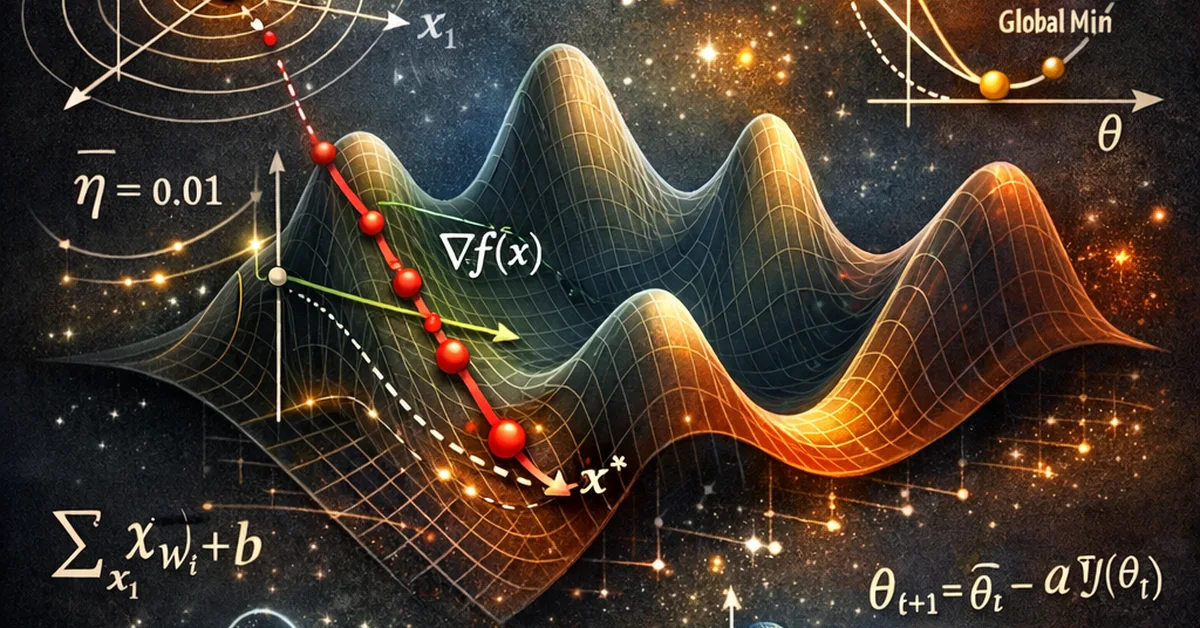

This article explains how Gradient Descent (GD) works in machine learning and why it matters: it is the procedure that adjusts model parameters step by step to minimize a loss function. It covers three variants, Batch, Stochastic, and Mini-Batch, and implements each from scratch in Python with NumPy. It also discusses common pitfalls, namely unscaled features, non-convex loss functions, and poorly chosen learning rates, and suggests remedies such as applying a scaler before fitting the model and adjusting the learning-rate schedule. Finally, it shows how to read GD behavior from loss curves and contour-path plots, so that you can tell whether a model is converging and how efficiently.
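To make the three variants concrete, here is a minimal sketch, not the article's own code. It assumes a linear regression model with mean squared error loss, and the function name `minibatch_gd`, the synthetic data, and the learning rates are all hypothetical choices made for illustration. A single `batch_size` parameter switches between the variants: the full dataset gives Batch GD, `1` gives Stochastic GD, and anything in between gives Mini-Batch GD. The features are standardized first, since one of them is deliberately on a much larger scale than the other.

```python
import numpy as np


def minibatch_gd(X, y, lr=0.1, epochs=200, batch_size=None, seed=0):
    """Fit linear regression by gradient descent on mean squared error.

    Hypothetical helper for illustration only.
    batch_size=None -> Batch GD (whole dataset per update)
    batch_size=1    -> Stochastic GD (one sample per update)
    otherwise       -> Mini-Batch GD
    """
    rng = np.random.default_rng(seed)
    n, d = X.shape
    batch_size = n if batch_size is None else batch_size
    w = np.zeros(d)
    b = 0.0
    losses = []
    for _ in range(epochs):
        order = rng.permutation(n)  # reshuffle the samples every epoch
        for start in range(0, n, batch_size):
            idx = order[start:start + batch_size]
            err = X[idx] @ w + b - y[idx]             # prediction error on this batch
            grad_w = 2.0 * X[idx].T @ err / len(idx)  # d(MSE)/dw
            grad_b = 2.0 * err.mean()                 # d(MSE)/db
            w -= lr * grad_w
            b -= lr * grad_b
        # Record the full-dataset loss once per epoch for a loss curve.
        losses.append(float(np.mean((X @ w + b - y) ** 2)))
    return w, b, losses


# Synthetic data (assumed): the second feature is on a ~100x larger scale.
rng = np.random.default_rng(42)
X_raw = rng.normal(size=(200, 2)) * np.array([1.0, 100.0])
y = 3.0 * X_raw[:, 0] + 0.05 * X_raw[:, 1] + 2.0 + rng.normal(scale=0.1, size=200)

# Standardize the features before fitting so one learning rate suits both.
X = (X_raw - X_raw.mean(axis=0)) / X_raw.std(axis=0)

w_batch, b_batch, loss_batch = minibatch_gd(X, y)
w_mini, b_mini, loss_mini = minibatch_gd(X, y, batch_size=32)
w_sgd, b_sgd, loss_sgd = minibatch_gd(X, y, lr=0.01, epochs=50, batch_size=1)
```

Plotting `loss_batch`, `loss_mini`, and `loss_sgd` against the epoch index gives the kind of loss curve the summary refers to. The smaller learning rate for the stochastic run is a judgment call: single-sample gradients are noisier, so a smaller step tends to keep the updates stable.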

Read the full article at Towards AI - Medium
