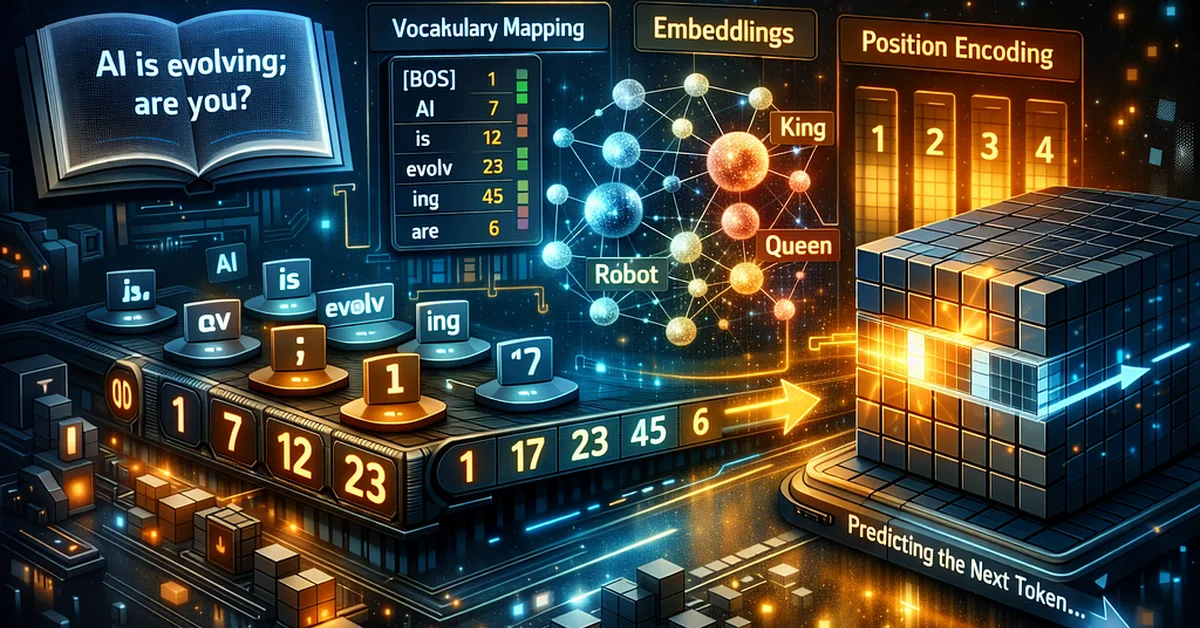

The article explains how large language models (LLMs) process text through a series of mathematical transformations: tokenization, mapping tokens to vocabulary IDs, embedding those IDs as high-dimensional vectors, adding positional encoding, and batching sequences for training. For content creators, understanding these steps shows what happens to text before an LLM generates a human-like response.
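The five steps listed above can be sketched in a few lines of plain Python. This is a minimal illustration, not the article's own code: the whitespace tokenizer, the `<pad>` and `<unk>` tokens, the randomly initialized embedding table, the sinusoidal positional encoding, and every function name here are assumptions chosen to keep the example self-contained. Production models use learned subword tokenizers and trained embeddings.

```python
import math
import random

def tokenize(text):
    # Assumption: whitespace splitting stands in for a real subword tokenizer.
    return text.lower().split()

def build_vocab(sentences):
    # Map each distinct token to an integer ID; 0 and 1 are reserved.
    vocab = {"<pad>": 0, "<unk>": 1}
    for sentence in sentences:
        for token in tokenize(sentence):
            vocab.setdefault(token, len(vocab))
    return vocab

def encode(text, vocab):
    # Unknown tokens fall back to the <unk> ID.
    return [vocab.get(token, vocab["<unk>"]) for token in tokenize(text)]

def positional_encoding(position, dim):
    # Assumption: sinusoidal encoding; even indices use sin, odd indices use cos.
    return [
        math.sin(position / 10000 ** (i / dim)) if i % 2 == 0
        else math.cos(position / 10000 ** ((i - 1) / dim))
        for i in range(dim)
    ]

def make_batch(sentences, vocab, dim=8, seed=0):
    # Hypothetical embedding table: random values in place of trained weights.
    rng = random.Random(seed)
    table = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in vocab]

    # Pad every sequence to the length of the longest one in the batch.
    ids = [encode(sentence, vocab) for sentence in sentences]
    max_len = max(len(seq) for seq in ids)
    padded = [seq + [vocab["<pad>"]] * (max_len - len(seq)) for seq in ids]

    # Add the positional vector to each token's embedding vector.
    batch = [
        [
            [e + p for e, p in zip(table[token_id], positional_encoding(pos, dim))]
            for pos, token_id in enumerate(seq)
        ]
        for seq in padded
    ]
    return padded, batch

sentences = ["the cat sat", "the dog sat down"]
vocab = build_vocab(sentences)
padded, batch = make_batch(sentences, vocab)
print(padded)  # token IDs per sentence, padded to equal length
print(len(batch), len(batch[0]), len(batch[0][0]))  # batch size, sequence length, embedding dim
```

The result is a nested list shaped batch size by sequence length by embedding dimension, which is the form a model would consume during training in this simplified setup.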

Read the full article at Towards AI - Medium
