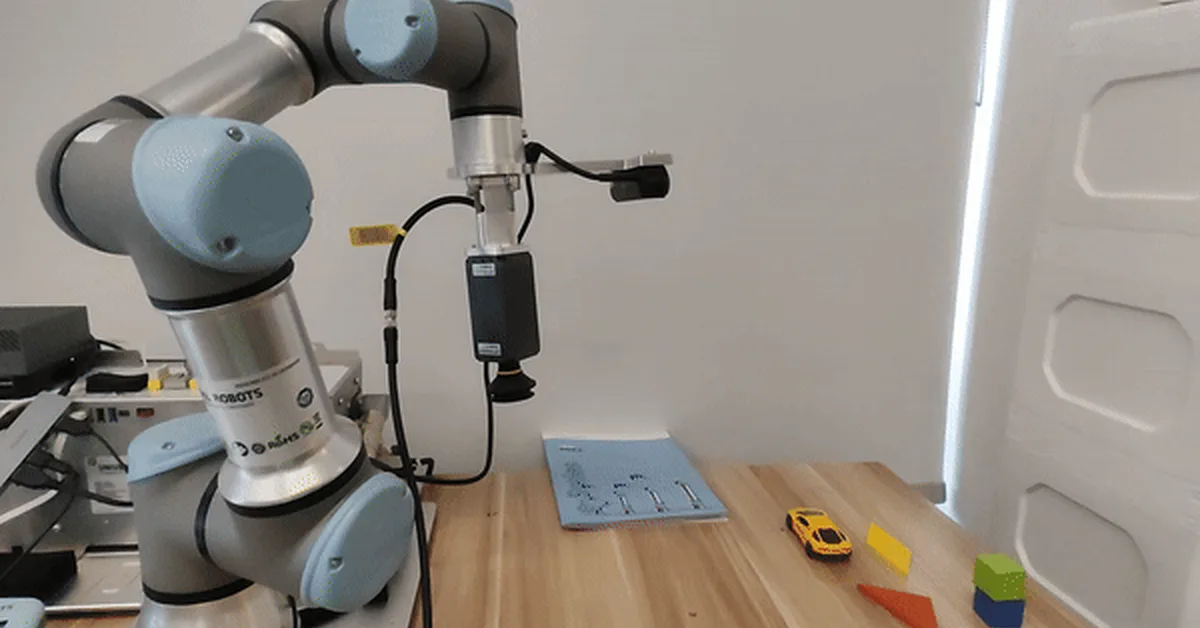

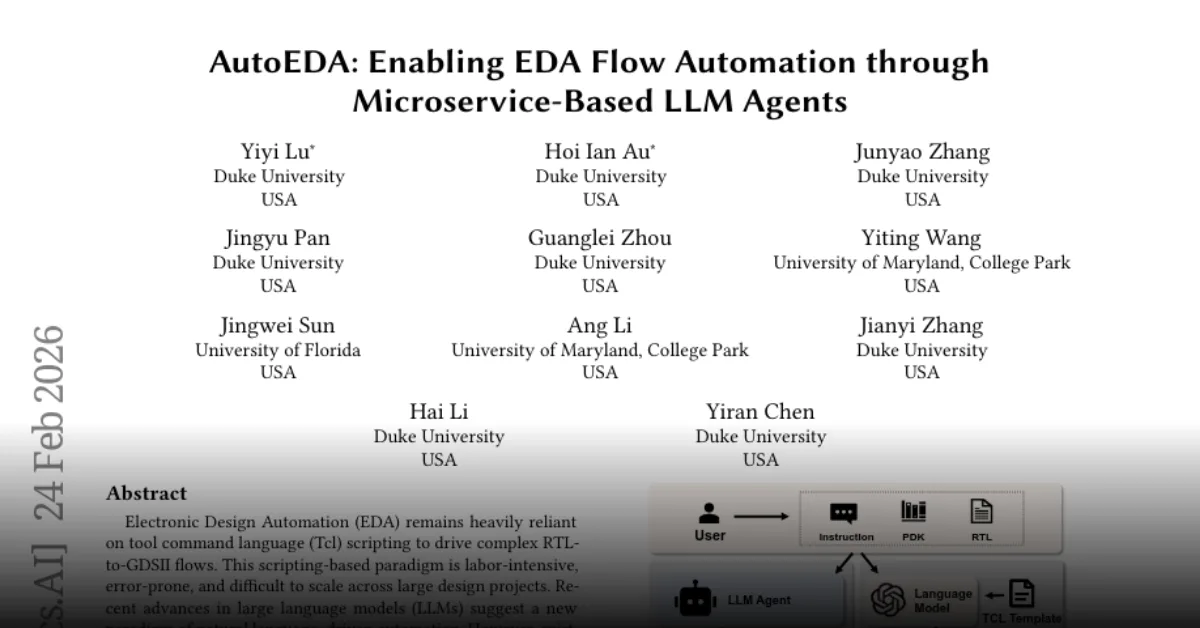

Researchers introduced BFA++, a hierarchical token pruning framework for Vision-Language-Action (VLA) models. It cuts computational cost by pruning visual tokens from multi-view inputs while preserving the task-relevant visual cues, with the aim of keeping task performance intact. The authors report higher success rates and faster inference than existing methods, which matters for real-time robotic manipulation.

Read the full article at arXiv cs.CV (Vision)
