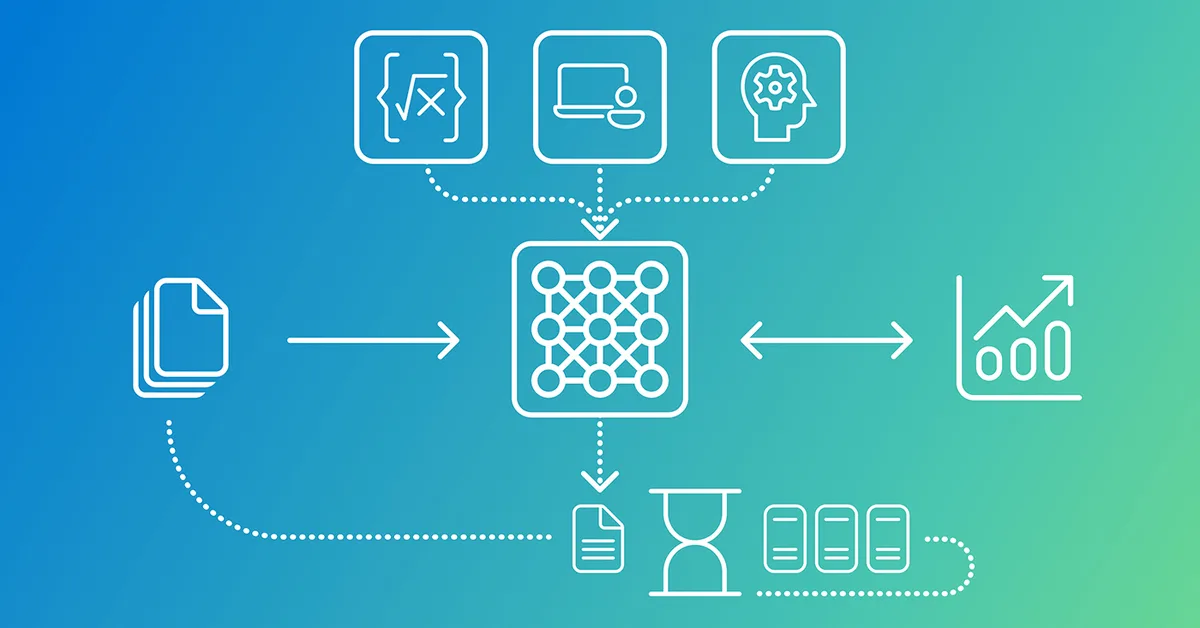

This article outlines four patterns for implementing Retrieval-Augmented Generation (RAG) systems using LangChain and LLMs:

- Chunking Strategy: Tune chunk size and overlap to balance information density against context relevance; overlap keeps text that straddles a chunk boundary retrievable from either side.

- Embedding Choice: Use dense embeddings like Sentence Transformers or sparse embeddings based on TF-IDF, depending on the dataset's characteristics.

- Prompt Engineering: Design prompts that instruct the model to answer only from the retrieved documents rather than from its own prior knowledge, so that answers stay grounded in the provided data.

- Evaluation Techniques: Start with a minimal evaluation of retrieval effectiveness, then add further layers such as answer correctness, faithfulness, and retrieval relevance for production systems.
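As a minimal sketch of the chunking trade-off, the function below splits text into fixed-size character windows that overlap by a set amount. The names `chunk_text`, `chunk_size`, and `overlap` are illustrative assumptions, and this is plain Python rather than LangChain's API. LangChain's `RecursiveCharacterTextSplitter` exposes similar `chunk_size` and `chunk_overlap` settings but tries to split on paragraph and line separators first.

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into windows of `chunk_size` characters that share `overlap` characters.

    Hypothetical helper for illustration only; it ignores word and sentence boundaries.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")
    step = chunk_size - overlap  # distance between the starts of consecutive windows
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks


if __name__ == "__main__":
    print(chunk_text("abcdefghij", chunk_size=4, overlap=2))  # ['abcd', 'cdef', 'efgh', 'ghij']
```

Each window starts two characters after the previous one, so neighbouring chunks share two characters. Larger `chunk_size` values pack more context into each retrieval hit; larger `overlap` values reduce the chance that a fact split across a boundary is lost, at the cost of more, partly redundant chunks.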
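The sparse embedding option can be sketched with TF-IDF vectors and cosine similarity using only the standard library. This is an illustration under stated assumptions: the tokenizer is a simple regex, the IDF uses a common smoothed formula, and `retrieve` and its helpers are hypothetical names, not a LangChain API. A dense setup would replace `tfidf_vector` with a call to an embedding model (for example a Sentence Transformers model) and keep the same cosine ranking.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercased alphanumeric tokens; a real system would use a proper tokenizer.
    return re.findall(r"[a-z0-9]+", text.lower())


def build_idf(docs: list[str]) -> dict[str, float]:
    # Document frequency: in how many documents each term appears.
    df = Counter()
    for doc in docs:
        df.update(set(tokenize(doc)))
    n = len(docs)
    # Smoothed IDF: rarer terms receive larger weights.
    return {term: math.log((1 + n) / (1 + count)) + 1 for term, count in df.items()}


def tfidf_vector(text: str, idf: dict[str, float]) -> dict[str, float]:
    # Sparse vector stored as a dict: only terms present in the text (and in the IDF table) appear.
    tf = Counter(tokenize(text))
    return {term: count * idf[term] for term, count in tf.items() if term in idf}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    dot = sum(weight * b.get(term, 0.0) for term, weight in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[int]:
    """Return the indices of the k documents most similar to the query."""
    # The index is rebuilt on every call for clarity; a real system would cache it.
    idf = build_idf(docs)
    doc_vecs = [tfidf_vector(d, idf) for d in docs]
    q_vec = tfidf_vector(query, idf)
    scores = [cosine(q_vec, v) for v in doc_vecs]
    return sorted(range(len(docs)), key=lambda i: scores[i], reverse=True)[:k]


if __name__ == "__main__":
    docs = [
        "the cat sat on the mat",
        "dogs chase cats in the park",
        "stock prices rose sharply today",
    ]
    print(retrieve("stock prices", docs, k=1))  # [2]
```

Sparse vectors like these favour exact keyword matches, which suits datasets full of identifiers or domain jargon; dense embeddings tend to do better when queries paraphrase the source text.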
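A grounded prompt of the kind described above might look like the template below. The wording, the fallback reply, and the `build_grounded_prompt` helper are illustrative assumptions, not the article's prompt. The `{context}` and `{question}` placeholders use plain `str.format` syntax, which LangChain's `PromptTemplate.from_template` also accepts by default.

```python
GROUNDED_TEMPLATE = """Answer the question using only the context below.
If the context does not contain the answer, reply exactly: "I don't know based on the provided documents."
Do not use outside knowledge.

Context:
{context}

Question: {question}
Answer:"""


def build_grounded_prompt(question: str, retrieved_chunks: list[str]) -> str:
    """Fill the (hypothetical) grounded template with numbered retrieved chunks."""
    # Numbering each chunk lets the model, or a reviewer, point to the source of an answer.
    context = "\n\n".join(f"[{i}] {chunk}" for i, chunk in enumerate(retrieved_chunks, start=1))
    return GROUNDED_TEMPLATE.format(context=context, question=question)


if __name__ == "__main__":
    print(build_grounded_prompt("What rose today?", ["Stock prices rose sharply today."]))
```

The explicit fallback reply gives the model a sanctioned way to decline when retrieval misses, which makes ungrounded answers easier to spot during evaluation.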
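For the minimal retrieval evaluation, one option is to label a small set of queries with the ids of the chunks that answer them, then score the retriever's rankings. The sketch below computes hit rate at k and mean reciprocal rank (MRR), two common retrieval metrics chosen here as an assumption; the article may use others. Answer correctness and faithfulness typically need reference answers or an LLM or human judge, so they are not covered by this sketch.

```python
def _check(retrieved: list[list[int]], relevant: list[set[int]]) -> None:
    if len(retrieved) != len(relevant):
        raise ValueError("need exactly one relevance set per query")


def hit_rate_at_k(retrieved: list[list[int]], relevant: list[set[int]], k: int) -> float:
    """Fraction of queries whose top-k results contain at least one relevant chunk id."""
    _check(retrieved, relevant)
    if not retrieved:
        return 0.0
    hits = sum(1 for ranked, gold in zip(retrieved, relevant) if gold & set(ranked[:k]))
    return hits / len(retrieved)


def mean_reciprocal_rank(retrieved: list[list[int]], relevant: list[set[int]]) -> float:
    """Average of 1/rank of the first relevant result per query (0 if none is retrieved)."""
    _check(retrieved, relevant)
    if not retrieved:
        return 0.0
    total = 0.0
    for ranked, gold in zip(retrieved, relevant):
        for rank, doc_id in enumerate(ranked, start=1):
            if doc_id in gold:
                total += 1.0 / rank
                break  # only the first relevant hit counts
    return total / len(retrieved)


if __name__ == "__main__":
    # Hypothetical labelled data: ranked chunk ids per query, and the ids that answer it.
    retrieved = [[2, 0], [1, 0], [0, 1]]
    relevant = [{2}, {0}, {2}]
    print(hit_rate_at_k(retrieved, relevant, k=2))  # 0.6666666666666666
    print(mean_reciprocal_rank(retrieved, relevant))  # 0.5
```

Tracking these numbers while varying chunk size, overlap, or the embedding type gives a quick, cheap signal before investing in answer-level evaluation.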

These patterns help you build effective RAG systems tailored to specific datasets and requirements.

Read the full article at DEV Community
