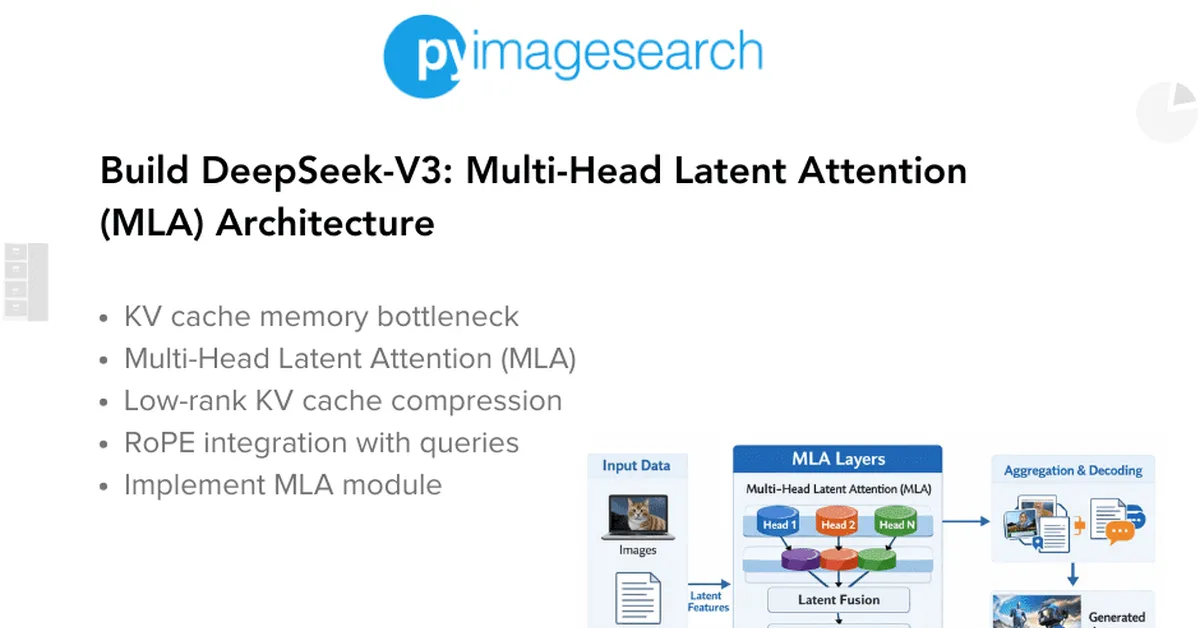

The Multihead Latent Attention (MLA) is an advanced attention mechanism designed to enhance efficiency in transformer models through compression/decompression of queries and key-values, LoRA-style low-rank projections for computational savings, and RoPE with separate content and positional embeddings. It integrates causal masking for autoregressive tasks, ensuring tokens attend only to past positions while incorporating both content similarity and positional information into attention scores. The mechanism applies a residual connection after dropout regularization on the output, contributing to improved model performance in language modeling tasks.

Read the full article at Blog - PyImageSearch

Want to create content about this topic? Use Nemati AI tools to generate articles, social posts, and more.