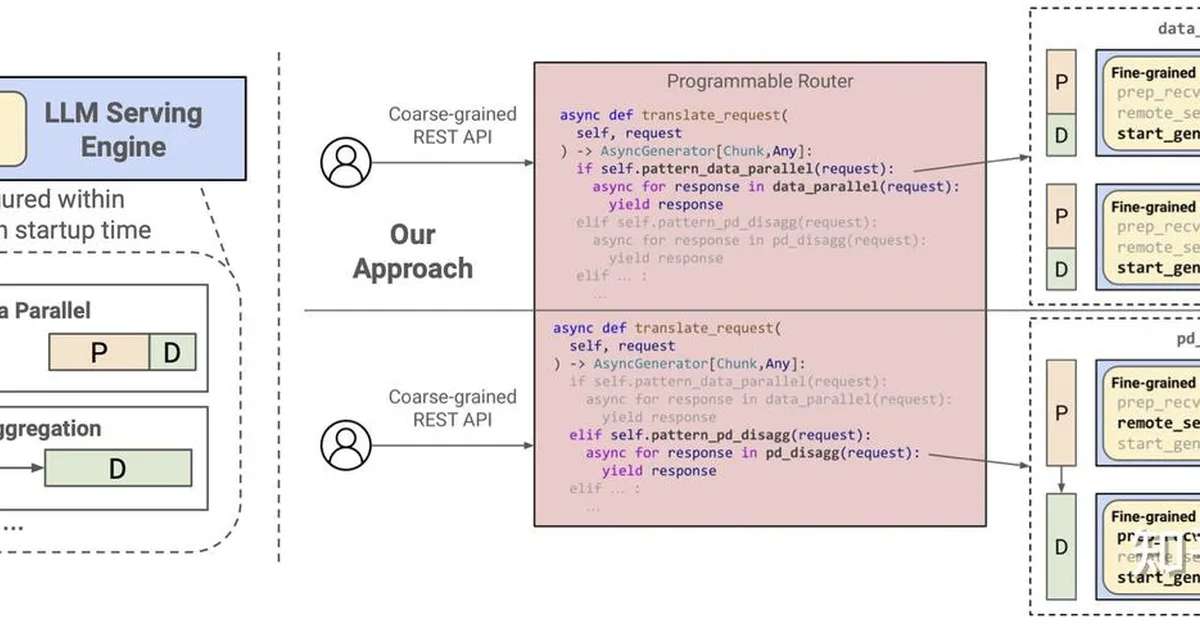

Researchers have characterized how energy and performance trade-offs in large language model (LLM) inference vary across workloads and GPU scaling. They find that lightweight semantic features predict inference difficulty better than input length alone. The study reports energy savings of up to 42% from lowering GPU frequency, with only a minimal increase in latency, and suggests that future systems consider workload-aware model selection and phase-specific hardware adjustments to improve efficiency.
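Why can running a GPU slower save energy even though each request takes longer? A rough way to see it, assuming energy is simply average power multiplied by runtime, is that the energy ratio equals the power ratio times the latency ratio. The minimal sketch below illustrates that arithmetic only. The function name `relative_energy_saving` and the input numbers are hypothetical and are not taken from the study or its method.

```python
def relative_energy_saving(power_ratio: float, latency_ratio: float) -> float:
    """Fraction of energy saved relative to a baseline run.

    Assumption (simplified model, not the study's method):
    energy = average power x runtime, so the new-to-baseline energy
    ratio is power_ratio * latency_ratio.
    """
    if power_ratio <= 0 or latency_ratio <= 0:
        raise ValueError("ratios must be positive")
    return 1.0 - power_ratio * latency_ratio


# Hypothetical numbers, not from the study: a lower GPU clock cuts average
# power to 70% of baseline while latency grows by 10%.
saving = relative_energy_saving(power_ratio=0.70, latency_ratio=1.10)
print(f"energy saved: {saving:.0%}")
```

Under these made-up inputs the saving is about 23%. A negative result would mean the latency penalty outweighed the power reduction.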

Read the full article at arXiv cs.LG (ML)
