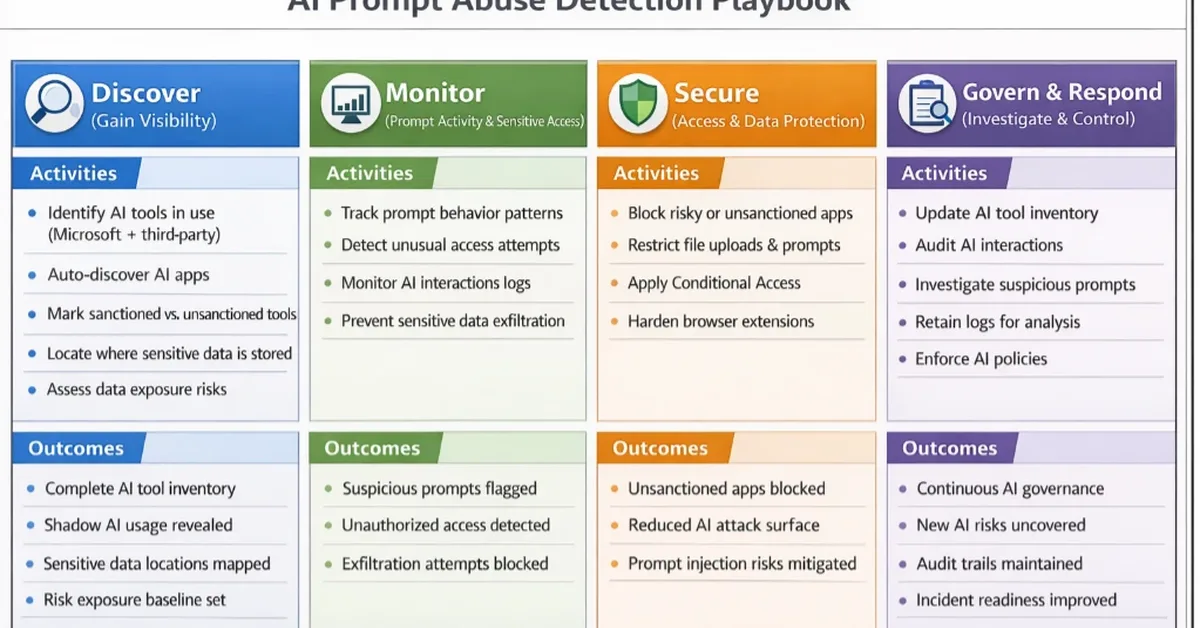

This article discusses strategies for detecting and analyzing prompt abuse in AI tools, focusing on indirect prompt injection techniques that manipulate AI behavior through cleverly crafted inputs like hidden URL fragments. It outlines a playbook for security teams to gain visibility, monitor prompt activity, secure access, investigate and respond to suspicious activities, and maintain continuous oversight. The guidance emphasizes the use of Microsoft's ecosystem tools such as Defender for Cloud Apps, Purview DLP, Entra ID conditional access, and Microsoft Sentinel to detect early signs of manipulation and apply safeguards.

Read the full article at Malware Analysis, News and Indicators - Latest topics

Want to create content about this topic? Use Nemati AI tools to generate articles, social posts, and more.

![[AINews] GPT 5.4: SOTA Knowledge Work -and- Coding -and- CUA Model, OpenAI is so very back](https://nerdstudio-backend-bucket.s3.us-east-2.amazonaws.com/media/blog/images/articles/c72095eb8e6c4b7f.webp)