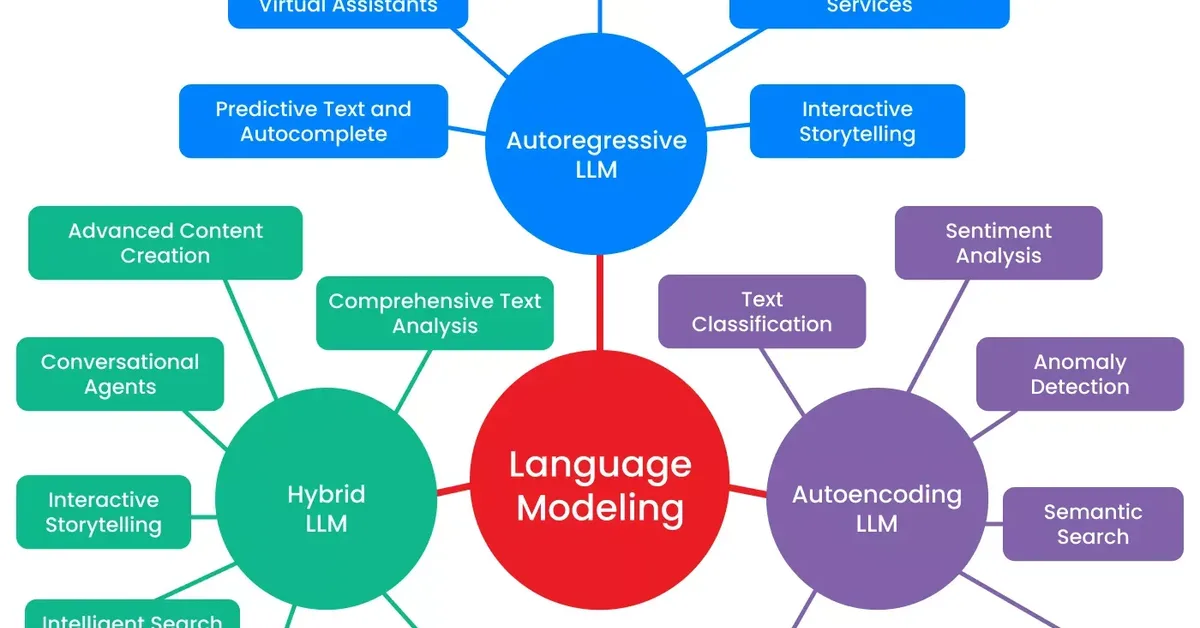

A study on arXiv compares the word entropy of large language model (LLM) output with that of natural language and finds that LLM output has lower word entropy than natural speech or writing. The research aims to quantify the information uncertainty involved in LLM training, particularly when web-generated data is used for further training. The results carry implications for content creators, who should be aware of model limitations and unpredictability.
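To make "word entropy" concrete, here is a minimal Python sketch of one common way to estimate it: the Shannon entropy of the unigram word distribution, in bits per word. This is an illustration only. The summary does not say which estimator, tokenization, or corpora the study uses, so the function name `word_entropy`, the whitespace tokenization, and the two sample strings are all assumptions made for this example.

```python
import math
from collections import Counter


def word_entropy(text: str) -> float:
    """Estimate Shannon entropy (bits per word) from unigram word frequencies.

    Assumption: words are lowercased and split on whitespace. The study's
    actual tokenization and estimator may differ.
    """
    words = text.lower().split()
    if not words:
        return 0.0
    counts = Counter(words)
    total = len(words)
    # H = -sum(p * log2(p)) over the observed word probabilities
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# Hypothetical sample texts, not data from the study.
repetitive = "the cat sat the cat sat the cat sat"
varied = "a quick brown fox jumps over one lazy sleeping dog"

print(f"repetitive: {word_entropy(repetitive):.3f} bits/word")
print(f"varied:     {word_entropy(varied):.3f} bits/word")
```

Text that reuses a small set of words scores lower than text that spreads its probability mass over many different words, which is the sense in which lower word entropy indicates more predictable output. Estimates like this are sensitive to sample size, so comparisons are only meaningful between texts of similar length.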

Read the full article at arXiv cs.CL (NLP)
