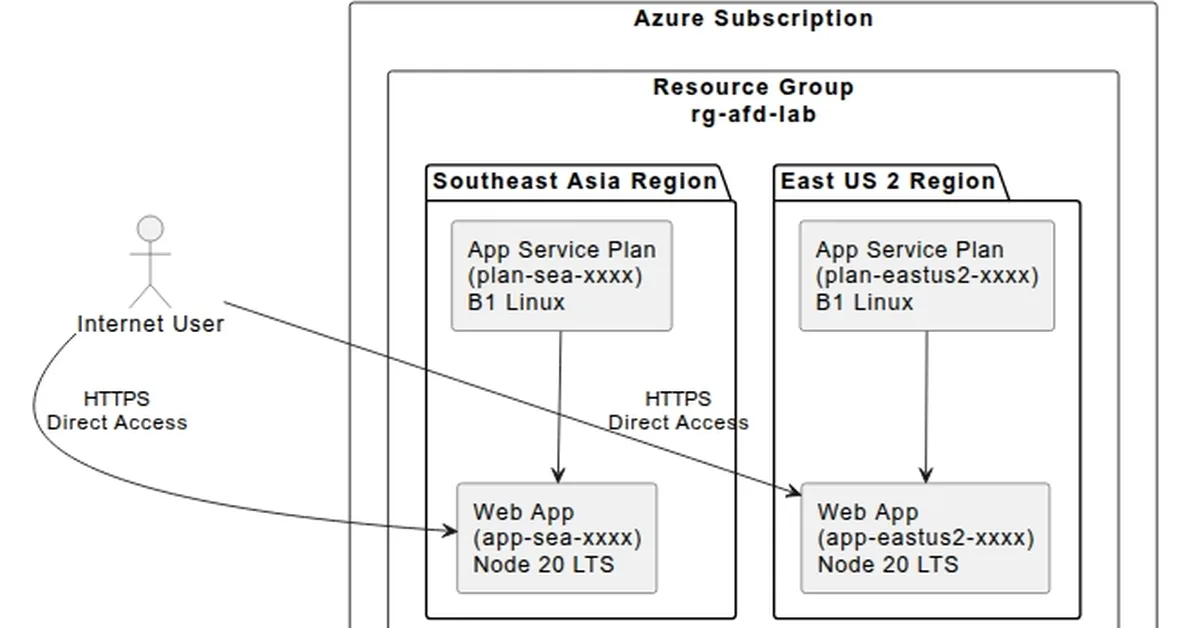

The article discusses moving machine learning models from notebook environments to scalable, high-traffic production systems, using Kubernetes and Ray for distributed computing. It highlights the importance of feature stores, efficient GPU utilization, and observability tools such as Prometheus and Grafana in keeping AI infrastructure reliable and cost-effective. Key takeaway: adopt robust infrastructure components only when they are actually needed, to avoid unnecessary complexity and cost.

Read the full article at The New Stack
