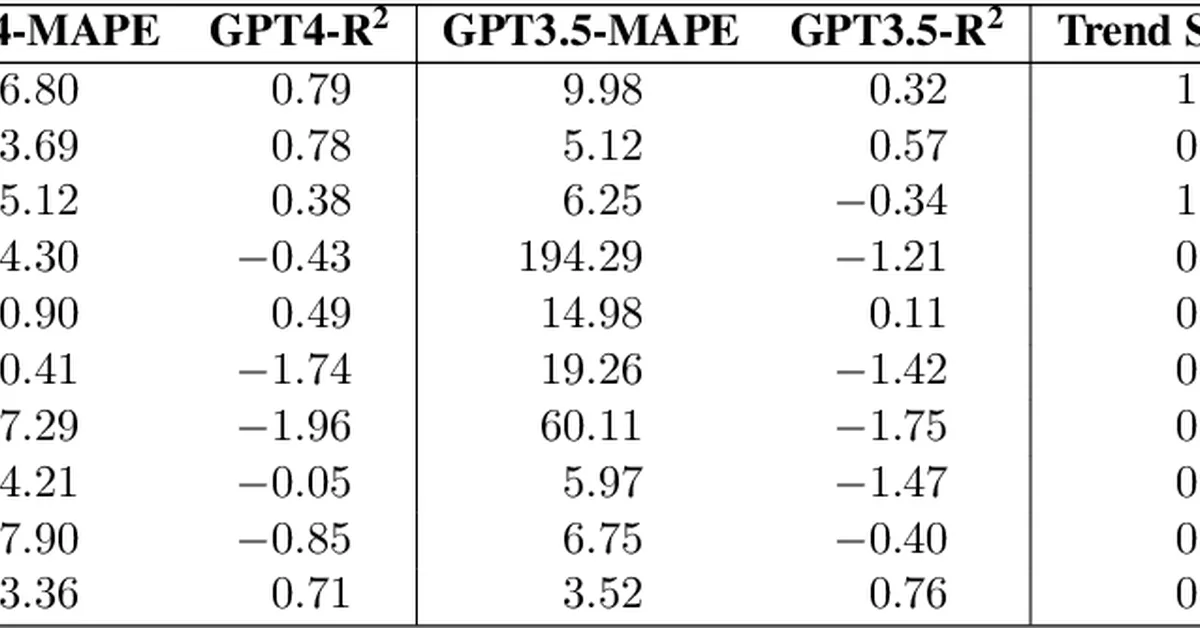

Researchers evaluated how well pre-trained large language models (LLMs) perform at time series forecasting. They found that LLMs show some promise, but their performance falls short of models trained specifically on large-scale time series data. The key takeaway for content creators is that unbiased evaluation methods matter when assessing LLM capabilities in specialized tasks such as time series prediction.

Read the full article at arXiv cs.LG (ML)
