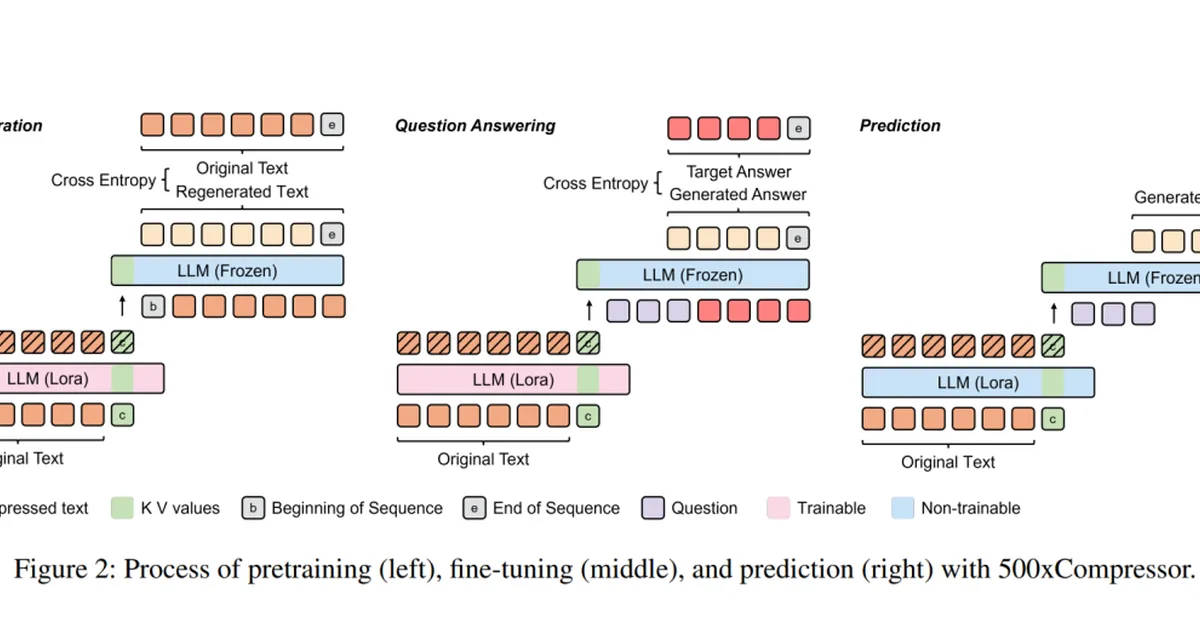

Google has unveiled TurboQuant, an AI compression algorithm that it says can reduce the memory usage of large language models by 6x without compromising output quality. Cutting memory requirements this much could lower computational costs and improve performance in generative AI applications, making these models more accessible and efficient to run. Content creators may benefit from faster processing times and lower hardware requirements when using advanced AI tools.

Read the full article at Ars Technica