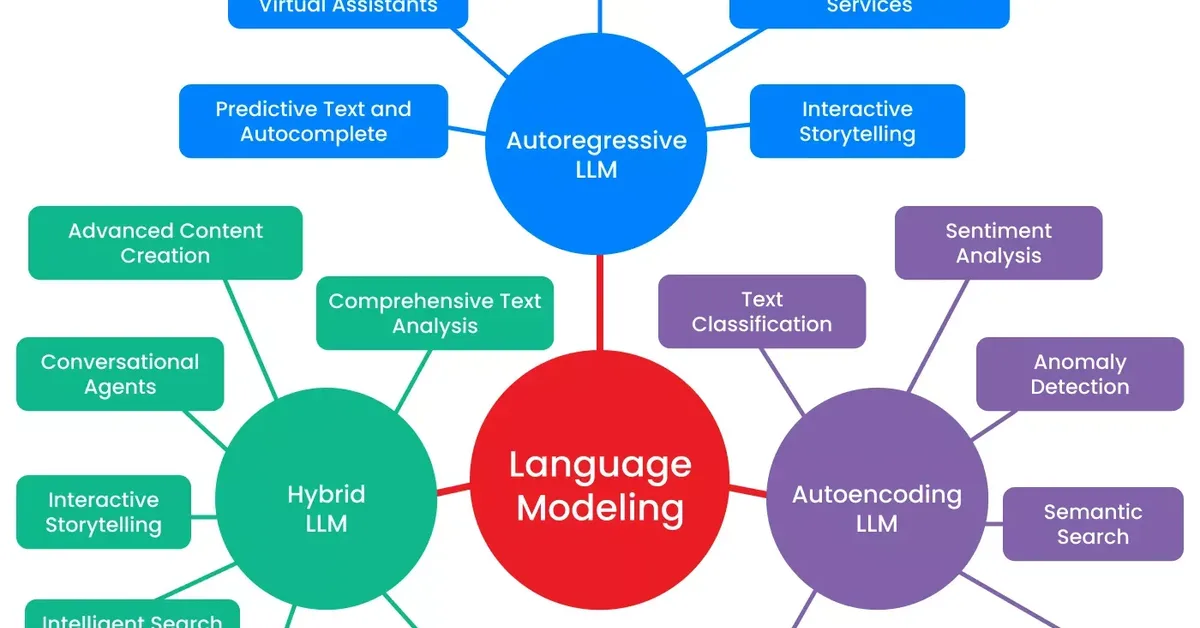

A study challenges the effectiveness of latent visual reasoning in multimodal large language models, identifying two critical disconnects: one between the input and the latent tokens, and one between the latent tokens and the final answer. The authors propose CapImagine, a simpler method that explicitly instructs the model to imagine in text, and report that it outperforms complex latent-space approaches on vision-centric tasks.

Read the full article at arXiv cs.CL (NLP)

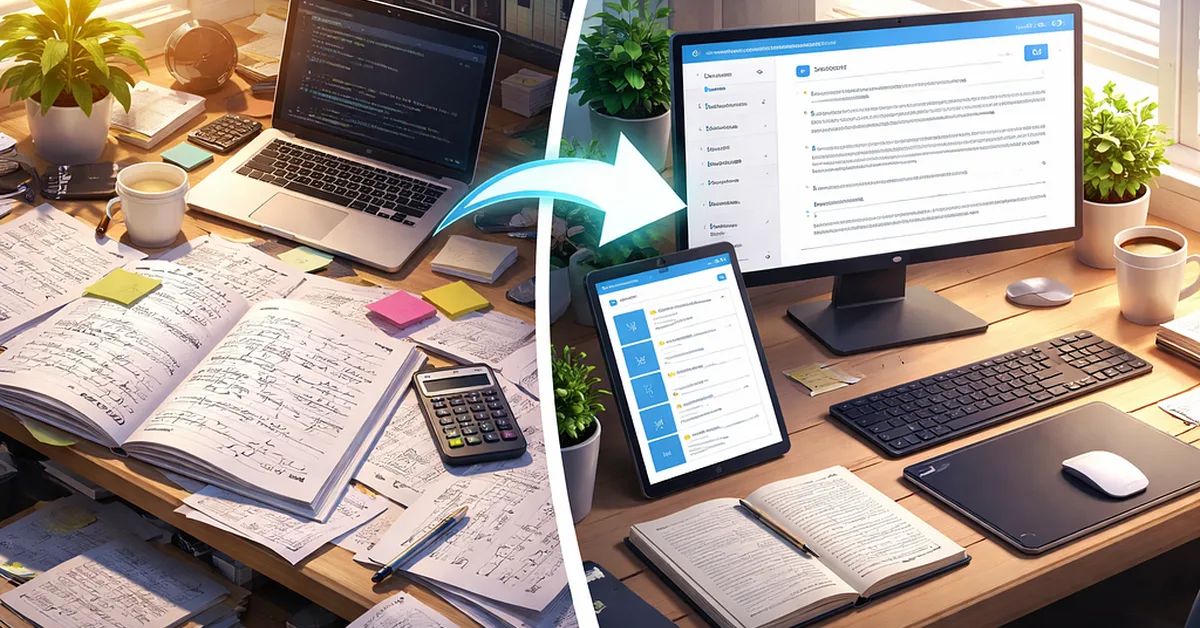