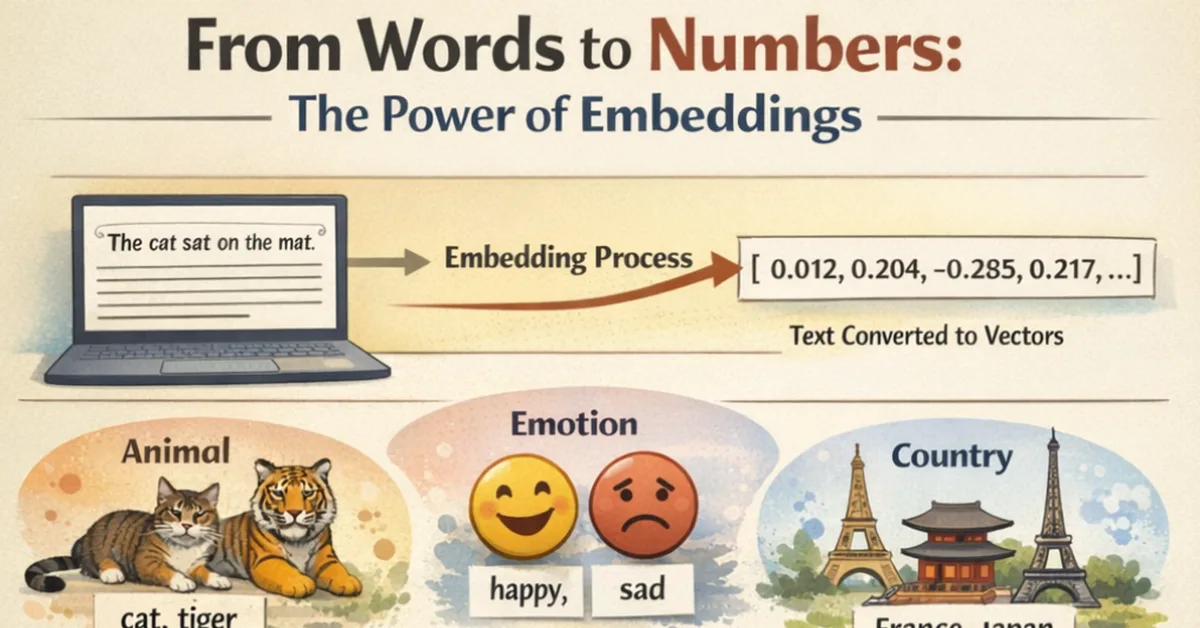

The article explores how large language models (LLMs) process language input, using the metaphor of a "dandle-board" to describe the mechanism. It begins by explaining how raw text is tokenized into smaller units, each assigned an integer ID from a Byte Pair Encoding (BPE) vocabulary, and then projected into an embedding space where it can be manipulated mathematically. The model then computes attention weights across multiple heads in parallel to capture contextual relationships between tokens, and finally projects the last hidden state back into vocabulary space to predict the next token. The article frames this whole journey, from human language to machine-readable form and back, as a series of dandling actions, highlighting the mathematical elegance beneath outputs that appear linguistically complex. It also touches on token economics and on how certain languages may face higher computational costs because of biases in training data.
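The pipeline summarized above can be sketched in a few lines of plain Python. This is a toy illustration and not the article's code. It makes several assumptions: the vocabulary, the `D_MODEL` width, and the function names are hypothetical; a whitespace split stands in for real BPE; the parameters are random, not trained; and a single attention head with no learned query/key/value projections replaces the multi-head attention the article describes.

```python
import math
import random

# Hypothetical toy vocabulary; a real BPE vocabulary is learned from data.
VOCAB = ["the", "cat", "sat", "on", "mat", "<unk>"]
TOKEN_TO_ID = {tok: i for i, tok in enumerate(VOCAB)}
D_MODEL = 4  # embedding width, chosen arbitrarily for this sketch

rng = random.Random(0)
# Random stand-ins for learned embedding parameters (one row per token).
EMBED = [[rng.uniform(-1, 1) for _ in range(D_MODEL)] for _ in VOCAB]


def tokenize(text):
    """Whitespace split standing in for BPE; returns one integer ID per piece."""
    unk = TOKEN_TO_ID["<unk>"]
    return [TOKEN_TO_ID.get(piece, unk) for piece in text.lower().split()]


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def causal_self_attention(vectors):
    """Single-head scaled dot-product attention with Q = K = V = the input."""
    scale = math.sqrt(len(vectors[0]))
    out = []
    for i, q in enumerate(vectors):
        visible = vectors[: i + 1]  # each token attends only to itself and earlier tokens
        weights = softmax([dot(q, k) / scale for k in visible])
        out.append(
            [sum(w * v[d] for w, v in zip(weights, visible)) for d in range(len(q))]
        )
    return out


def next_token_distribution(text):
    """Tokenize, embed, attend, then project the last hidden state onto the vocabulary."""
    ids = tokenize(text)
    hidden = causal_self_attention([EMBED[i] for i in ids])
    # Assumption: the embedding matrix is reused as the output projection (tied weights).
    logits = [dot(hidden[-1], row) for row in EMBED]
    return ids, softmax(logits)


ids, probs = next_token_distribution("The cat sat")
print(ids)  # integer token IDs
print({tok: round(p, 3) for tok, p in zip(VOCAB, probs)})
```

Because the parameters are random, the resulting probabilities carry no meaning; the sketch only shows the shape of the computation, from text to IDs to vectors and back to a distribution over the vocabulary.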

Read the full article at Towards AI - Medium
