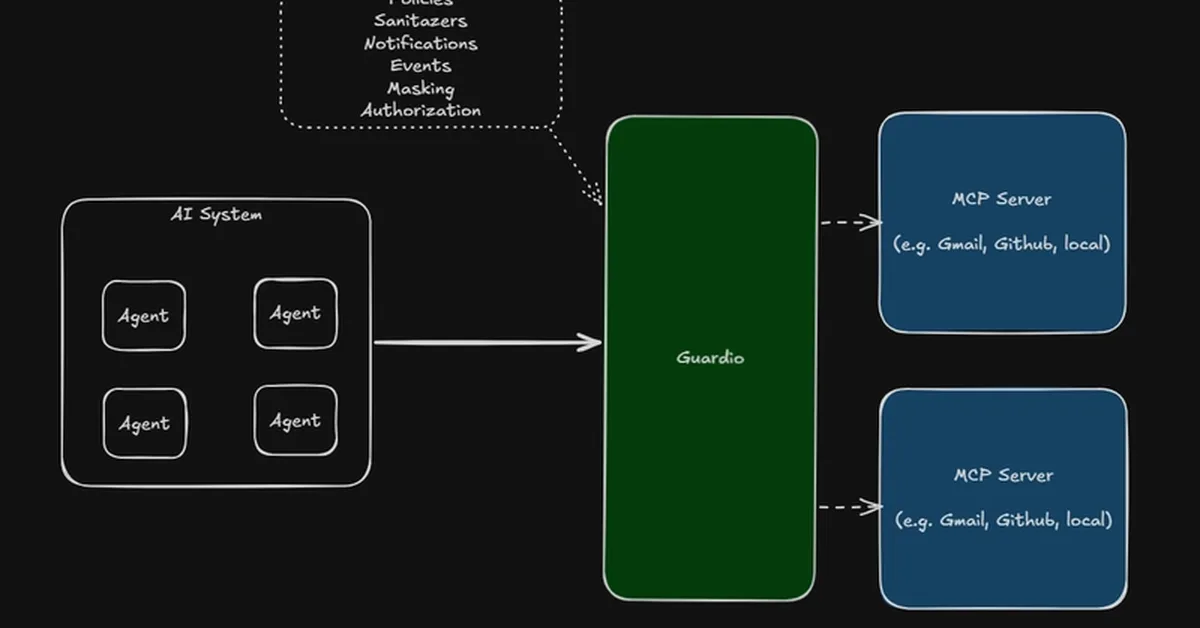

Guardio is a new policy enforcement tool designed to control what AI agents do: it intercepts each request an agent makes and evaluates it against predefined rules before allowing it to proceed. This addresses reliability problems in agentic AI, such as unintended destructive actions and missing audit trails. Content creators can keep their AI agents operating within specified boundaries without writing custom enforcement code for each project.
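To make the intercept-then-evaluate idea concrete, here is a minimal Python sketch of a policy gate. It is not Guardio's API: the names `ActionRequest`, `PolicyGate`, and the two example rules are hypothetical, chosen only to illustrate checking a request against rules, recording the decision, and blocking the action when a rule objects.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class ActionRequest:
    """A hypothetical description of what an agent wants to do."""
    agent: str
    tool: str
    args: dict


@dataclass
class Decision:
    allowed: bool
    reason: str


# A rule returns a denial reason, or None if it has no objection.
Rule = Callable[[ActionRequest], Optional[str]]


def deny_destructive_shell(req: ActionRequest) -> Optional[str]:
    # Illustrative rule: block an obviously destructive shell command.
    if req.tool == "shell" and "rm -rf" in req.args.get("command", ""):
        return "destructive shell command"
    return None


def deny_writes_outside_workspace(req: ActionRequest) -> Optional[str]:
    # Illustrative rule: only allow file writes under /workspace/.
    if req.tool == "write_file" and not req.args.get("path", "").startswith("/workspace/"):
        return "write outside /workspace/"
    return None


class PolicyGate:
    """Evaluates every request against the rules before it may run."""

    def __init__(self, rules):
        self.rules = list(rules)
        self.audit_log = []  # (request, decision) pairs, in order

    def evaluate(self, req: ActionRequest) -> Decision:
        decision = Decision(True, "no rule objected")
        for rule in self.rules:
            reason = rule(req)
            if reason is not None:
                decision = Decision(False, reason)
                break  # first objecting rule wins
        self.audit_log.append((req, decision))
        return decision

    def execute(self, req: ActionRequest, handler):
        # The handler (the real action) runs only if the policy allows it.
        decision = self.evaluate(req)
        if not decision.allowed:
            raise PermissionError(decision.reason)
        return handler(req)
```

In this sketch the agent never calls a tool directly; it hands an `ActionRequest` to `PolicyGate.execute`, which either runs the handler or raises `PermissionError`. Allowed and denied requests both land in `audit_log`, which is one simple way to get the audit trail mentioned above.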

Read the full article at DEV Community
