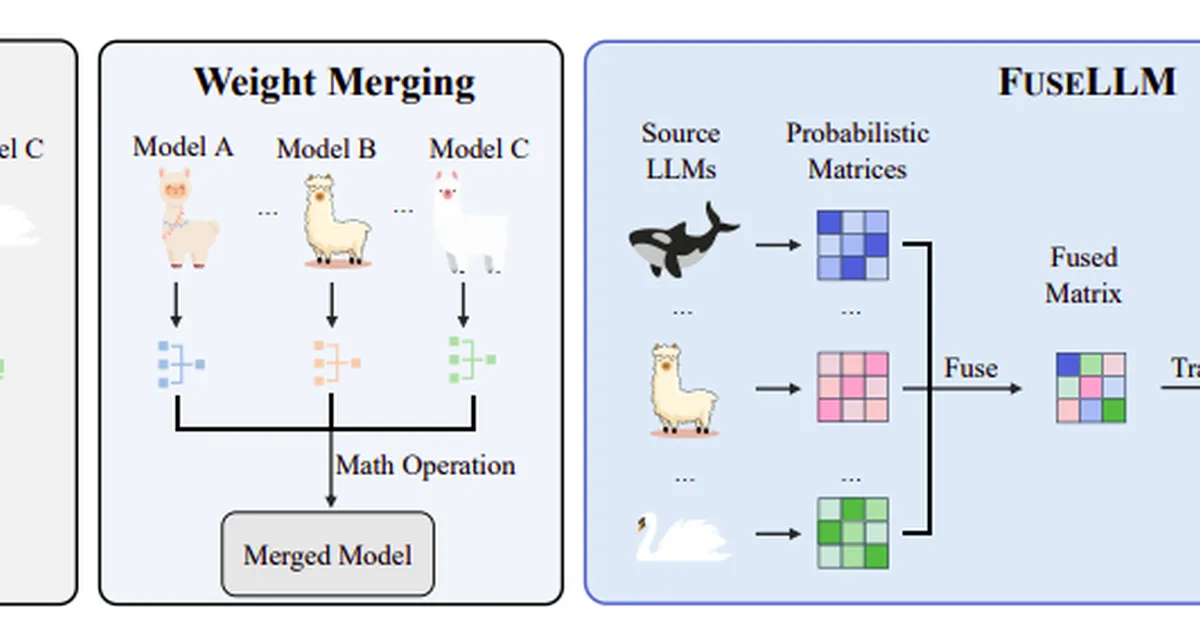

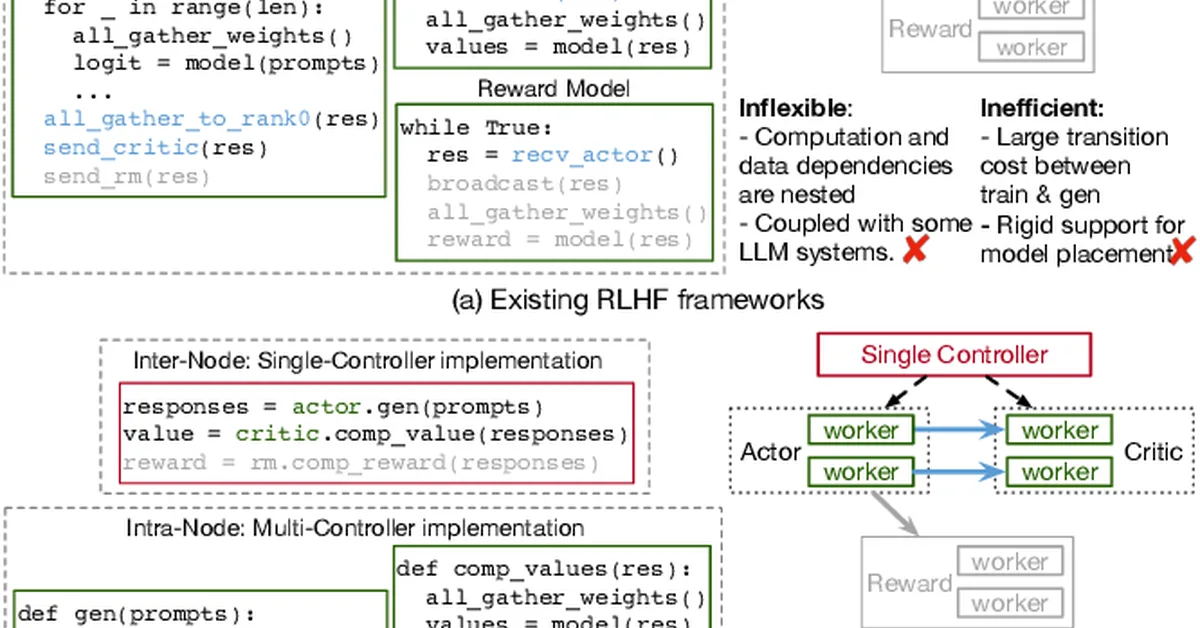

Researchers introduced GraftLLM, a method that stores capabilities from a source model in a compact SkillPack format so they can be grafted onto a target model. It addresses challenges in knowledge distillation and continual learning for large language models. According to the authors, the approach improves efficiency and adaptability by reducing parameter conflicts and supporting forget-free learning, which makes it a scalable option for model fusion and integration.

Read the full article at arXiv cs.CL (NLP)
