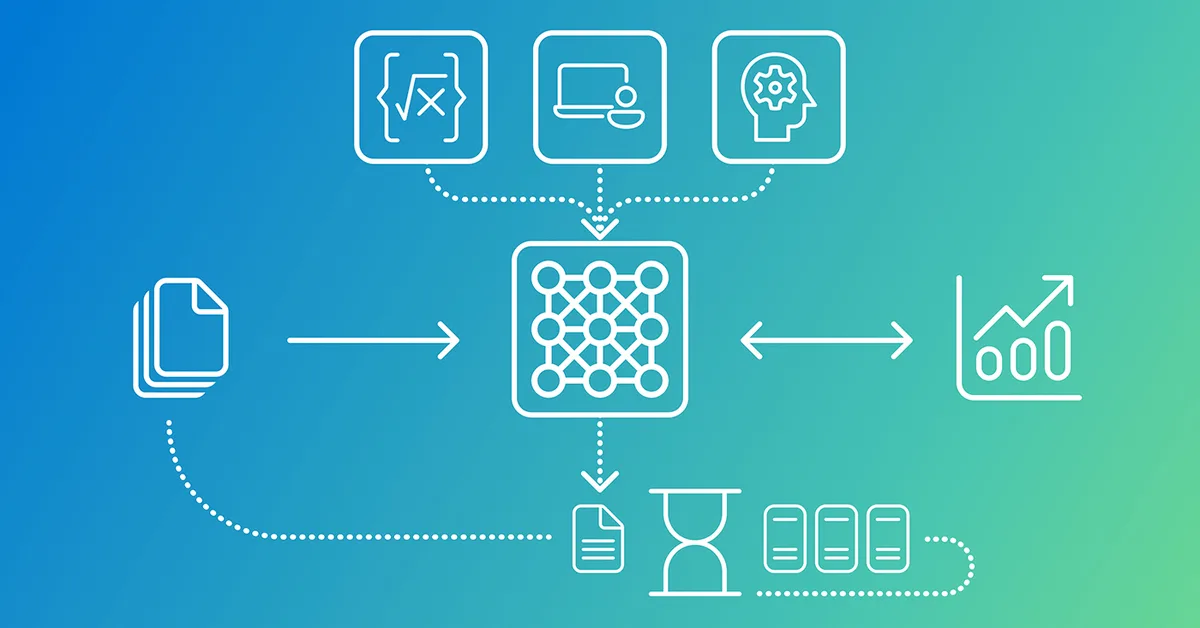

The article describes a statistical approach to evaluating Large Language Model (LLM) prompts in software development workflows. Rather than judging a prompt from a handful of anecdotal runs, it tests candidate prompts against ground-truth data over many trials. The author's phases include building a foundation of known-good test data, finding and refining prompt templates through experimentation, and running statistical trials to confirm that a prompt performs reliably. The article also shows how individual factors, such as the model, its hyperparameters, and the input and output values, can be varied one at a time while the others are held constant, so that each factor's effect on prompt performance can be measured. It argues that adopting these statistical techniques is what lets teams trust that LLM-generated code consistently meets their quality standards across different scenarios.
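The trial loop described above can be sketched roughly as follows. This is a minimal illustration, not the article's own code: `fake_llm` is a hypothetical stand-in for a real model call (its accuracy is simulated and simply drops as temperature rises), and the tiny arithmetic ground-truth set, the `Config` fields, and the helper names are all assumptions made for the example. Each configuration is run repeatedly against known-good cases, the pass rate is reported with a 95% Wilson score interval, and only one field (here `temperature`) changes while the template and model stay fixed.

```python
import math
import random
from dataclasses import dataclass, replace

# Hypothetical ground-truth cases: (question, known-good answer).
GROUND_TRUTH = [("2 + 2", "4"), ("3 * 5", "15"), ("10 - 7", "3"), ("9 / 3", "3")]
ANSWERS = dict(GROUND_TRUTH)


@dataclass(frozen=True)
class Config:
    template: str        # prompt template with a {question} placeholder
    model: str           # model name (unused by the simulated model below)
    temperature: float   # sampling temperature


def fake_llm(prompt: str, config: Config, rng: random.Random) -> str:
    """Hypothetical stand-in for a real LLM call (simulated, not a real API).

    Returns the correct answer with a probability that falls as temperature
    rises; it draws exactly one random number per call.
    """
    question = next(q for q in ANSWERS if q in prompt)
    p_correct = 0.95 - 0.3 * config.temperature
    return ANSWERS[question] if rng.random() < p_correct else "wrong"


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial pass rate (z=1.96 gives ~95%)."""
    if trials == 0:
        return (0.0, 1.0)
    p = successes / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return (centre - half, centre + half)


def run_trials(config, call_model, cases, n_trials, seed=0):
    """Run every ground-truth case n_trials times; return (successes, total)."""
    rng = random.Random(seed)
    successes = total = 0
    for _ in range(n_trials):
        for question, expected in cases:
            answer = call_model(config.template.format(question=question), config, rng)
            if answer.strip() == expected:
                successes += 1
            total += 1
    return successes, total


def compare(base: Config, field: str, values, n_trials: int = 50):
    """Vary one Config field, hold the rest constant, and collect statistics."""
    results = {}
    for value in values:
        cfg = replace(base, **{field: value})
        s, t = run_trials(cfg, fake_llm, GROUND_TRUTH, n_trials)
        results[value] = (s / t, wilson_interval(s, t))
    return results


if __name__ == "__main__":
    base = Config("Answer with only the number. Q: {question}", "model-a", 0.0)
    for temp, (rate, (lo, hi)) in compare(base, "temperature", [0.0, 0.5, 1.0]).items():
        print(f"temperature={temp}: pass rate {rate:.2f} (95% CI {lo:.2f}-{hi:.2f})")
```

Reusing the same seed gives every configuration the same sequence of random draws, so differences in pass rate reflect the changed factor rather than sampling luck. With a real model, the interval width shows whether two configurations actually differ or simply need more trials.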

Read the full article at DEV Community
