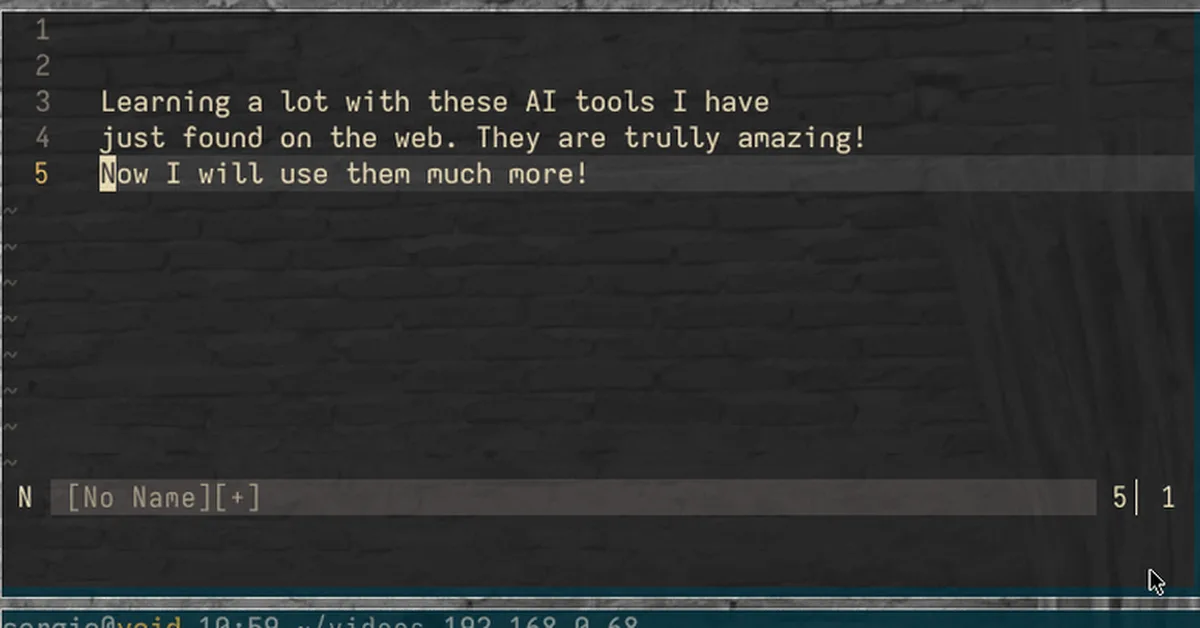

The article demonstrates that persistent memory scaffolding significantly changes a Large Language Model's (LLM's) outputs and reasoning, even when the prompt and the model are identical. The scaffolding injects accumulated context that shapes the architectural density of responses, the design philosophy they follow, and the practical experience they reflect, offering substantial productivity benefits such as reduced ramp-up time and more efficient solutions. Content creators should consider drawing on accumulated contextual memory to make AI-generated content more specific and more in-depth.
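The summary does not say how the memory is stored or injected. The sketch below assumes memory is a JSON file of plain-text notes that gets prepended to each prompt. The file name and the helpers `load_memory`, `save_note`, and `build_prompt` are hypothetical and exist only for illustration. The code shows how an unchanged task prompt becomes a different model input once context has accumulated.

```python
import json
from pathlib import Path


def load_memory(path: Path) -> list[str]:
    """Return stored notes, or an empty list if no memory file exists yet."""
    if not path.exists():
        return []
    return json.loads(path.read_text(encoding="utf-8"))


def save_note(path: Path, note: str) -> None:
    """Append one note to the (hypothetical) persistent memory file."""
    notes = load_memory(path)
    notes.append(note)
    path.write_text(json.dumps(notes, indent=2), encoding="utf-8")


def build_prompt(notes: list[str], user_prompt: str) -> str:
    """Prepend accumulated notes as context; the task text itself is unchanged."""
    if not notes:
        return user_prompt
    context = "\n".join(f"- {n}" for n in notes)
    return f"Context from previous sessions:\n{context}\n\nTask:\n{user_prompt}"


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as tmp:
        mem = Path(tmp) / "memory.json"  # hypothetical memory location
        prompt = "Design a rate limiter for our API."
        # No memory yet: the model would receive the bare prompt.
        print(build_prompt(load_memory(mem), prompt))
        # Hypothetical note accumulated from an earlier session.
        save_note(mem, "Services are written in Go and sit behind a reverse proxy.")
        # Same prompt, but the model input now carries prior context.
        print(build_prompt(load_memory(mem), prompt))
```

Both the model and the task text stay the same across the two calls. Only the injected context differs, and that is the variable the article's comparison isolates.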

Read the full article at DEV Community
