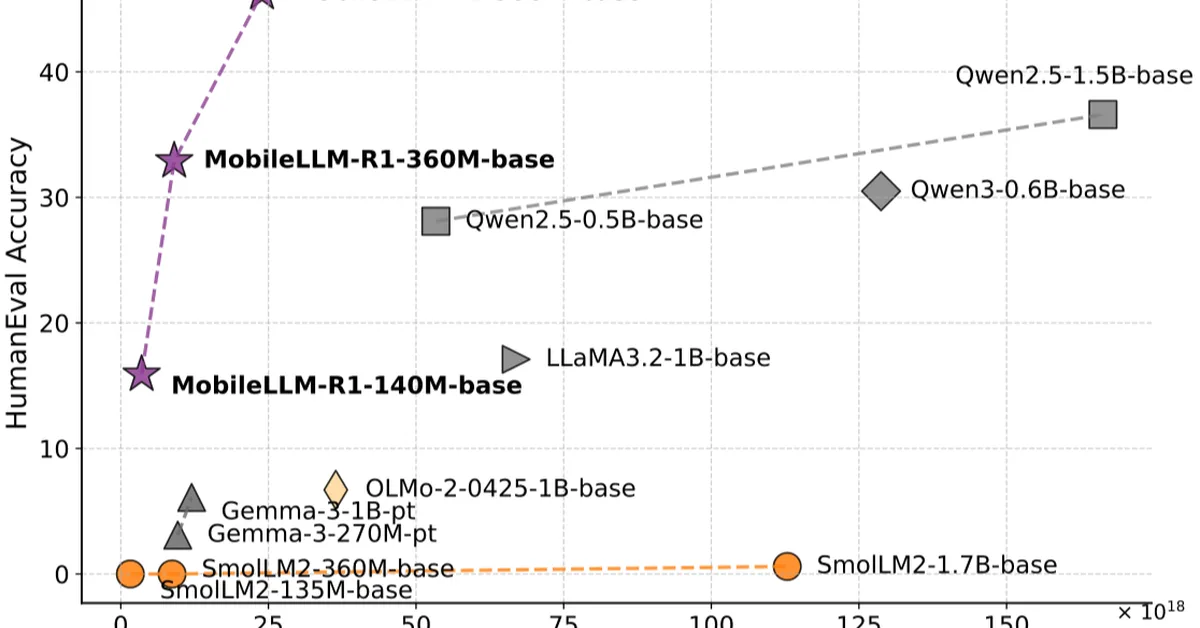

Researchers have developed MobileLLM-R1, a series of sub-billion-parameter reasoning models that perform strongly after training on only 2T tokens of high-quality data, challenging the assumption that effective language model training requires massive datasets. For content creators, the result suggests that advanced AI capabilities may become more efficient and accessible, without depending on vast proprietary datasets.

Read the full article at arXiv cs.CL (NLP)
