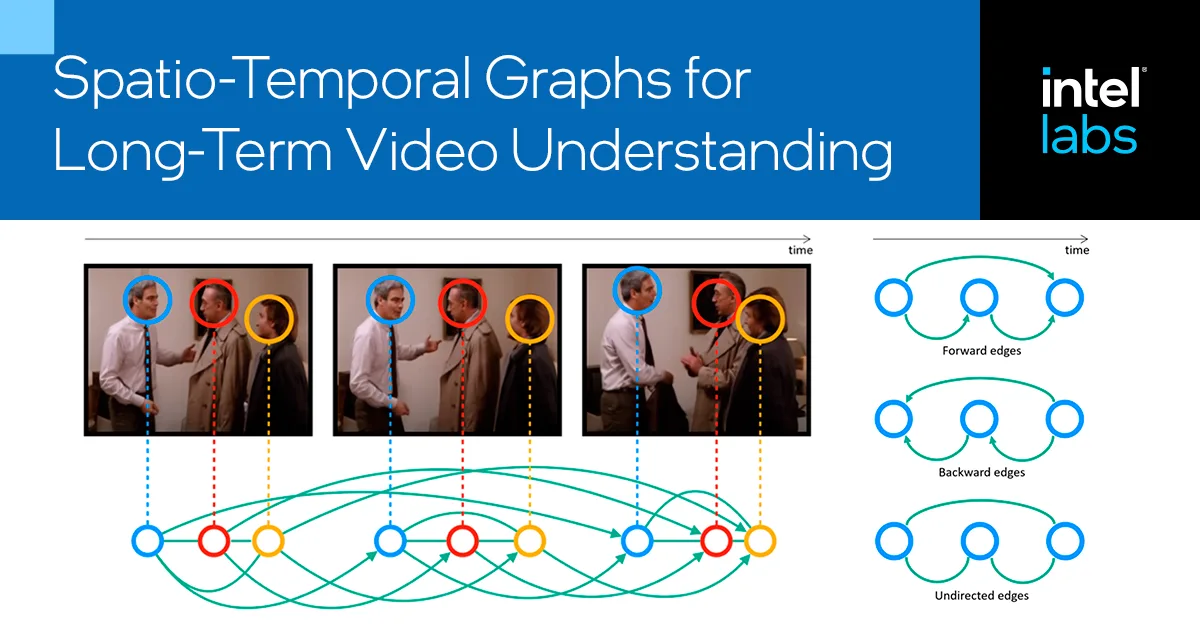

Molmo2 introduces open-source vision-language models with state-of-the-art performance in video understanding and grounding, built on novel datasets and training methods that do not rely on proprietary models. For content creators, this means advanced tools for video analysis and interaction without depending on closed systems.

Read the full article at arXiv cs.AI (Artificial Intelligence)
