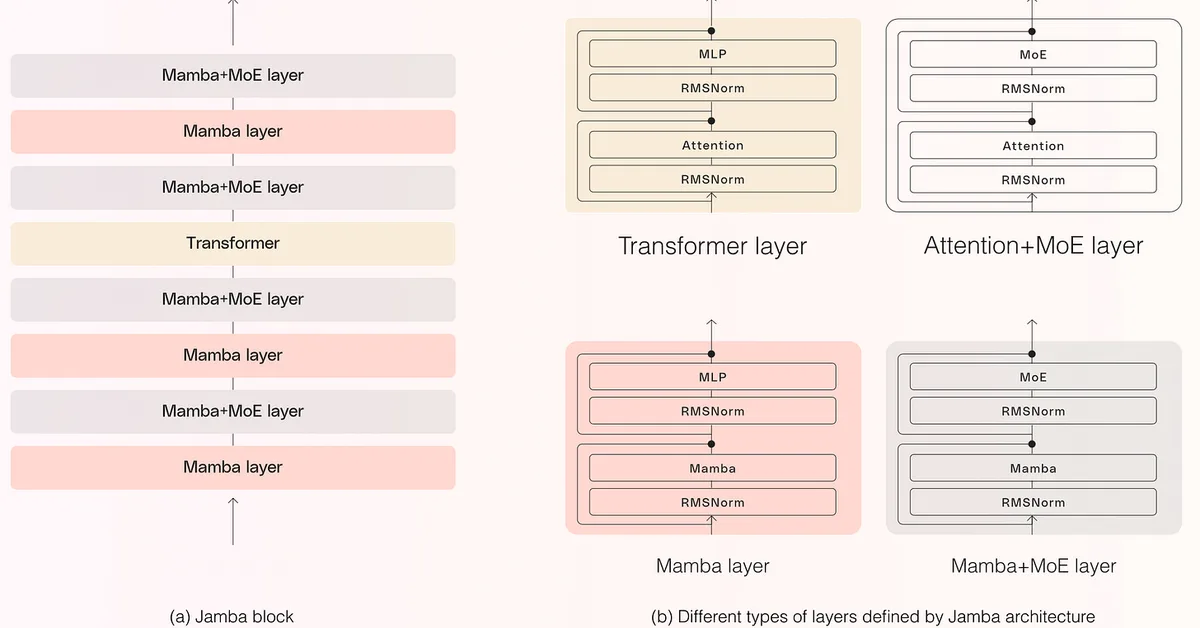

Researchers have identified secondary attention sinks in neural network models. Unlike primary sinks, these appear in middle layers, vary in how long they persist, and draw less attention, though still a significant amount. The finding matters because it reveals previously undescribed dynamics in how attention mechanisms behave within model architectures. For content creators, these insights could eventually lead to more efficient and nuanced AI tools for text generation and analysis.

Read the full article at arXiv cs.CL (NLP)
