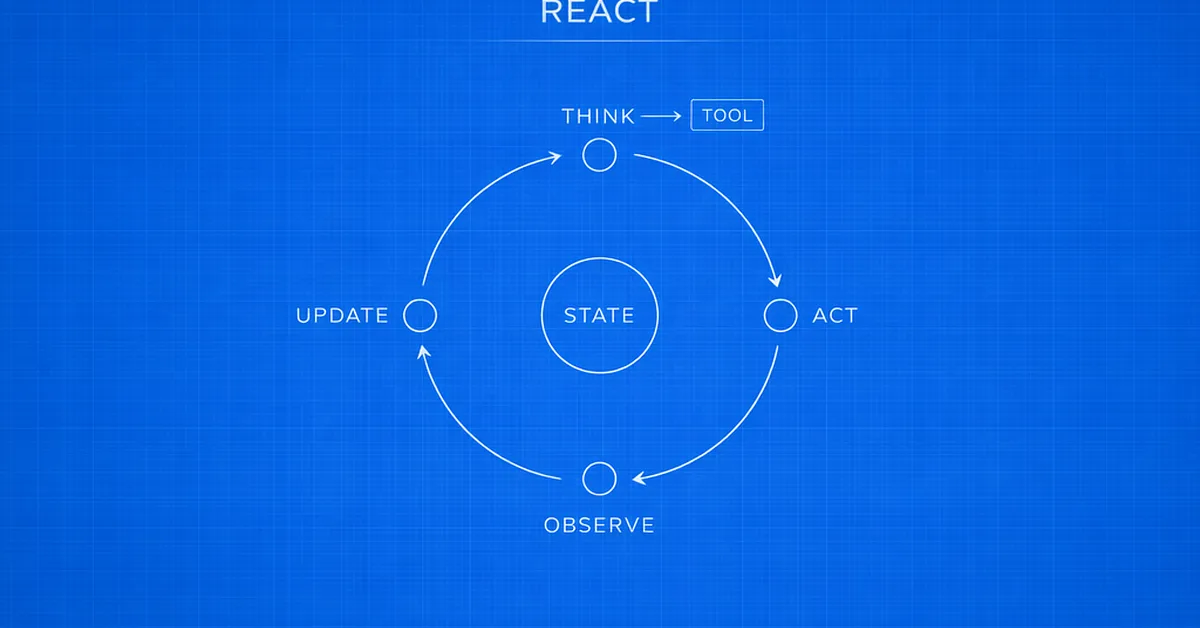

The article discusses six avenues for advancing large language model (LLM) research beyond scaling up existing models: improving performance on out-of-distribution data and reducing hallucinations through techniques such as in-context learning; enhancing factual accuracy by incorporating external knowledge sources; mitigating harmful outputs with better alignment methods or content filters; making LLMs run more efficiently, for example through quantization or low-rank factorization; designing new model architectures that surpass the capabilities of Transformers; and developing alternative AI hardware beyond GPUs. Together, these directions target current limitations in LLM reliability, safety, and cost rather than relying on scale alone.

Read the full article at Chip Huyen
