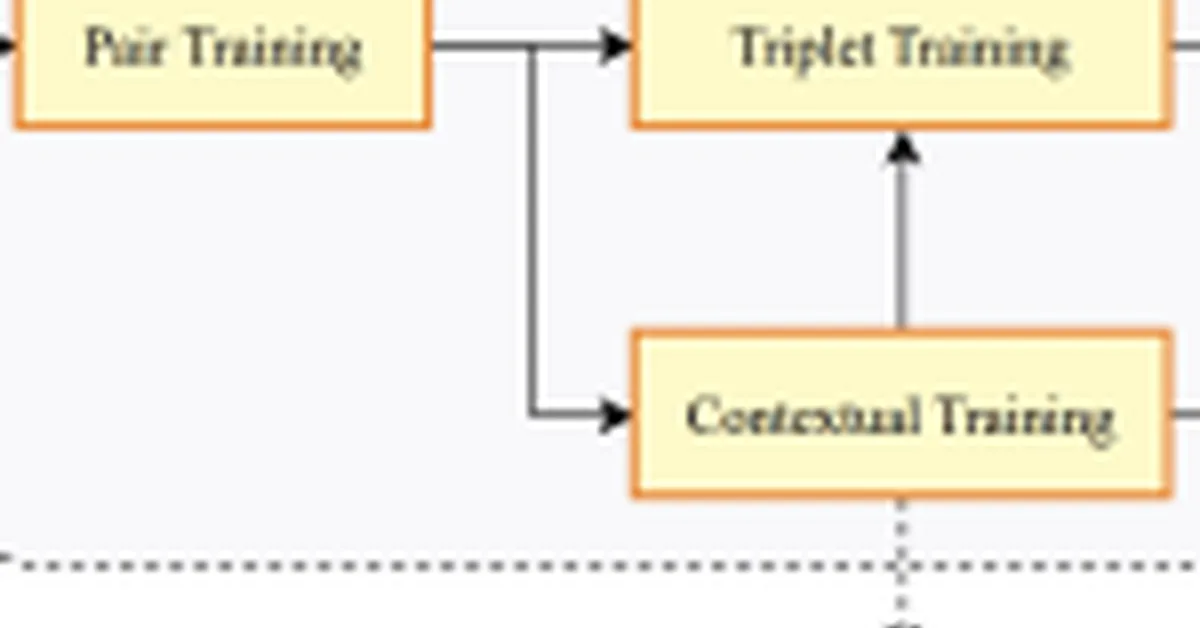

Perplexity has released pplx-embed, a family of multilingual embedding models built for large-scale retrieval. The models combine bidirectional attention with diffusion-based pretraining to cope with noisy web data. Separate variants target queries and document contexts in Retrieval-Augmented Generation (RAG) systems, and native INT8 quantization keeps deployment efficient, positioning the models as production-ready alternatives to proprietary embedding APIs.
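
To give a rough sense of why INT8 embeddings are attractive for retrieval, here is a minimal sketch in plain Python. It is not Perplexity's quantization scheme, which the summary does not describe. It assumes simple symmetric per-vector quantization, and the function names (`quantize_int8`, `cosine`, `rank_documents`) and toy vectors are hypothetical, chosen only for illustration. Because cosine similarity ignores vector scale, the integer codes can be compared against a float query directly.

```python
import math


def quantize_int8(vec):
    """Symmetric per-vector quantization (an assumed scheme, not pplx-embed's).

    Maps each float to an integer in [-127, 127] and returns (codes, scale),
    where code * scale approximates the original value.
    """
    max_abs = max(abs(x) for x in vec)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(x / scale))) for x in vec]
    return codes, scale


def cosine(a, b):
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_documents(query_vec, doc_codes):
    """Return document indices sorted by descending cosine similarity.

    The per-vector scale is not needed here because cosine similarity
    is unchanged when a vector is multiplied by a positive constant.
    """
    scores = [cosine(query_vec, codes) for codes in doc_codes]
    return sorted(range(len(doc_codes)), key=lambda i: scores[i], reverse=True)


# Toy 3-dimensional "embeddings" (made-up values, for illustration only).
docs = [
    [0.9, 0.1, 0.0],
    [0.0, 0.2, 0.95],
    [0.5, 0.5, 0.5],
]
query = [1.0, 0.0, 0.1]

doc_codes = [quantize_int8(d)[0] for d in docs]
print(rank_documents(query, doc_codes))
```

Each stored value takes one byte instead of four for float32, so an index of the same size holds roughly four times as many vectors, at the cost of a small rounding error per dimension.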

Read the full article at MarkTechPost
