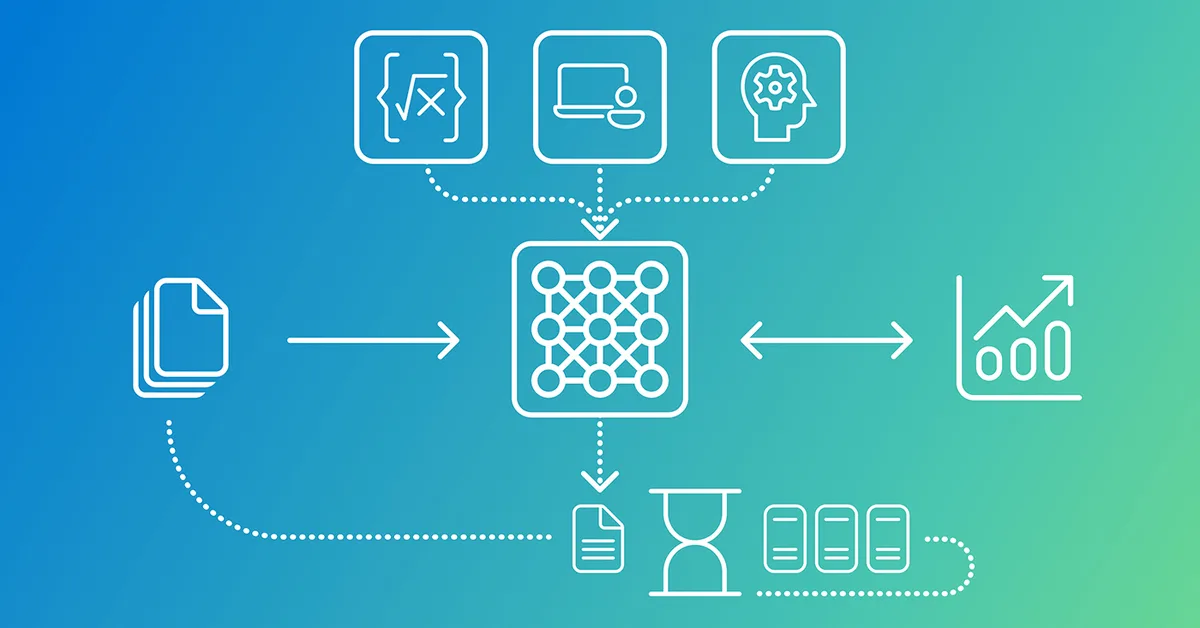

Phi-4-reasoning-vision-15B is a multimodal model designed to balance reasoning capability, inference efficiency, and data requirements by training on a mixed dataset of non-reasoning and reasoning tasks. Key aspects include:

- Multimodal Mathematics and Science Performance: Increasing mathematics data while keeping computer-use data constant improves performance across math, science, and computer-use benchmarks.

- Synthetic Data for Text-Rich Visual Reasoning: Programmatically generated synthetic data enhances multimodal reasoning by expanding coverage of underrepresented visual formats.

- Training Approaches: Phi-4-reasoning-vision-15B starts with a reasoning-capable base (Reasoning LLM) and trains on a mixed dataset, learning when to reason and when to respond directly. This approach avoids the need for extensive multimodal reasoning data and mitigates risks of catastrophic forgetting or weaker reasoning capabilities.
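As a rough illustration of the mixed-dataset idea above, the sketch below assembles a training mixture in which some targets carry a reasoning trace and others answer directly. It is not the article's actual pipeline: the `Example` class, the `build_mixture` helper, the `reasoning_fraction` parameter, and the `<think>` / `<nothink>` markers are all hypothetical names chosen for this example.

```python
import random
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Example:
    prompt: str
    answer: str
    trace: Optional[str] = None  # reasoning trace; None for direct-answer tasks


def format_target(ex: Example) -> str:
    """Render the training target. The marker strings are hypothetical."""
    if ex.trace is not None:
        return f"<think>{ex.trace}</think>{ex.answer}"
    return f"<nothink>{ex.answer}"


def build_mixture(
    reasoning: List[Example],
    direct: List[Example],
    reasoning_fraction: float,
    size: int,
    seed: int = 0,
) -> List[Tuple[str, str]]:
    """Sample `size` (prompt, target) pairs with a fixed share of reasoning examples."""
    rng = random.Random(seed)
    n_reasoning = round(size * reasoning_fraction)
    # Sample with replacement so small pools can still fill the requested size.
    picked = rng.choices(reasoning, k=n_reasoning)
    picked += rng.choices(direct, k=size - n_reasoning)
    rng.shuffle(picked)
    return [(ex.prompt, format_target(ex)) for ex in picked]


reasoning_pool = [Example("Solve 2x + 3 = 11.", "x = 4", "2x = 8, so x = 4.")]
direct_pool = [Example("What color is the button in the screenshot?", "Blue")]
mixture = build_mixture(reasoning_pool, direct_pool, reasoning_fraction=0.25, size=8)
```

Because both kinds of target appear in the same mixture, a model trained on it sees examples of when a trace is expected and when a direct answer suffices; the ratio itself is a tunable assumption here, not a figure from the article.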

This design allows Phi-4-reasoning-vision-15B to efficiently handle

Read the full article at Microsoft Research
