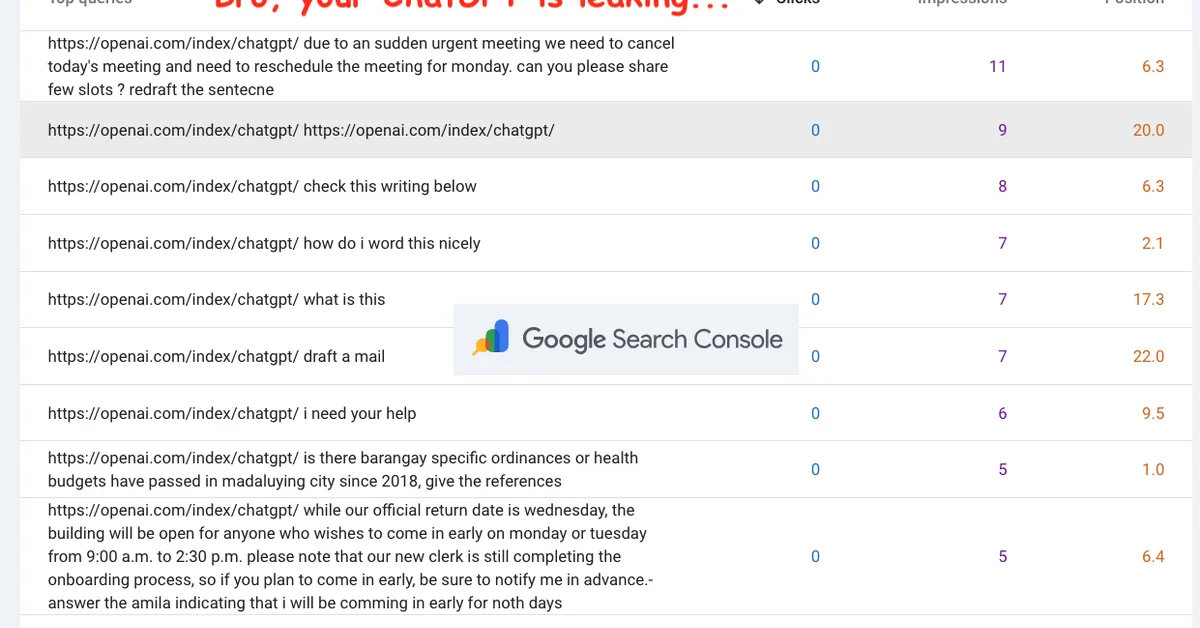

A researcher demonstrated how easily AI training data can be poisoned by creating a fake website about non-existent tech journalists and their hot-dog-eating skills. Within hours, major chatbots, including Google's and ChatGPT, were repeating the false information verbatim. The experiment highlights how vulnerable AI systems are to misinformation and underscores the need for content creators to critically evaluate sources when using AI tools.

Read the full article at Security Boulevard
