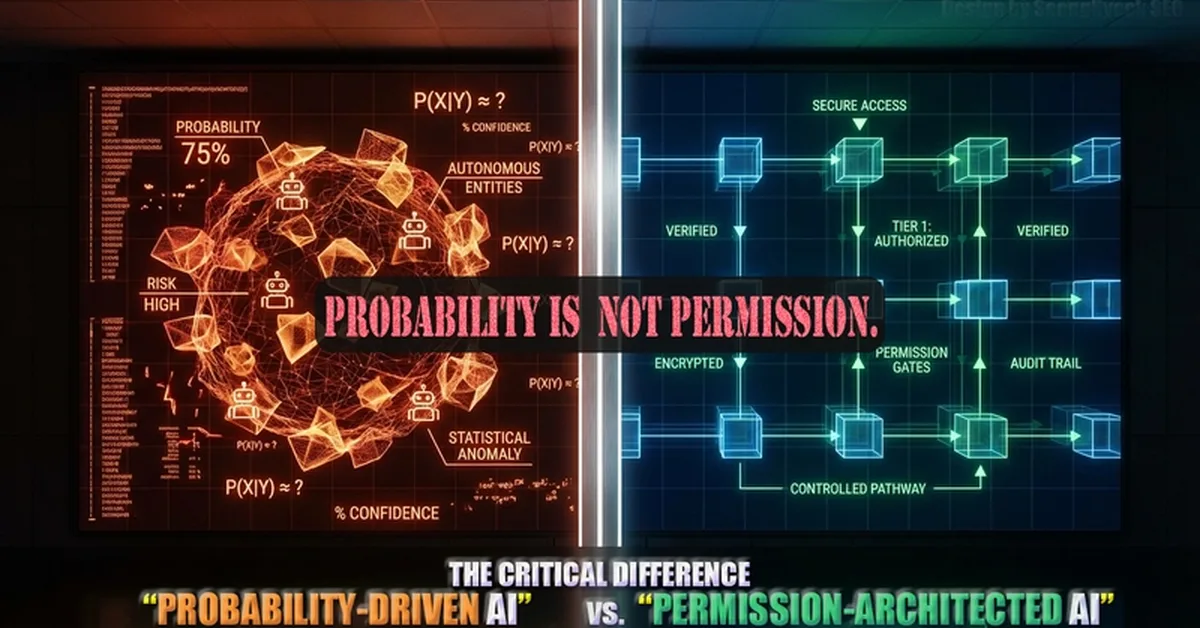

The article discusses the risks of open agent AI systems that lack a governance layer separating probabilistic model outputs from executable commands. Without that separation, a model's output can be executed directly, which can lead to operational errors and financial losses. The key takeaway for content creators is the need for structural safeguards, such as an approval layer, so that autonomous AI is used responsibly in high-risk environments.
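
To make the idea of an approval layer concrete, here is a minimal sketch, assuming the model emits a structured proposal rather than a command that runs immediately. A deterministic policy check sits between that proposal and any side effect: unknown tools are rejected, large amounts are escalated to a human, and everything else is auto-approved. All names here (`ProposedAction`, `review`, `execute`, `ALLOWED_TOOLS`, `AUTO_APPROVE_LIMIT`) are hypothetical illustrations, not taken from the article or from any particular agent framework.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Decision(Enum):
    APPROVED = "approved"
    NEEDS_HUMAN = "needs_human"
    REJECTED = "rejected"


@dataclass(frozen=True)
class ProposedAction:
    """A model-proposed action. It is plain data and is never executed directly."""
    tool: str
    amount: float = 0.0  # hypothetical field: money the action would move


# Hypothetical policy: tools the agent may call, and the amount above which
# a human must sign off before anything runs.
ALLOWED_TOOLS = {"read_balance", "send_payment"}
AUTO_APPROVE_LIMIT = 100.0


def review(action: ProposedAction) -> Decision:
    """Deterministic governance check between model output and execution."""
    if action.tool not in ALLOWED_TOOLS:
        return Decision.REJECTED
    if action.amount < 0:
        return Decision.REJECTED
    if action.amount > AUTO_APPROVE_LIMIT:
        return Decision.NEEDS_HUMAN
    return Decision.APPROVED


def execute(action: ProposedAction,
            human_approves: Callable[[ProposedAction], bool]) -> str:
    """Run an action only if the policy (and, when required, a human) approves it."""
    decision = review(action)
    if decision is Decision.NEEDS_HUMAN:
        decision = Decision.APPROVED if human_approves(action) else Decision.REJECTED
    if decision is not Decision.APPROVED:
        return f"blocked: {action.tool}"
    # A real system would perform the side effect here; this sketch only reports it.
    return f"executed: {action.tool}"


if __name__ == "__main__":
    deny = lambda action: False  # stand-in for a human reviewer who declines
    print(execute(ProposedAction("read_balance"), deny))           # executed: read_balance
    print(execute(ProposedAction("send_payment", 5000.0), deny))   # blocked: send_payment
    print(execute(ProposedAction("delete_database"), deny))        # blocked: delete_database
```

The design choice worth noting is that the model never holds execution rights: its output is treated as an untrusted request, and only the deterministic `review` step plus the human callback can turn it into an action.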

Read the full article at DEV Community
