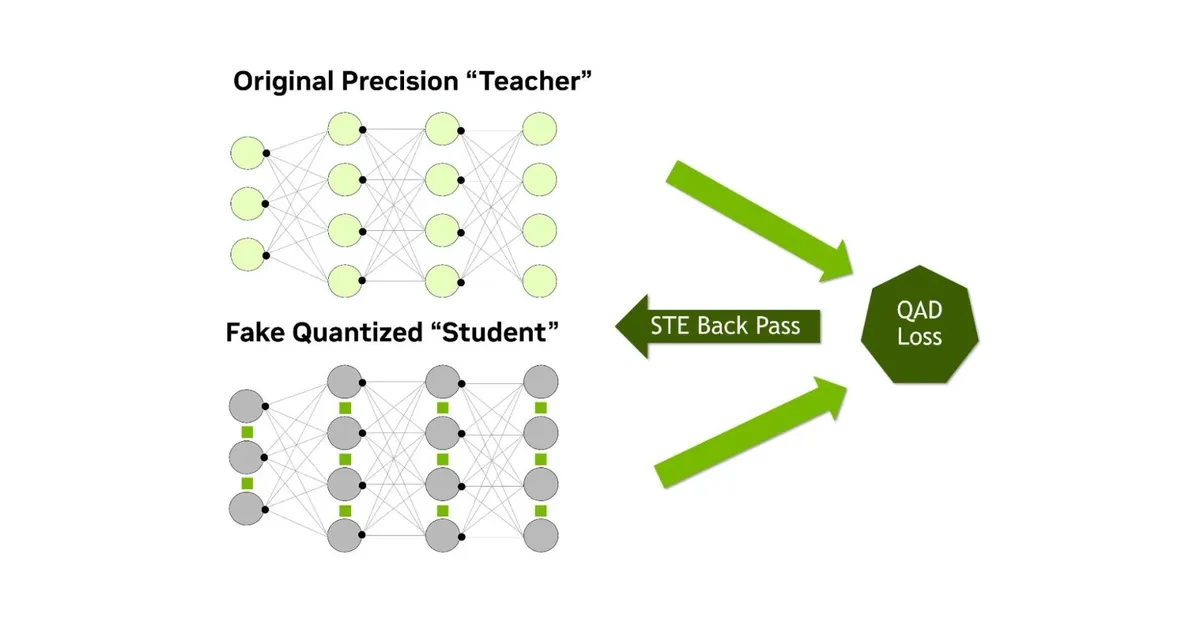

Researchers introduced Q², a framework that addresses the performance degradation caused by low-bit quantization in complex visual tasks such as object detection and image segmentation. It combines gradient balancing fusion and attention alignment, techniques that stabilize training and accelerate convergence without adding inference-time overhead, and it is reported to improve model accuracy significantly.

Read the full article at arXiv cs.CV (Vision)
