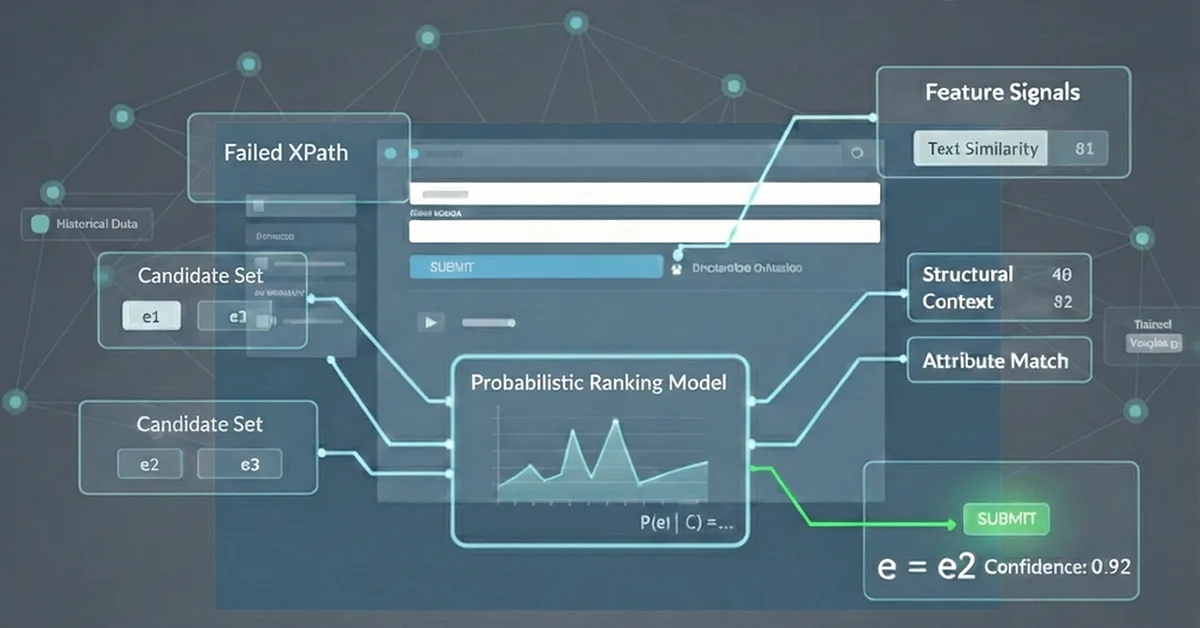

Researchers have developed a real-time system that uses deep learning to translate sign language gestures into spoken language, with the aim of easing communication for people with hearing and speech impairments. The system uses convolutional neural networks trained on the Sign Language MNIST dataset. The authors report high accuracy and practical usability in everyday settings, and present the system as a way to improve accessibility and social integration for sign language users.
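To make the step from classifier output to text more concrete, here is a minimal sketch of how per-frame predictions might be turned into letters before being passed to a speech engine. It is not the authors' method: the summary does not describe their decoding step, so the helper `decode_frames`, the `min_run` threshold, and the idea of collapsing repeated frames are all assumptions made for illustration. The one grounded detail is the label layout of Sign Language MNIST, where classes 0-25 map to A-Z and J (9) and Z (25) have no samples because those letters involve motion.

```python
import string
from itertools import groupby

# Sign Language MNIST labels run 0-25 and map one-to-one onto A-Z.
# J (9) and Z (25) never occur in the data because they require motion.
LETTERS = string.ascii_uppercase


def argmax(scores):
    """Return the index of the largest score in a list."""
    return max(range(len(scores)), key=scores.__getitem__)


def decode_frames(frame_scores, min_run=3):
    """Hypothetical helper (an assumption, not from the paper).

    Takes one list of 26 class scores per video frame, picks the top
    class for each frame, and keeps a letter only when the same class
    wins at least `min_run` consecutive frames. Shorter runs are
    treated as noise and dropped.
    """
    labels = [argmax(scores) for scores in frame_scores]
    letters = []
    for label, run in groupby(labels):
        if len(list(run)) >= min_run:
            letters.append(LETTERS[label])
    return "".join(letters)
```

Given three frames classified as H, one stray frame classified as A, and four frames classified as I, `decode_frames` returns `"HI"`. One limitation of this simple scheme is that a doubled letter held without a pause collapses into a single letter, so a real system would need an explicit separator between signs.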

Read the full article at arXiv cs.CV (Vision)
