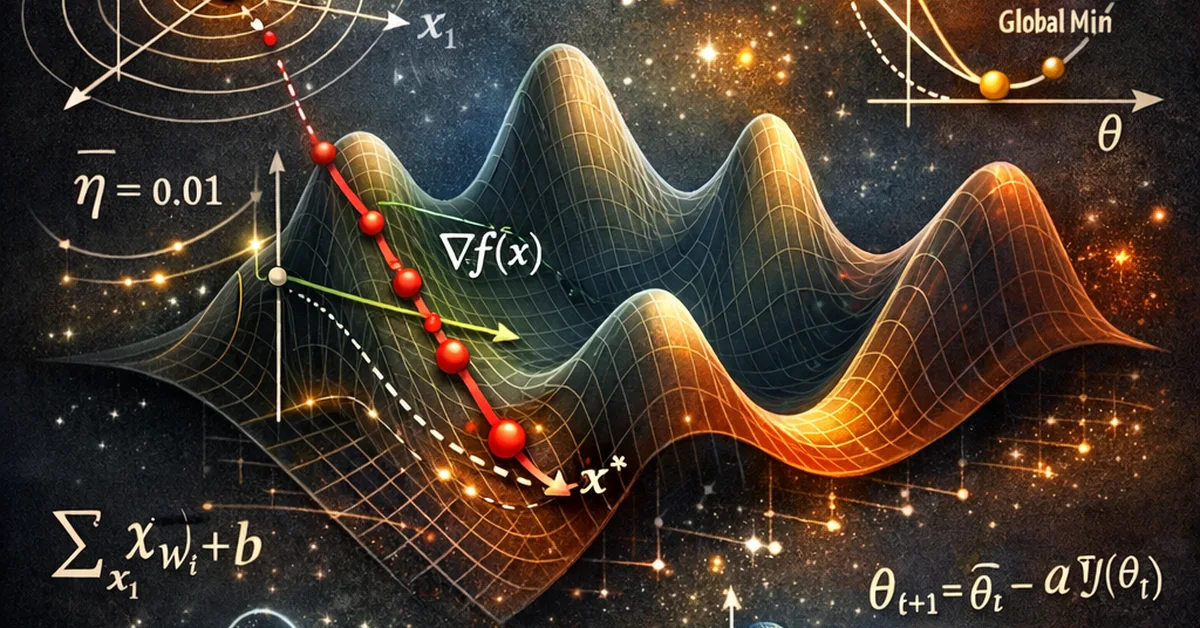

Researchers propose Successive Sub-value Q-learning (S2Q), a method for cooperative multi-agent reinforcement learning (MARL) that retains multiple suboptimal actions alongside the current best one. Keeping these alternatives lets agents adapt when value functions shift during training. The approach encourages continued exploration, speeds adjustment to changing conditions, and improves adaptability and performance in cooperative MARL settings.

Read the full article on arXiv (cs.AI, Artificial Intelligence).
