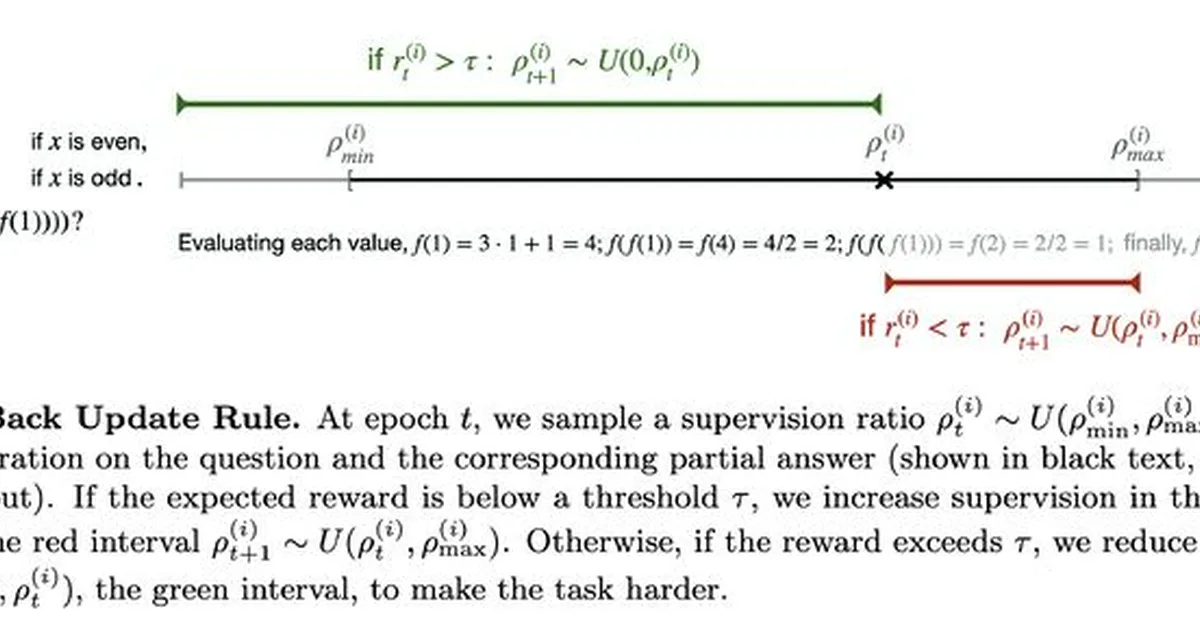

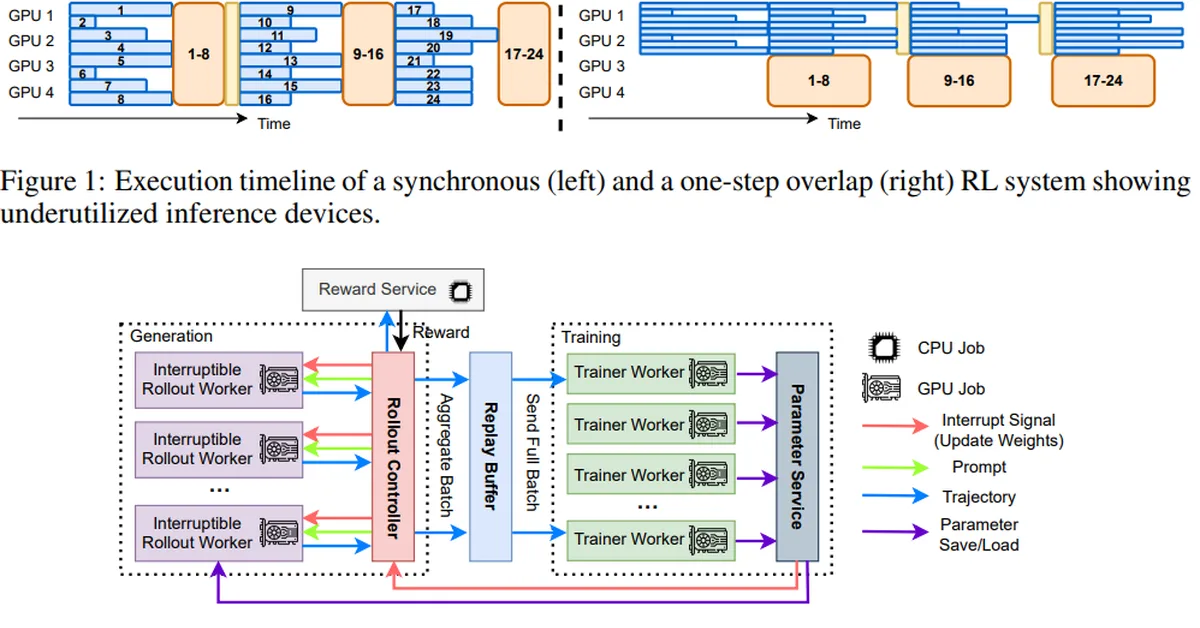

Researchers have introduced adaptive backtracking (AdaBack), a curriculum learning algorithm for sequence generation tasks. During training, AdaBack reveals part of the target output to the model and adjusts how much it reveals according to the model's performance. This enables efficient learning on problems where both supervised fine-tuning and reinforcement learning fail. With this approach, models can solve complex reasoning tasks involving long sequences of latent dependencies that other methods cannot handle, which makes it a promising way to train AI models on intricate problem-solving tasks.
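To make the idea of "revealing part of the target based on performance" more concrete, here is a minimal sketch under stated assumptions. It is not the authors' implementation. The class name `AdaptiveReveal`, the per-sample reveal ratio, the fixed `step` size, and the success `threshold` are all hypothetical choices made for illustration. The target is shown as a given prefix, and the model must generate the remainder. Success means less is revealed next time, and failure means more is revealed.

```python
from dataclasses import dataclass, field


@dataclass
class AdaptiveReveal:
    """Hypothetical per-sample curriculum (illustrative, not AdaBack's code).

    Tracks, for each training sample, what fraction of the target sequence
    is revealed to the model as a given prefix.
    """
    step: float = 0.1      # assumed size of each ratio adjustment
    initial: float = 1.0   # assumed starting point: reveal the whole target
    ratios: dict = field(default_factory=dict)

    def ratio(self, sample_id):
        # Samples never seen before start at the initial reveal ratio.
        return self.ratios.get(sample_id, self.initial)

    def make_prompt(self, sample_id, prompt, target):
        # Reveal the first k target tokens; the model must generate the rest.
        k = int(round(self.ratio(sample_id) * len(target)))
        return prompt + target[:k], target[k:]

    def update(self, sample_id, reward, threshold=0.5):
        # Assumed rule: success -> reveal less (harder next time),
        # failure -> reveal more (easier next time), clamped to [0, 1].
        r = self.ratio(sample_id)
        r = r - self.step if reward >= threshold else r + self.step
        self.ratios[sample_id] = min(1.0, max(0.0, r))
```

As a usage example, imagine a toy "model" that can correctly complete at most three missing tokens. Starting from a fully revealed target, the curriculum shrinks the revealed prefix until the model starts failing. It then settles around the boundary of what the model can currently solve, which is the behavior an adaptive curriculum of this kind aims for.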

Read the full article at arXiv cs.AI (Artificial Intelligence)
