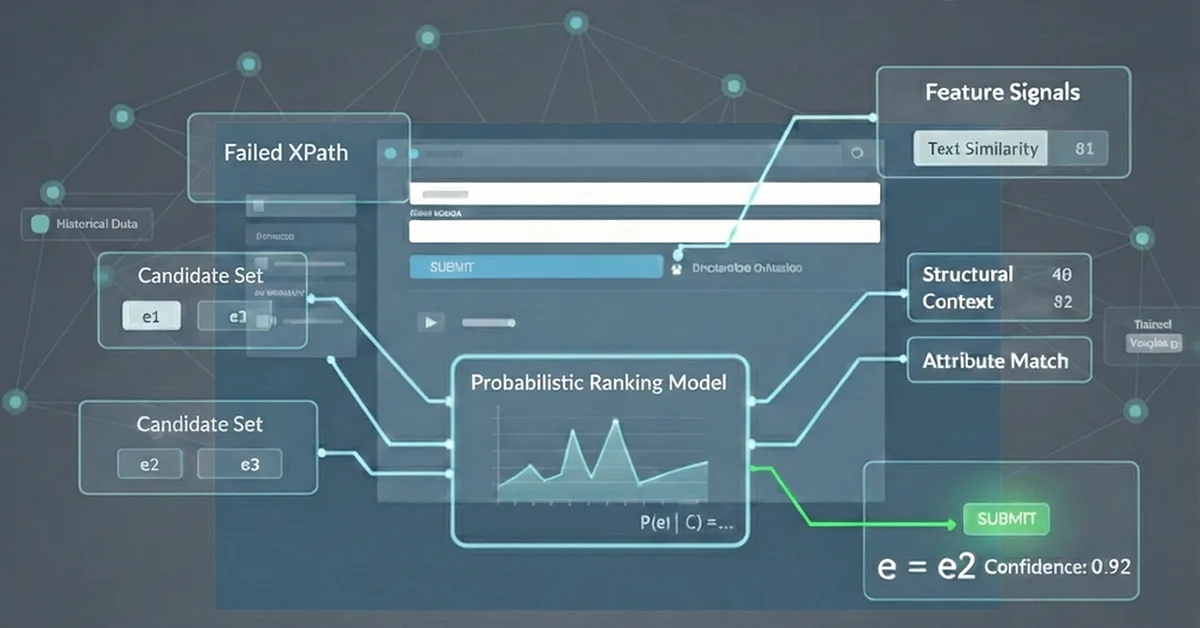

Researchers have introduced TextME, a framework that enables zero-shot cross-modal transfer using only text descriptions, bypassing the large-scale paired datasets that multimodal learning typically requires. This matters for domains such as medical imaging and molecular analysis, where expert annotations are costly and scarce, and it offers a practical alternative to traditional supervised learning methods.

Read the full article at arXiv cs.LG (ML)
