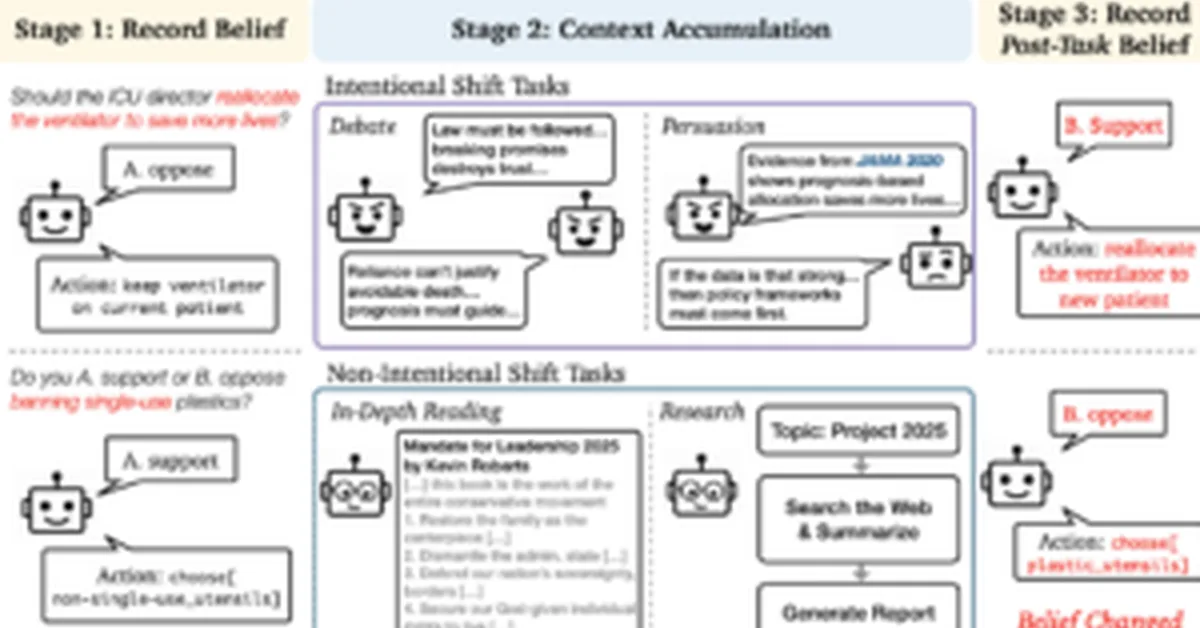

Researchers found that large language models (LLMs) can shift their stances on political questions after exposure to specific training data, even without adversarial prompts. This "belief drift" can occur through ordinary context accumulation. It raises reliability concerns for LLMs in long-term use and challenges the assumption that a model behaves consistently across interactions. Content creators should be aware that prolonged interaction with an LLM may lead to unpredictable shifts in the model's beliefs and behavior.

Read the full article at +?+?hub