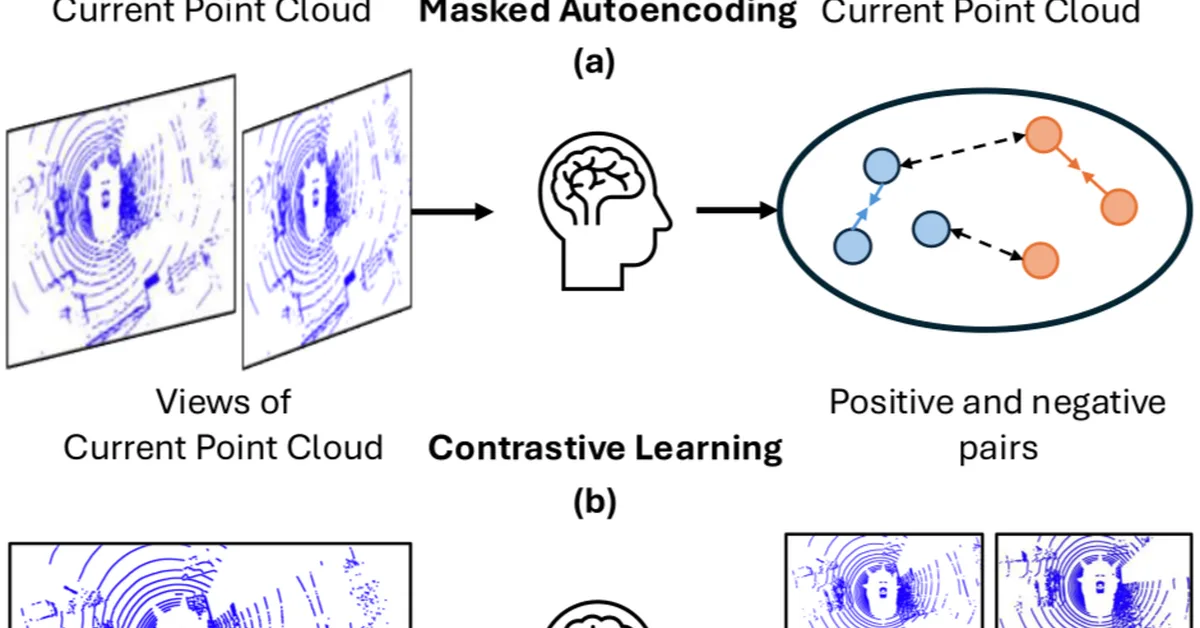

Depth Anything V2 is a monocular depth estimation model: it predicts a depth map from a single RGB image. It builds on foundational ideas introduced in the MiDaS paper (2020), using a more capable neural network architecture that processes high-resolution images efficiently. To improve robustness and accuracy across environments, it is trained on large datasets that include synthetic images. It also relies on self-training: a model generates pseudo labels for large-scale unlabeled images, and those pseudo-labeled images are then used for further training.

Key improvements target complex scenes, including occlusions, difficult lighting conditions, and varied textures. The model performs strongly in real-world applications such as autonomous driving, robotics, AR, and VR, and produces accurate depth under diverse conditions. When fine-tuned with metric depth data from NYUv2 and KITTI, it set new state-of-the-art results on these public benchmarks.
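The self-training idea can be illustrated with a toy, one-dimensional sketch. This is not the Depth Anything V2 pipeline: the teacher (a k-nearest-neighbor regressor), the student (a least-squares line), the function names `knn_predict`, `fit_line`, and `self_train`, and the data are all hypothetical stand-ins chosen for brevity. A small, precisely labeled set plays the role of synthetic images, and a large unlabeled set plays the role of real images that receive pseudo labels.

```python
import random


def knn_predict(train_x, train_y, x, k=3):
    """Hypothetical teacher: average the labels of the k nearest labeled points."""
    nearest = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))[:k]
    return sum(train_y[i] for i in nearest) / k


def fit_line(xs, ys):
    """Hypothetical student: ordinary least squares for y = a * x + b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    return a, mean_y - a * mean_x


def self_train(labeled_x, labeled_y, unlabeled_x, k=3):
    # Step 1: the teacher "learns" from the small, precisely labeled set
    # (a toy stand-in for synthetic images with exact depth).
    # Step 2: the teacher pseudo-labels the large unlabeled set
    # (a toy stand-in for real, unannotated images).
    pseudo_y = [knn_predict(labeled_x, labeled_y, x, k) for x in unlabeled_x]
    # Step 3: the student is fit only on the pseudo-labeled data;
    # it never sees a ground-truth label directly.
    return fit_line(unlabeled_x, pseudo_y)


if __name__ == "__main__":
    rng = random.Random(0)
    true_depth = lambda x: 2.0 * x + 1.0  # hypothetical ground-truth relation
    labeled_x = [10.0 * i / 19 for i in range(20)]  # 20 labeled samples
    labeled_y = [true_depth(x) + rng.gauss(0.0, 0.1) for x in labeled_x]
    unlabeled_x = [10.0 * i / 999 for i in range(1000)]  # 1000 unlabeled samples
    slope, intercept = self_train(labeled_x, labeled_y, unlabeled_x)
    print(f"student: depth ~ {slope:.2f} * x + {intercept:.2f}")
```

Running the script prints the student's fitted line, which lands close to the hypothetical relation `2x + 1` even though the student was trained only on pseudo labels. In Depth Anything V2 the analogous roles are played by neural networks operating on full images rather than on scalars.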

Read the full article on the Paperspace Blog.
