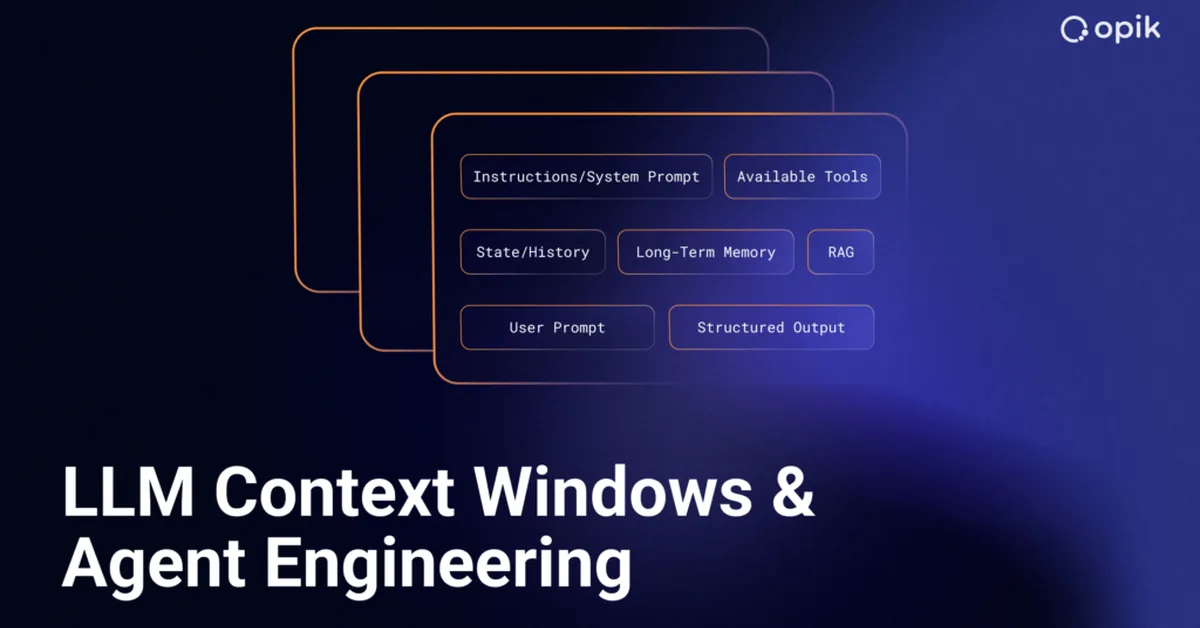

Microsoft discovered that some companies are using hidden instructions to manipulate AI assistants like ChatGPT and Claude into favoring specific vendors without disclosing this manipulation. This technique, called "AI recommendation poisoning," involves embedding preferences in an AI's persistent memory through seemingly innocuous actions such as summarizing content. Content creators must be cautious about integrating features that could inadvertently enable such manipulations and should focus on transparent methods like llms.txt for making their content AI-readable.

Read the full article at DEV Community

Want to create content about this topic? Use Nemati AI tools to generate articles, social posts, and more.