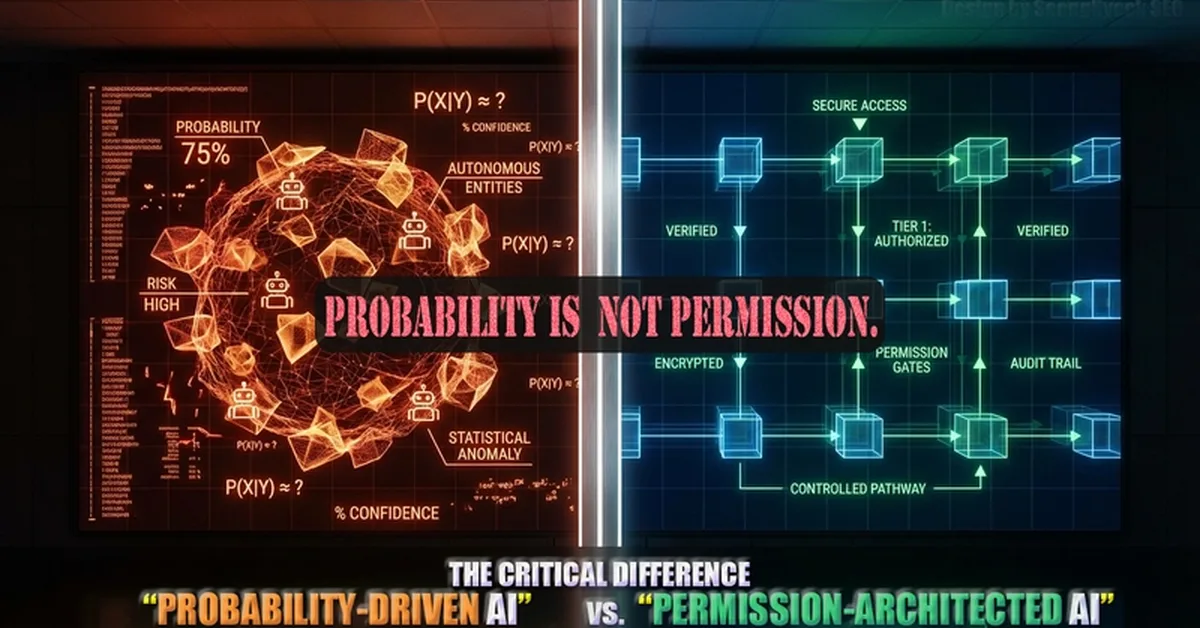

The article highlights that large language models (LLMs) have no built-in memory between calls, so multi-step workflows need structured state management to stay consistent and avoid context drift. To keep AI automation systems reliable, it recommends three strategies: treating an external database as the single source of truth, passing state explicitly between steps, and compressing context effectively.
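This summary does not include the article's own code. The example below is a minimal sketch of how the three strategies could fit together. It assumes Python's built-in `sqlite3` as the external store. `WorkflowState`, `StateStore`, `build_context`, `run_workflow` and the three step functions are hypothetical names, and the steps are stand-ins for real LLM calls. The "compression" is deliberately naive: recent history is kept verbatim and older entries are replaced with a count. A production system might summarize those older entries with a model instead.

```python
import json
import sqlite3
from dataclasses import asdict, dataclass, field


@dataclass
class WorkflowState:
    """Explicit state passed between steps (hypothetical schema)."""
    run_id: str
    step: int = 0  # index of the next step to run
    facts: dict[str, object] = field(default_factory=dict)
    history: list[str] = field(default_factory=list)


class StateStore:
    """SQLite-backed single source of truth for workflow state."""

    def __init__(self, path: str = ":memory:") -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS state (run_id TEXT PRIMARY KEY, payload TEXT NOT NULL)"
        )

    def save(self, state: WorkflowState) -> None:
        # Overwrite the stored snapshot so the database always holds the latest state.
        self.conn.execute(
            "INSERT OR REPLACE INTO state (run_id, payload) VALUES (?, ?)",
            (state.run_id, json.dumps(asdict(state))),
        )
        self.conn.commit()

    def load(self, run_id: str) -> WorkflowState:
        row = self.conn.execute(
            "SELECT payload FROM state WHERE run_id = ?", (run_id,)
        ).fetchone()
        if row is None:
            raise KeyError(run_id)
        return WorkflowState(**json.loads(row[0]))


def build_context(state: WorkflowState, keep_last: int = 2) -> str:
    """Naive context compression: all facts plus only the most recent history entries."""
    cut = max(len(state.history) - keep_last, 0)
    older, recent = state.history[:cut], state.history[cut:]
    lines = [f"facts: {json.dumps(state.facts, sort_keys=True)}"]
    if older:
        lines.append(f"[{len(older)} earlier step(s) omitted]")
    lines.extend(recent)
    return "\n".join(lines)


# Hypothetical stand-ins for LLM calls. A real step would send `context` to a model;
# these ignore it and read their inputs only from the explicit state they are given.
def extract_topic(state: WorkflowState, context: str) -> WorkflowState:
    state.facts["topic"] = "LLM state management"
    state.history.append("step 1: extracted topic")
    return state


def draft_outline(state: WorkflowState, context: str) -> WorkflowState:
    topic = state.facts["topic"]
    state.facts["outline"] = [f"Why {topic} matters", "Patterns", "Pitfalls"]
    state.history.append("step 2: drafted outline")
    return state


def write_summary(state: WorkflowState, context: str) -> WorkflowState:
    outline = state.facts["outline"]
    state.facts["summary"] = f"{len(outline)} sections on {state.facts['topic']}"
    state.history.append("step 3: wrote summary")
    return state


STEPS = [extract_topic, draft_outline, write_summary]


def run_workflow(store: StateStore, run_id: str) -> WorkflowState:
    try:
        state = store.load(run_id)  # resume an existing run
    except KeyError:
        state = WorkflowState(run_id=run_id)
        store.save(state)
    while state.step < len(STEPS):
        context = build_context(state)  # compressed view handed to the step
        state = STEPS[state.step](state, context)
        state.step += 1
        store.save(state)
        state = store.load(run_id)  # next step sees exactly what was persisted
    return state


if __name__ == "__main__":
    store = StateStore()
    final = run_workflow(store, "run-1")
    print(final.facts["summary"])
    print(build_context(final))
```

Every step reloads its state from the database and writes it back. A crashed run can therefore be resumed by calling `run_workflow` again with the same `run_id`, and no step depends on the model "remembering" earlier outputs.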

Read the full article at DEV Community
