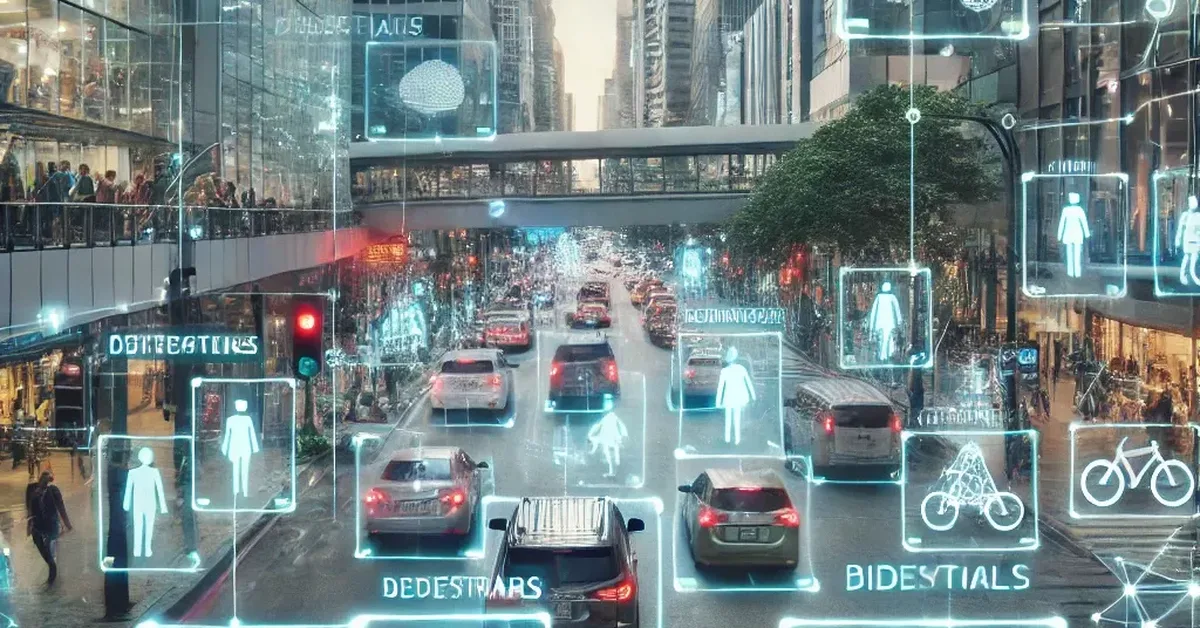

Researchers introduced UDVideoQA, a dataset that captures urban traffic dynamics from 16 hours of real-world footage. It is designed to evaluate the spatio-temporal reasoning and privacy-preservation capabilities of video language models. Key findings reveal a gap between the models' abstract inference skills and their basic visual understanding. They also suggest that smaller models fine-tuned on UDVideoQA can potentially achieve performance comparable to larger ones.

Read the full article at arXiv cs.CV (Vision)
