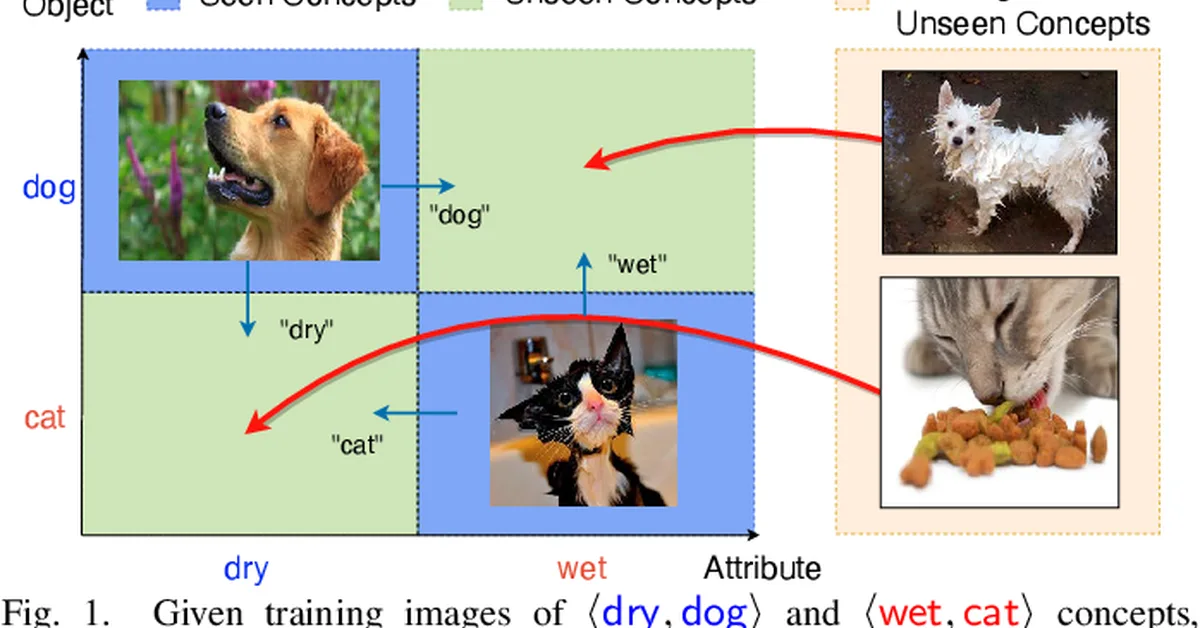

Researchers introduced WARM-CAT, a method for Compositional Zero-Shot Learning (CZSL) that accumulates knowledge from unsupervised data and uses it to adjust multimodal prototypes during testing, which improves adaptability and performance under distribution shift. The approach initializes a priority queue with training images and generates visual prototypes for unseen compositions, and it reports state-of-the-art results on benchmark datasets. The takeaway for content creators covering this work: accumulating multimodal knowledge at test time can help models adapt in zero-shot learning scenarios.
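To make the idea of a priority queue feeding test-time prototype updates more concrete, here is a minimal sketch. It is not the WARM-CAT implementation: the class `PrototypeQueue`, the functions `predict` and `adapt`, the confidence measure (softmax over cosine similarities), the `temperature` value, and the mean-of-queue prototype are all assumptions chosen for illustration, and the two-dimensional features stand in for real image embeddings.

```python
import heapq
import itertools
import math


def normalize(v):
    """Scale a vector to unit length (zero vectors are left unchanged)."""
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]


def cosine(a, b):
    """Cosine similarity between two feature vectors."""
    return sum(x * y for x, y in zip(normalize(a), normalize(b)))


class PrototypeQueue:
    """Hypothetical bounded per-label queue keeping the most confident features."""

    def __init__(self, capacity=4):
        self.capacity = capacity
        self.heaps = {}  # label -> min-heap of (confidence, tiebreak, feature)
        self._counter = itertools.count()  # unique tiebreak for equal confidences

    def push(self, label, feature, confidence):
        heap = self.heaps.setdefault(label, [])
        item = (confidence, next(self._counter), feature)
        if len(heap) < self.capacity:
            heapq.heappush(heap, item)
        elif confidence > heap[0][0]:
            # Queue is full: evict the least confident entry.
            heapq.heapreplace(heap, item)

    def prototype(self, label):
        """Visual prototype for a label: the mean of its queued features."""
        feats = [f for _, _, f in self.heaps[label]]
        dim = len(feats[0])
        return [sum(f[i] for f in feats) / len(feats) for i in range(dim)]


def predict(queue, feature, temperature=0.1):
    """Return (label, confidence) from a softmax over prototype similarities."""
    labels = list(queue.heaps)
    logits = [cosine(feature, queue.prototype(l)) / temperature for l in labels]
    top = max(logits)
    exps = [math.exp(s - top) for s in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    best = max(range(len(labels)), key=probs.__getitem__)
    return labels[best], probs[best]


def adapt(queue, feature):
    """Classify an unlabeled test feature, then store it under its predicted label."""
    label, confidence = predict(queue, feature)
    queue.push(label, feature, confidence)
    return label, confidence


# Seed the queue with (made-up) training features, then adapt on one test feature.
queue = PrototypeQueue(capacity=3)
queue.push("red apple", [1.0, 0.0], 1.0)
queue.push("green pear", [0.0, 1.0], 1.0)
label, confidence = adapt(queue, [0.9, 0.1])
print(label, round(confidence, 3), queue.prototype(label))
```

Each call to `adapt` shifts the prototype of the predicted label toward the incoming test feature, so later predictions use prototypes that reflect the test distribution. Using a bounded min-heap keeps only the highest-confidence entries per label, which is one simple way to limit the effect of wrong pseudo-labels.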

Read the full article at arXiv cs.CV (Vision)
