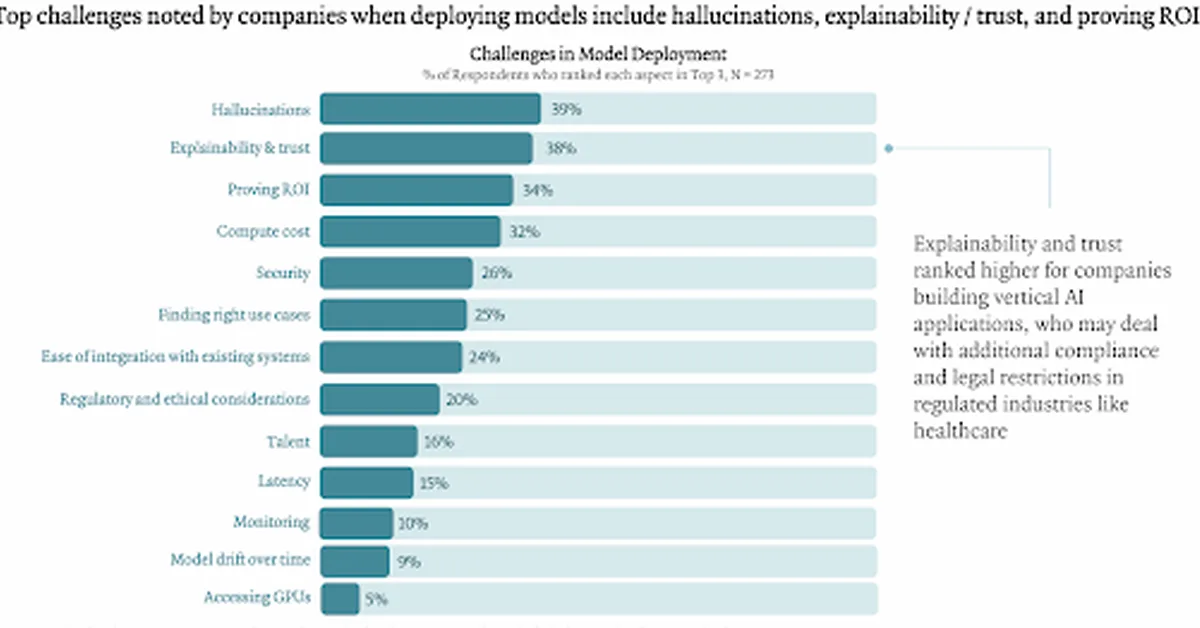

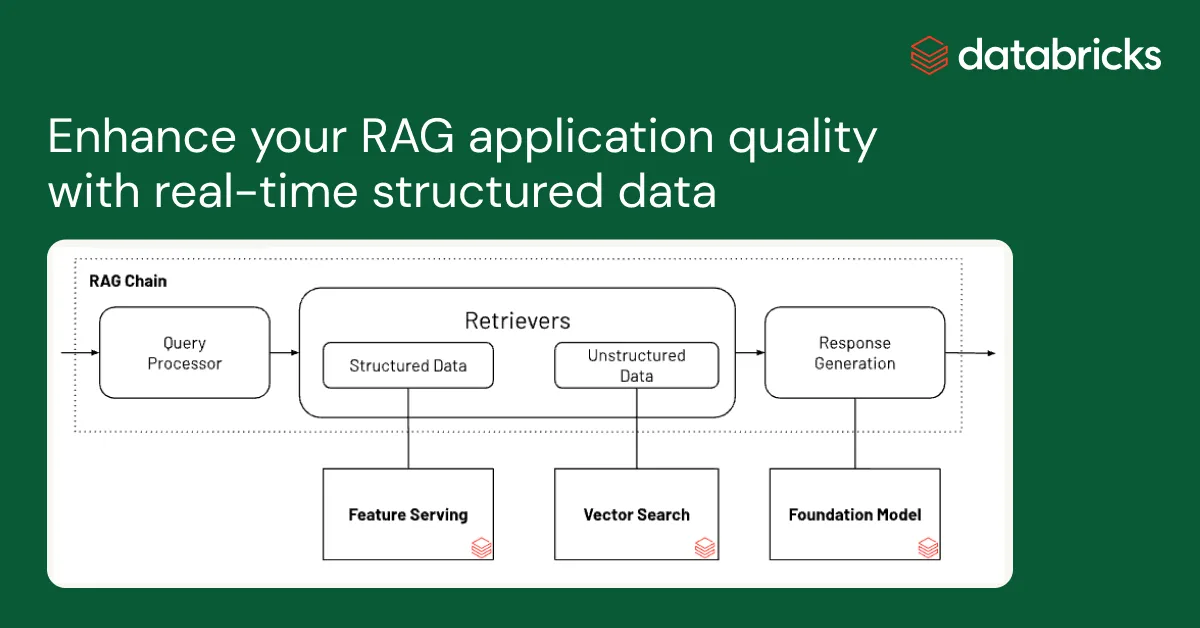

AI hallucinations are instances in which an AI system generates false information, a consequence of limitations in how these systems are designed. Such errors can spread misinformation, cause economic losses, and raise safety concerns in critical domains like healthcare. To mitigate them, organizations should use high-quality training data; adopt architectural strategies such as Retrieval-Augmented Generation (RAG); test models rigorously with A/B testing, gold-standard comparisons, and quantitative metrics; and integrate human oversight into AI workflows so that the resulting systems remain reliable and trustworthy.
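As a rough illustration of the gold-standard comparison and quantitative metric mentioned above, the sketch below scores model answers against reference answers and flags mismatches for human review. The function names (`normalize`, `exact_match_rate`, `flag_for_review`) and the sample data are hypothetical, and exact match is only one simple metric assumed here for illustration; the article may have different metrics in mind.

```python
import string


def normalize(text: str) -> str:
    """Lowercase, strip ASCII punctuation, and collapse whitespace."""
    text = text.lower()
    text = text.translate(str.maketrans("", "", string.punctuation))
    return " ".join(text.split())


def exact_match_rate(predictions: list[str], gold: list[str]) -> float:
    """Fraction of predictions that match the gold answer after normalization."""
    if len(predictions) != len(gold):
        raise ValueError("predictions and gold must have the same length")
    if not gold:
        return 0.0
    matches = sum(normalize(p) == normalize(g) for p, g in zip(predictions, gold))
    return matches / len(gold)


def flag_for_review(predictions: list[str], gold: list[str]) -> list[int]:
    """Indices of answers that disagree with the gold standard (for human oversight)."""
    return [
        i
        for i, (p, g) in enumerate(zip(predictions, gold))
        if normalize(p) != normalize(g)
    ]


if __name__ == "__main__":
    # Hypothetical sample data, not taken from the article.
    gold_answers = ["Paris", "4", "Aspirin"]
    model_answers = ["paris.", "5", "Aspirin"]
    print(exact_match_rate(model_answers, gold_answers))
    print(flag_for_review(model_answers, gold_answers))
```

Exact match is deliberately strict: it undercounts answers that are correct but phrased differently, so in practice the flagged items would go to a human reviewer rather than being treated as confirmed hallucinations.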

Read the full article at Towards AI - Medium
