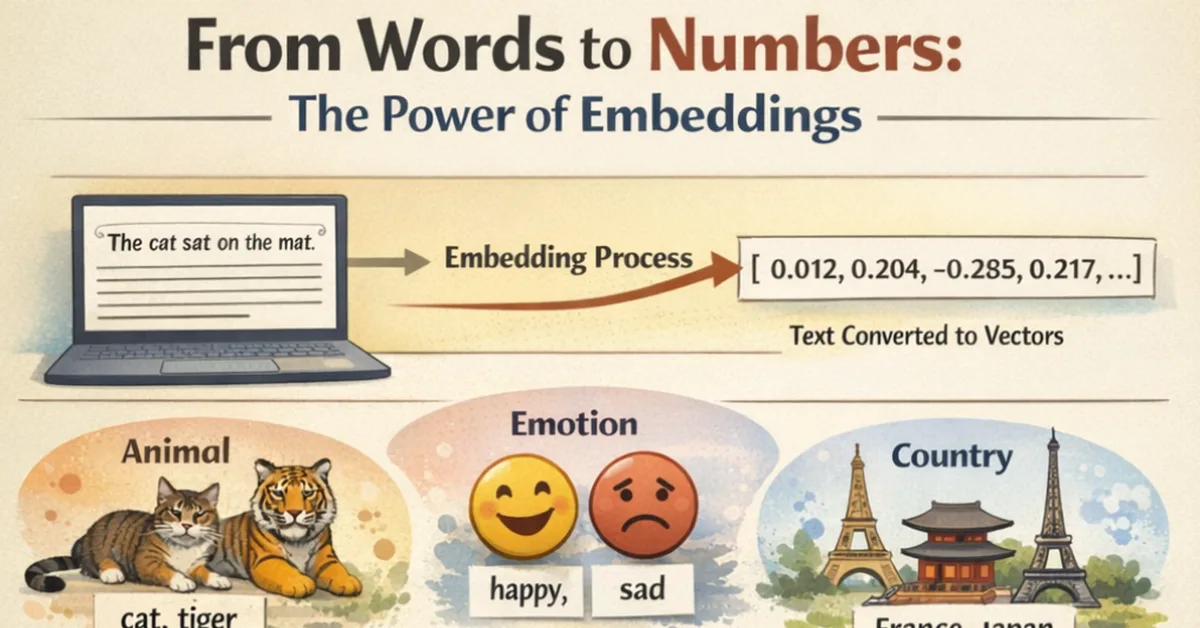

Word embeddings are numerical representations of text that capture semantic meaning through geometric relationships in a high-dimensional vector space. Models learn them from patterns of word usage in large corpora, so words that appear in similar contexts end up with nearby vectors. Static techniques such as Word2Vec assign each word a single fixed vector based on the contexts it appears in, and the similarity between two words can then be measured with cosine similarity or a dot product. Modern models such as BERT and GPT instead generate contextual embeddings, in which the vector for a word changes with the surrounding text; this overcomes a key limitation of static embeddings, which give a word like "bank" the same vector in every sentence. These advances underpin applications such as search engines, bias detection, and multilingual natural language processing systems.
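To make the similarity measures concrete, here is a minimal sketch of the dot product and cosine similarity in plain Python. The three-dimensional vectors in `toy_embeddings` are made up for illustration and are not taken from Word2Vec or any trained model; real embeddings are learned and typically have hundreds of dimensions.

```python
import math

# Hypothetical, hand-picked vectors for illustration only.
# Real embeddings are learned from data and are much larger.
toy_embeddings = {
    "king": [0.8, 0.6, 0.1],
    "queen": [0.7, 0.7, 0.1],
    "apple": [0.1, 0.2, 0.9],
}


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def cosine_similarity(u, v):
    """Cosine of the angle between u and v: dot(u, v) / (|u| * |v|)."""
    norm_u = math.sqrt(dot(u, u))
    norm_v = math.sqrt(dot(v, v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot(u, v) / (norm_u * norm_v)


sim_royal = cosine_similarity(toy_embeddings["king"], toy_embeddings["queen"])
sim_fruit = cosine_similarity(toy_embeddings["king"], toy_embeddings["apple"])

print(f"king vs queen: {sim_royal:.3f}")
print(f"king vs apple: {sim_fruit:.3f}")
```

With these toy values, "king" scores closer to "queen" than to "apple". Cosine similarity ignores vector length and compares direction only, whereas the raw dot product also grows with magnitude, so the two measures rank pairs identically only when the vectors are normalized to unit length.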

Read the full article at Towards AI - Medium
