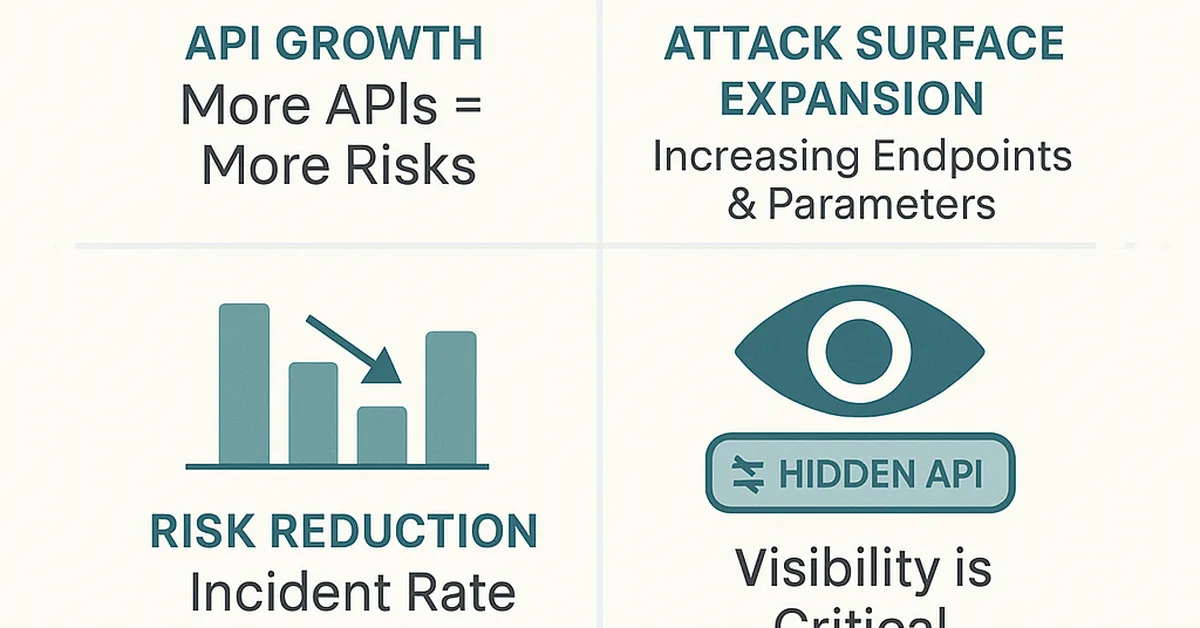

A new study evaluates safety alignment in large language models (LLMs) and large reasoning models (LRMs), identifying key vulnerabilities and suggesting that integrated reasoning mechanisms improve model security. The research highlights significant risks associated with certain attack techniques and emphasizes the need for explicit safety constraints during training to keep safety features from degrading.

Read the full article at arXiv cs.CR (Cryptography & Security)
