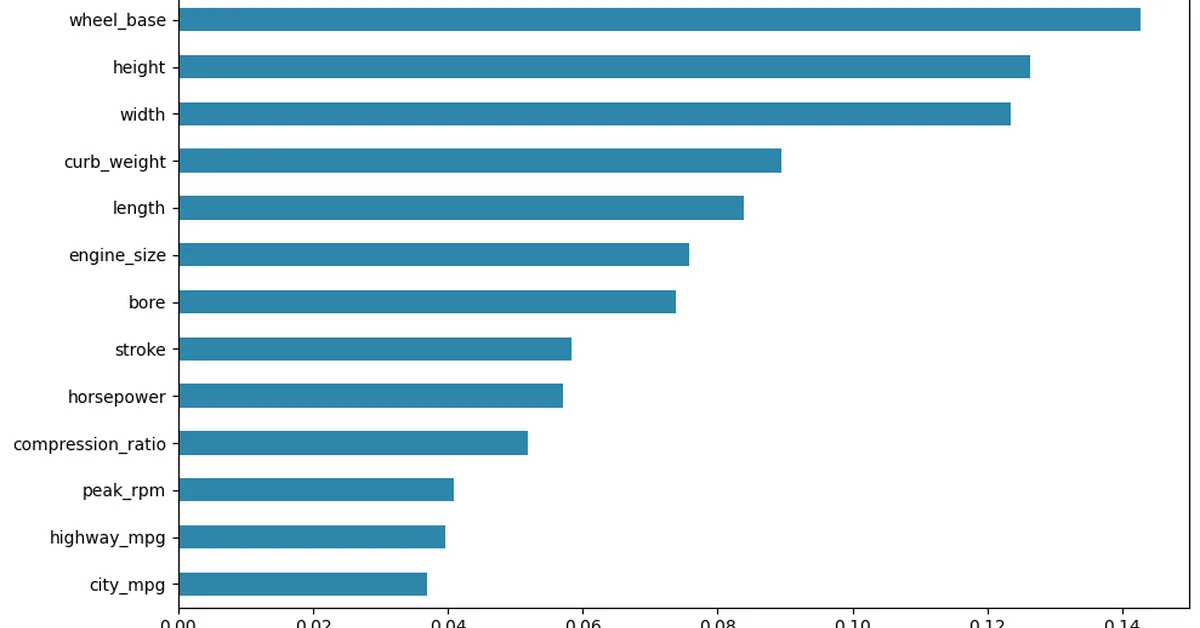

The article discusses common pitfalls in using explainable AI (XAI) techniques such as SHAP and LIME to understand defect predictions in manufacturing quality control. It highlights three issues: LIME explanations that change from one run to the next on the same prediction, SHAP values that mislead when features are highly correlated, and global summary plots that mask part-specific patterns. The core problem it identifies is that model explanations have no ground truth, so they tend to be accepted without verification. The recommended workflow is to measure explanation consistency, account for feature correlation, segment the analysis by part category, and validate explanations against known defect drivers or failure modes.
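The first step of that workflow, measuring explanation consistency, can be illustrated with a minimal sketch. It is not taken from the article. It assumes the explainer has already been run several times on the same prediction and that each run is stored as a mapping from feature name to attribution. The metric (mean pairwise Jaccard overlap of the top-k features), the function names, the feature names, and the attribution values are all hypothetical choices made for illustration.

```python
from itertools import combinations


def top_k_features(attributions, k):
    """Return the set of the k feature names with the largest absolute attribution."""
    ranked = sorted(attributions, key=lambda name: abs(attributions[name]), reverse=True)
    return set(ranked[:k])


def explanation_stability(runs, k=3):
    """Mean pairwise Jaccard similarity of the top-k feature sets across runs.

    1.0 means every run selected the same top-k features; values near 0
    mean the runs largely disagree about which features matter.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    if len(runs) < 2:
        raise ValueError("need at least two runs to measure stability")
    top_sets = [top_k_features(run, k) for run in runs]
    scores = [len(a & b) / len(a | b) for a, b in combinations(top_sets, 2)]
    return sum(scores) / len(scores)


# Hypothetical attributions from repeated explainer runs on one prediction.
run_a = {"temp": 0.50, "pressure": 0.30, "speed": -0.20, "humidity": 0.01}
run_b = {"temp": 0.45, "pressure": 0.28, "speed": -0.25, "humidity": 0.02}
run_c = {"temp": 0.10, "pressure": 0.02, "speed": 0.40, "humidity": -0.30}

stable_score = explanation_stability([run_a, run_b], k=3)    # same top-3 in both runs
unstable_score = explanation_stability([run_a, run_c], k=3)  # top-3 sets only partly overlap
```

A low score suggests the explanation for that part should not be trusted as-is. What counts as "low" is a judgment call that depends on k and on the number of features.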

Read the full article at Towards AI - Medium
