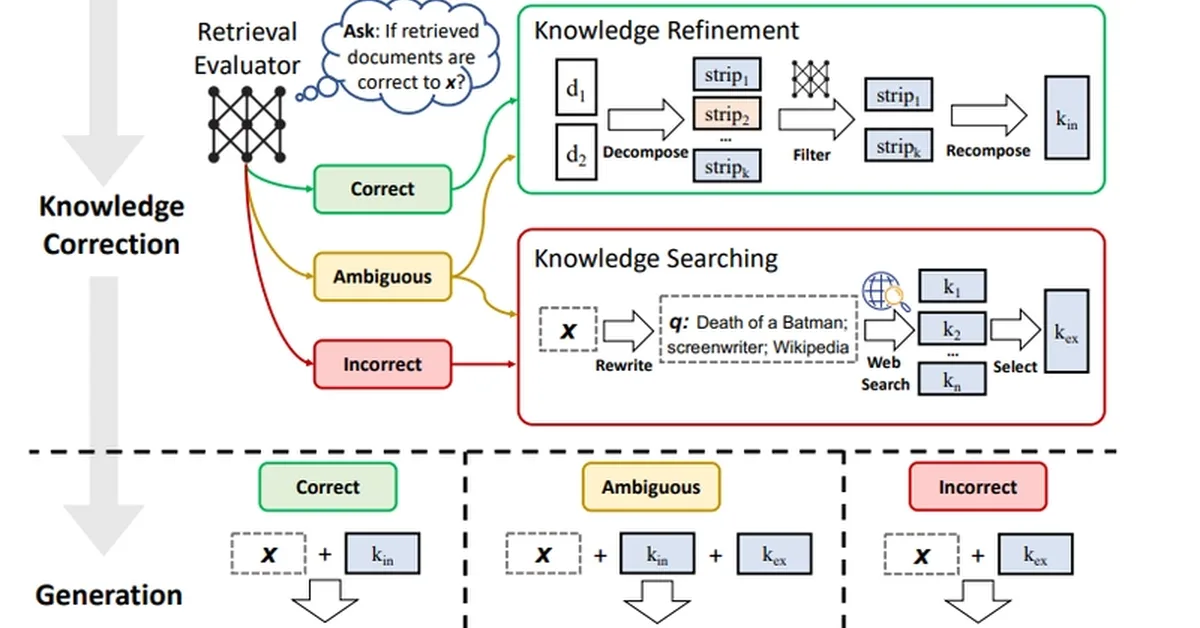

CRAG (Corrective Retrieval-Augmented Generation) adds a retrieval evaluation step to pipelines built on dense retrievers: before retrieved documents are used, it assesses how relevant they are to the user query. A scoring function classifies the retrieval as relevant, irrelevant, or ambiguous, and that verdict determines whether the system falls back on web search for better results. By checking what was retrieved instead of trusting it blindly, much as a person would, the method improves the performance of document retrieval systems.
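The score-then-decide flow can be sketched in a few lines of Python. This is a minimal illustration, not CRAG's actual evaluator: `overlap_score` is a hypothetical stand-in for a learned scoring function, the thresholds `upper` and `lower` are arbitrary values chosen for the example, and the rule that both irrelevant and ambiguous verdicts trigger web search is an assumption.

```python
from enum import Enum
from typing import Callable, List


class Verdict(Enum):
    RELEVANT = "relevant"
    IRRELEVANT = "irrelevant"
    AMBIGUOUS = "ambiguous"


def overlap_score(query: str, document: str) -> float:
    """Hypothetical scorer: fraction of query terms that appear in the document.

    A real system would use a trained relevance model here.
    """
    query_terms = set(query.lower().split())
    doc_terms = set(document.lower().split())
    if not query_terms:
        return 0.0
    return len(query_terms & doc_terms) / len(query_terms)


def judge_retrieval(
    query: str,
    documents: List[str],
    score: Callable[[str, str], float] = overlap_score,
    upper: float = 0.7,  # assumed threshold, not taken from the article
    lower: float = 0.3,  # assumed threshold, not taken from the article
) -> Verdict:
    """Classify a retrieval result by the score of its best document."""
    if not documents:
        return Verdict.IRRELEVANT
    best = max(score(query, doc) for doc in documents)
    if best >= upper:
        return Verdict.RELEVANT
    if best < lower:
        return Verdict.IRRELEVANT
    return Verdict.AMBIGUOUS


def needs_web_search(verdict: Verdict) -> bool:
    """Assumption: anything short of a clearly relevant retrieval falls back to web search."""
    return verdict is not Verdict.RELEVANT


if __name__ == "__main__":
    query = "corrective retrieval augmented generation"
    docs = ["retrieval augmented models"]
    verdict = judge_retrieval(query, docs)
    print(verdict.value, needs_web_search(verdict))
```

Taking the maximum score means a single strong document is enough to mark the retrieval as relevant; a stricter variant could require several documents to clear the upper threshold.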

Read the full article at DEV Community
