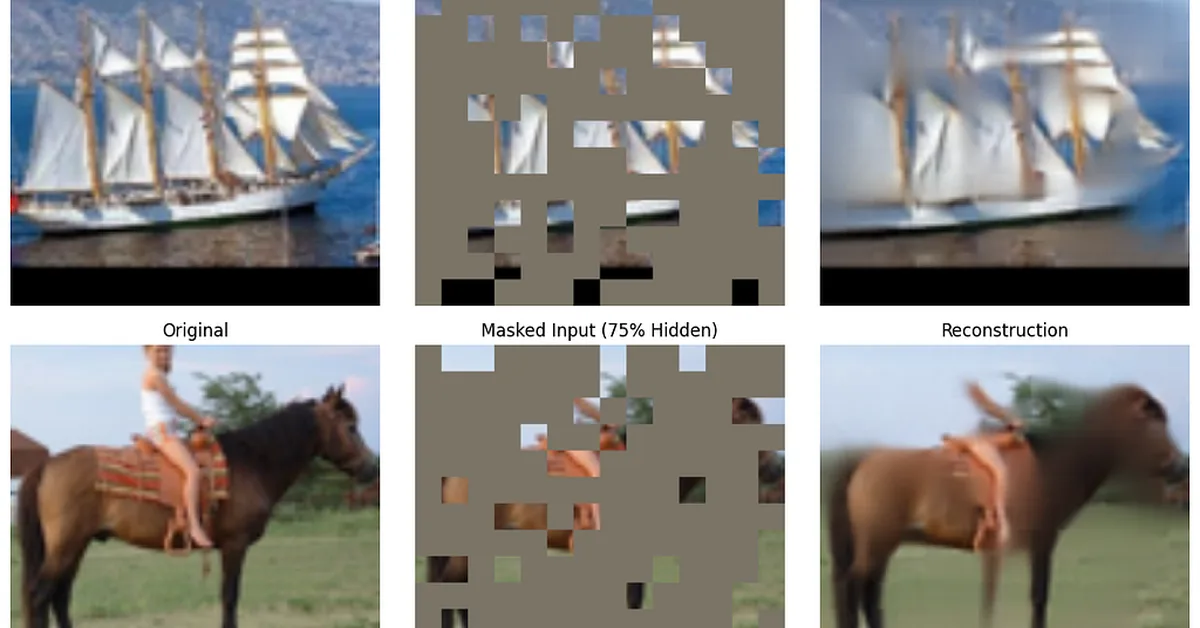

A researcher built a Masked Autoencoder (MAE) from scratch after discovering its effectiveness in self-supervised learning for computer vision tasks without extensive data labeling. By masking 75% of image pixels and training the model to reconstruct them, MAE achieves state-of-the-art performance efficiently on standard GPUs, offering content creators a powerful tool for pre-training large-scale visual models.

Read the full article at Towards AI - Medium

Want to create content about this topic? Use Nemati AI tools to generate articles, social posts, and more.