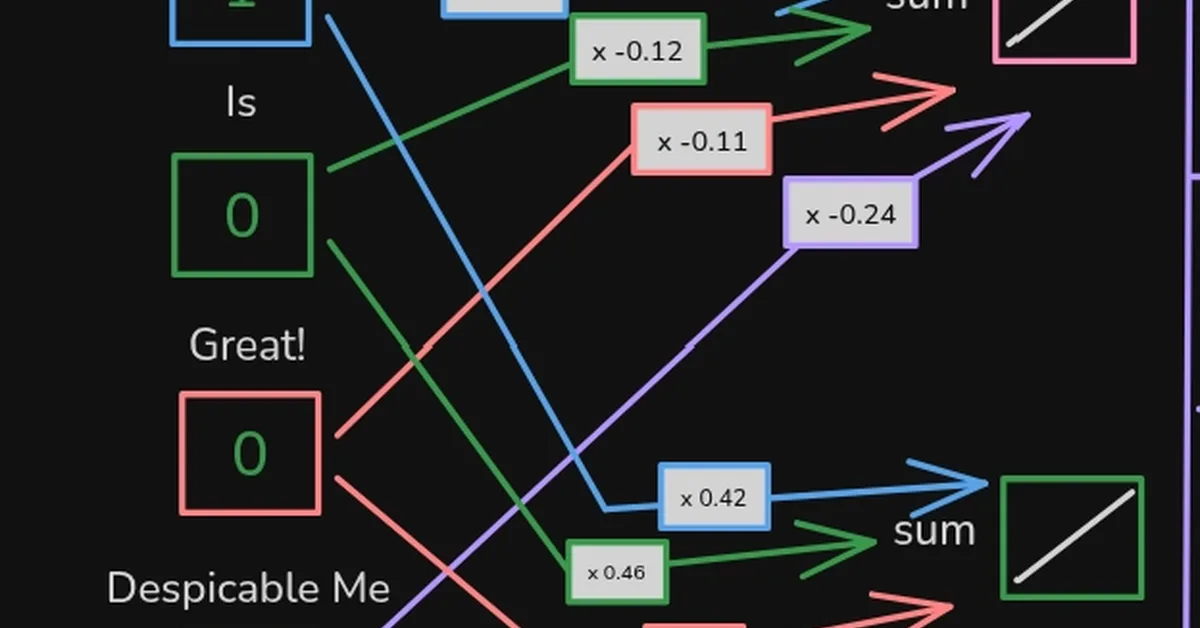

The article discusses how Transformers revolutionized AI by overcoming the limitations of Recurrent Neural Networks (RNNs), enabling parallel processing and effective handling of long texts. The key takeaway for content creators is to understand the Transformer architecture and its three variants (Encoder-only, Decoder-only, and Encoder-Decoder models) in order to make the most of generative AI tools like ChatGPT.

Read the full article at Towards AI - Medium
